Jeremy Fernsler began his career in visual effects over 20 years ago. He joined DNEG in 2017. His filmography includes projects such as STAR TREK: DISCOVERY, THE DARK CRYSTAL: THE AGE OF RESISTANCE and RUNAWAYS.

What is your background?

I graduated from film school in the late ’90s, spent some time in NYC working as a 3D artist for TV and commercials before getting into feature VFX work. Around that time there just weren’t many places in New York doing feature work, so it was an interesting time with everyone learning the ropes at once. For most of my artist career, I’ve been a generalist in both 3D and 2D, working across the entire pipeline – often at small places where you have to wear a lot of hats to get things done. I then stepped a bit sideways away from the industry for several years to teach animation and visual effects full-time at a university. In 2016, the opportunity at DNEG came up. We moved the family across the country and I started at DNEG LA when there were only a handful of people there. As our team grew and we started taking on more shows at once, I think my diverse background set me up to take on more supervisory roles there, and eventually to look after DNEG’s WESTWORLD work.

How did you and DNEG get involved on this show?

We first worked with Jay Worth and the WESTWORLD team when we tackled the robotic bulls for the Season 2 Super Bowl trailer. Our work on that led to us picking up work on Season 2 and established DNEG as both a very reliable and highly capable team. When production started on Season 3, we were called in early to bid on larger batches of work for the whole season.

How was the collaboration with the showrunner, the directors and VFX Supervisor Jay Worth?

Our experience with the whole WESTWORLD team was great. Jay, in particular, is very good about communicating what he’s after and what the team wants to see. Production put a lot of work into practical FX on location and Jay would always let us know when we absolutely had to retain that work and when we could take it over.

The team had very high expectations, but they know when something is going to be difficult. If we started to hit a wall while working around something that was in-camera, we would work up a solution and present it. Jay and Jonah knew that we had a strong team and we were all on the same page about making the show look as good as possible. They were open to new ideas and appreciated when we would take a shot at something new, all while standing firm on their vision for the show. Overall I think it all went very well.

What were their expectations and approach to the visual effects?

The WESTWORLD team has high expectations for the work and for everyone’s commitment to making the show look as good as it possibly can. We weren’t working to make things look ‘pretty’; our mandate was that it should all be completely grounded. To that end, we also did our best to maintain as much of the practical, in-camera work as possible. For the most part, we succeeded in that, although as the shots come together you really start to see the need for augmentation or a bit more energy in some places to cement the shot in place.

How did you organize the work with your VFX Producer and at the DNEG offices?

We were awarded all of the non-host robot characters in Season 3, and we knew all of the character animation work would be done in Vancouver. The animation supervisor was Ben Wiggs and we stayed in constant contact about notes, progress, and ideas during the show. Our producer, Brandon Grabowski, and I divided the work up to try to find the best fits we could across a few of our offices. The compositing, lighting, and FX teams in Los Angeles were all very strong – we kept as much of that here as possible. The character animation work mostly went through the Vancouver office – those files were then passed to the LA lighting and FX team, then the final comp would get finished here as well. Much of the animation and FX work was so intertwined that there would be a lot of passing back and forth, but everyone adapted very well and the workflows that evolved became pretty efficient. We also had a very talented crew in the Chennai, India office that we could pass work to. Because of the time difference, we tried to pass them work that could be fully contained in their office, from anim to final comp. We had many early morning or late evening phone calls with them to stay on top of things, and again that went really quite well. I have to give a shout-out to DNEG’s pipeline team for maintaining such a large, global infrastructure that keeps everyone on the right track.

How did you work with the art department to design the various robots?

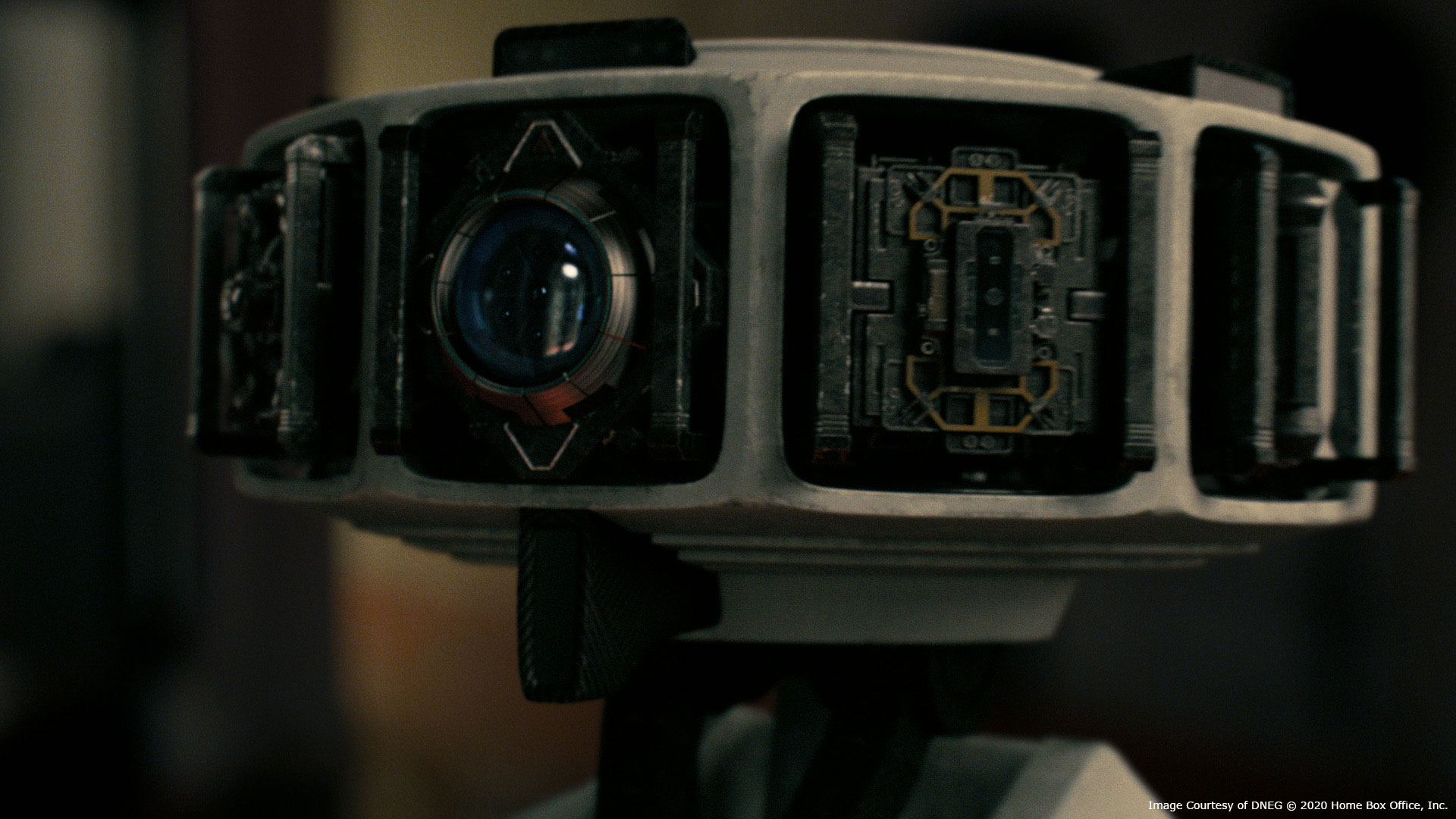

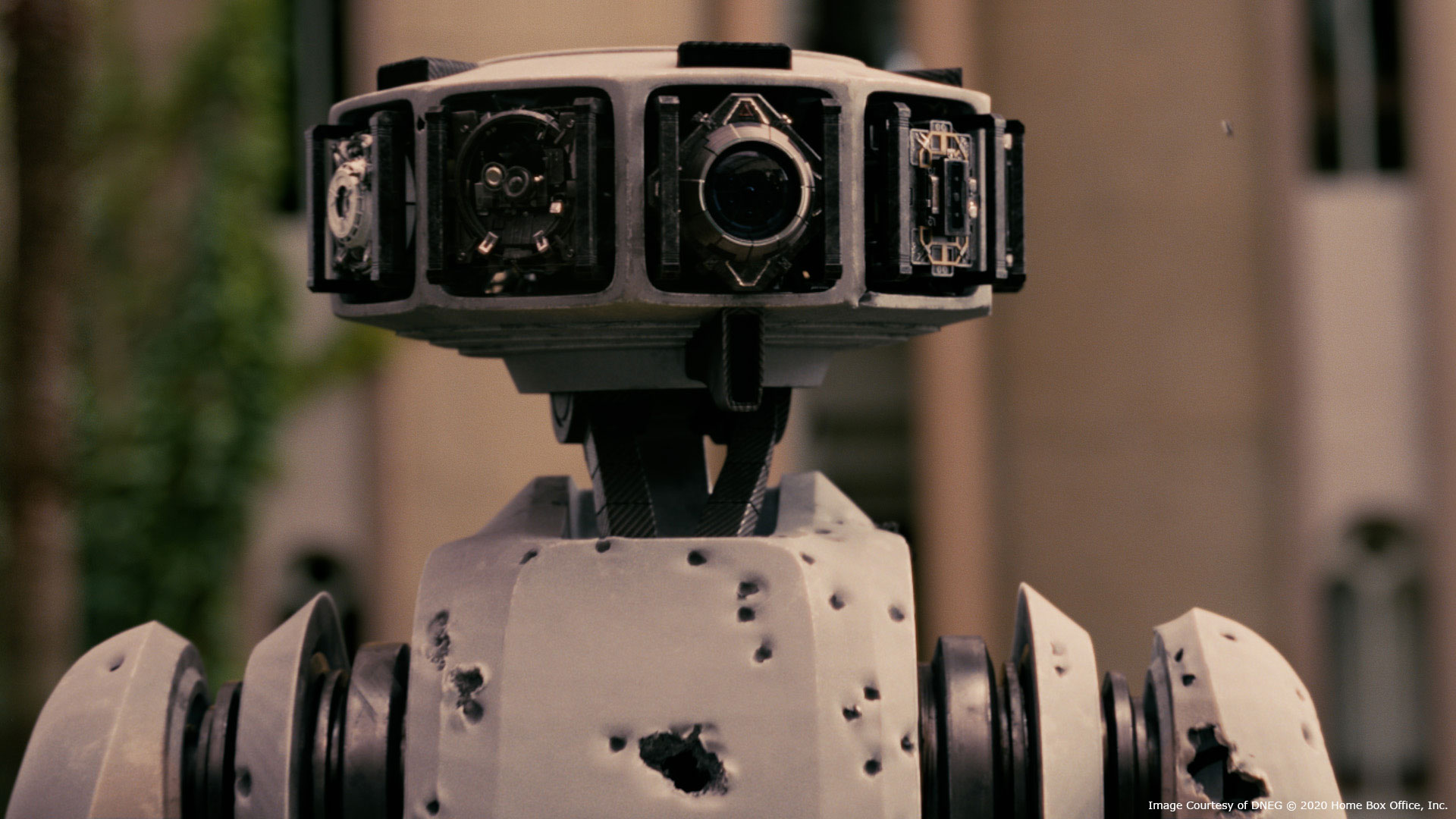

WESTWORLD’s art department would hand us renders, paintovers, and 3D models of their concept work. We would also get direction from Jay about elements of the concept that Jonah was particularly keen on. We’d pull all of that into our pipeline and then begin the conversation about what would and wouldn’t work in each part of the robot and propose solutions along the way. Our build and animation teams would work closely together to identify joints or geometry that might limit the range of motion and make modifications that stayed as true as possible to the original work. Other parts of the bots wouldn’t be as fleshed out – for example, Harriet’s design (the laboratory robot) had a very different head, but they were happy with the body. We were given a lot of freedom to figure that out and Daniel Kumiega, our 3D supervisor in LA, just crushed it with the design and build. Probably the robot that evolved the most was the mech. The original artwork had the spirit of what we would go after; however, the joint design and layout of that robot were very tricky, because we had to ensure that it retained its boxy feel, had an appropriate range of motion, and could theoretically pack up into 5 boxes. Many of these tweaks and changes would happen right up until the moment we absolutely had to lock it all down and deliver the shot.

Can you elaborate in detail on the creation of these robots?

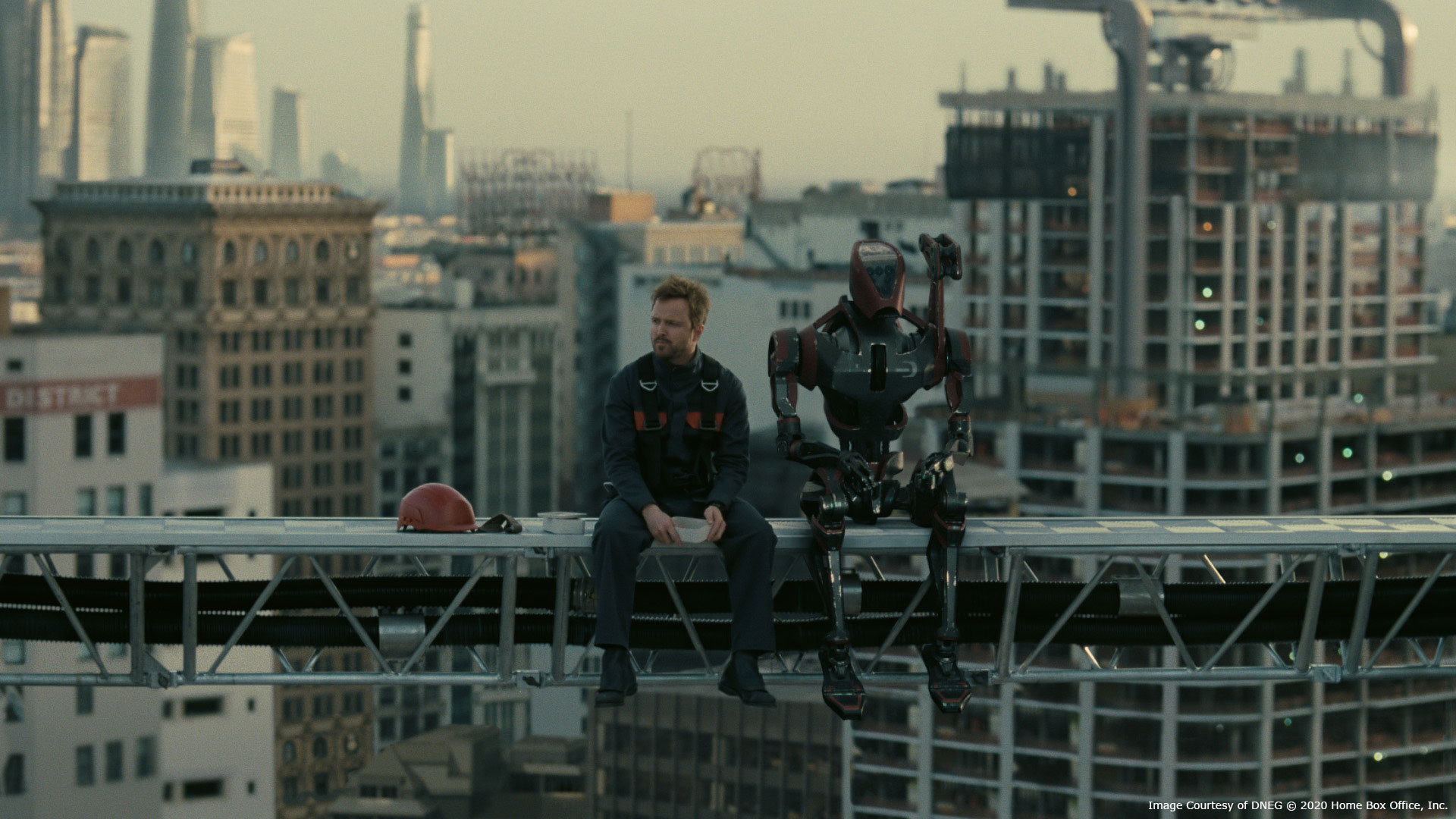

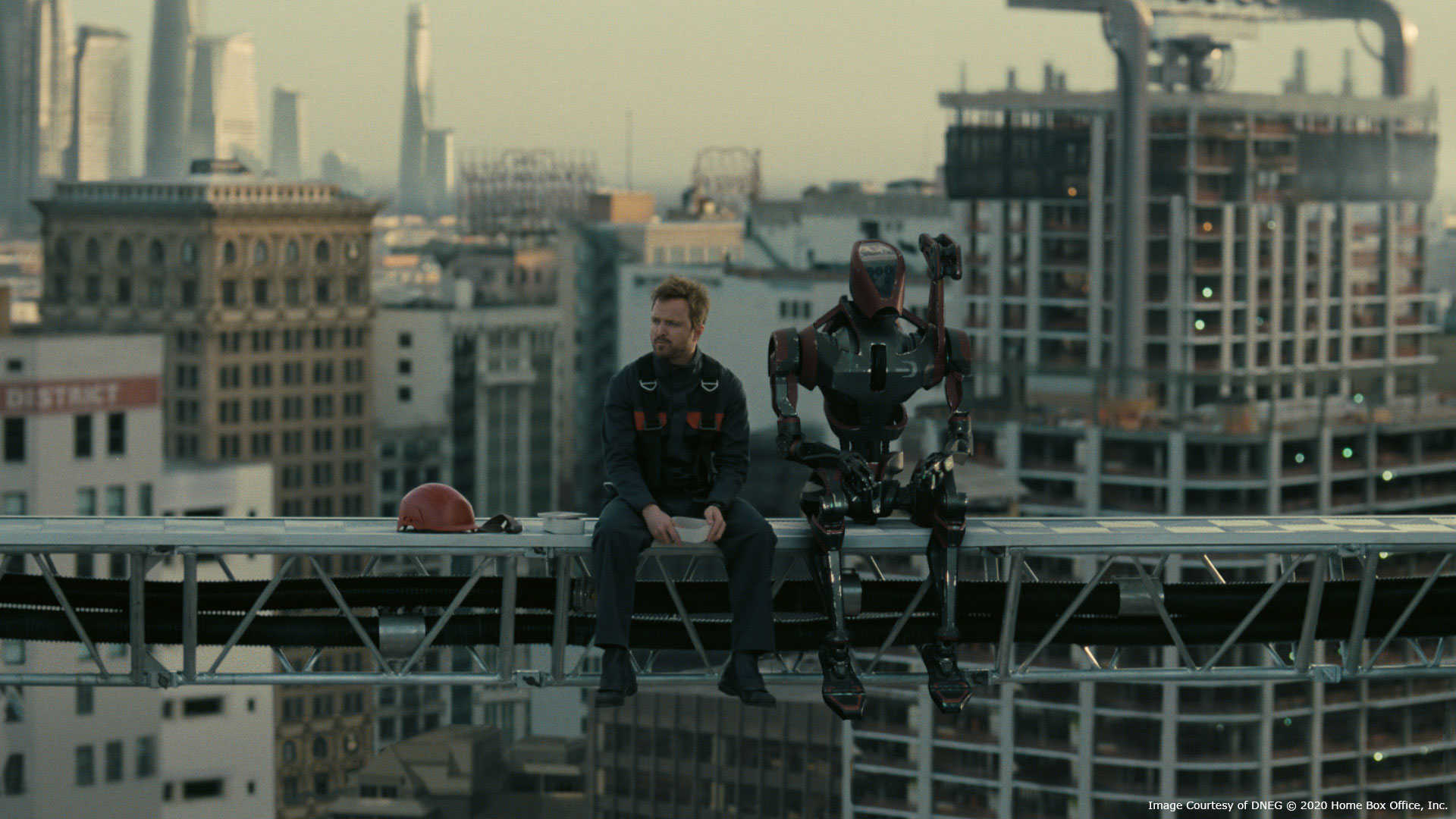

George, the construction robot, was by far the most straightforward. Because he’s such a utilitarian robot, the design needed to evoke that and seemed to fall together pretty well. Harriet, the laboratory robot, had more adjustments throughout her design. We ended up redesigning her hands to make them work better for the story, as well as going through a full range of materials for the body panels. Jonah didn’t want any of these robots to evoke a sense of other robots seen in cinema. That mostly related to the look of the materials on Harriet and the way the mech self-assembled. Our build team sent many combinations of materials to production before settling on a white, rubberized material. However, even as those shots progressed, we continued altering those materials until the end – tweaking the dirt levels until it felt just right.

Can you tell us more about their rigging and animations?

For the most part, rigging on the humanoid bots was fairly straightforward. We kept them very similar to our humanoid rig template so our animators could jump right in. Additionally, George and Harriet could accept motion capture data when needed. By far the biggest rigging challenge was the mech assembly process. We did our best to fit everything into the boxes with minimal collisions, but the animation of the process required a bespoke rig, built out specifically for that shot. As direction on that shot changed, the rig would also need adjustment. In DNEG’s typical feature pipeline these changes would need to be sent back pretty far in order to ripple back forward; however, the TV VFX team has a few shortcuts to stay nimble on television timelines.

Production fitted a motion capture artist with a magnetic capture suit on location as stand-ins for George and Harriet. Initially, the idea was to apply the motion capture data to our robots and kick that out as version 1. However, as the shots came in, sometimes the direction we received and the action we saw weren’t the same. Additionally, the robots were estimated to weigh somewhere between 400 and 600 pounds – so the 150-pound actors were considerably lighter on their feet than we needed. Ben and his team did an amazing job keyframe animating all of the robots. The motion capture performers provided a lot of reference and were incredibly useful for direction; however, the robots needed more heft and more raw power behind many of the motions. The Vancouver team did perform a few specific actions on their own motion capture stage to really nail a few tricky motions – mostly, though, everything was crafted through keyframe animation.

Did you receive specific indications and references for their animations?

When it was safe to do so, the stand-in performers provided a lot of reference for us. As the sequences came together we also received more direction as to each of the characters’ motivation. George was the least humanistic in his intention; however, we retained quite a bit of micro-movement and balance actions to keep him feeling grounded. Harriet starts out much more stiff, but once her evade/escape protocols are activated she is far more emotive. We can sense her panic and determination through her actions. The mech, however, was so large that all of the actors pantomimed as well as they could in reaction to an imaginary 14-foot-tall robot. The direction we received for the mech’s animation was “Graceful, but unstoppable. Like a gorilla.” Our team took that to heart and you can really see that play out in some of the more dramatic action that the mech performs.

How did you create the various shaders and textures?

Much of the texturing was done by hand in Mari for the robots and vehicles. We also used Substance Designer when we could, including for many of the props. All of that work was pulled into DNEG’s lookdev and rendering tool of choice, Clarisse, where it was tuned and optimized so that we could iterate faster. We also generated a set of textures that were friendly to the Maya viewport, which enabled us to turn around high quality playblasts that we could use for slap comps and early rounds of anim notes.

How was their presence simulated on-set for interactions and framing?

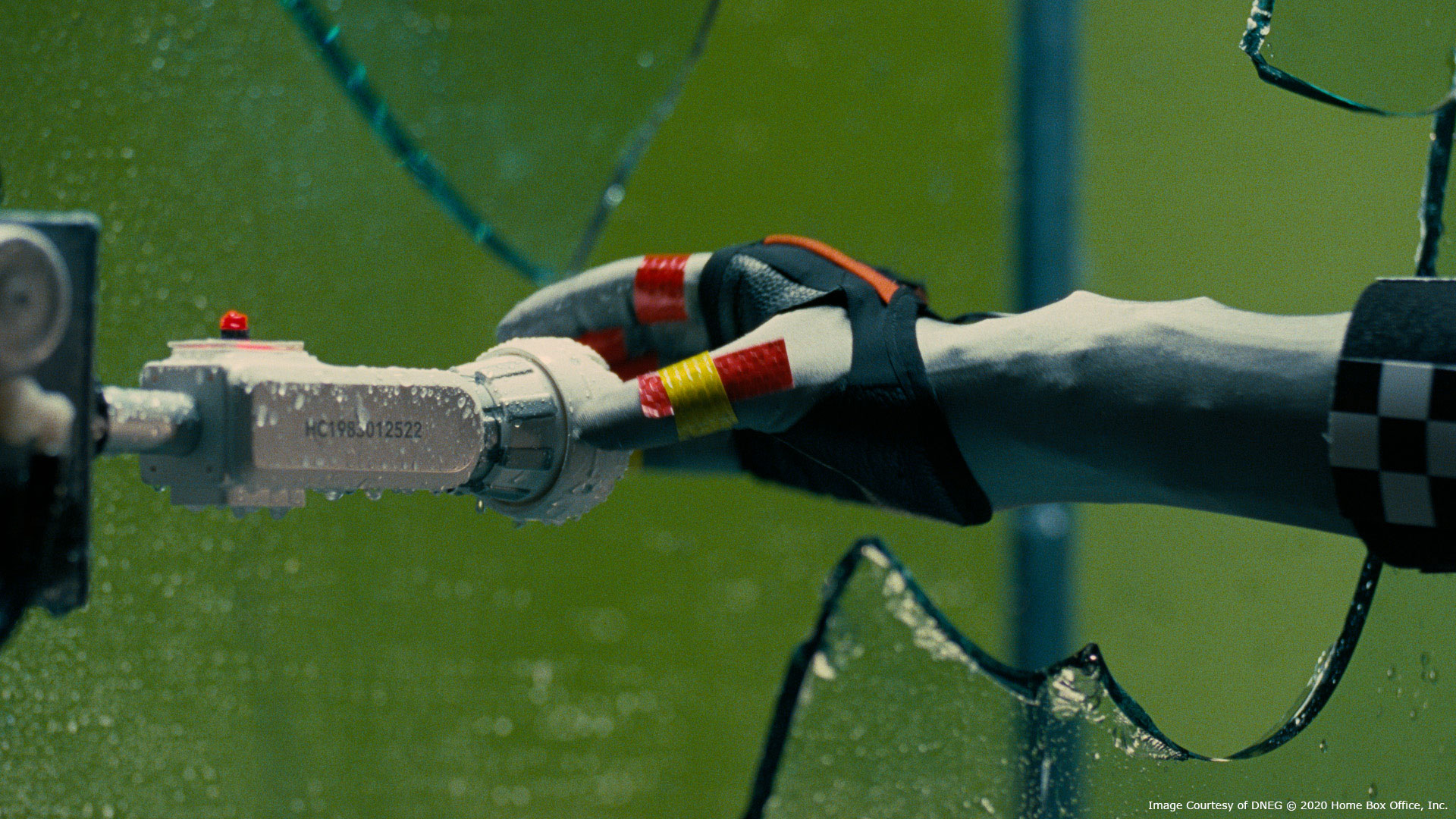

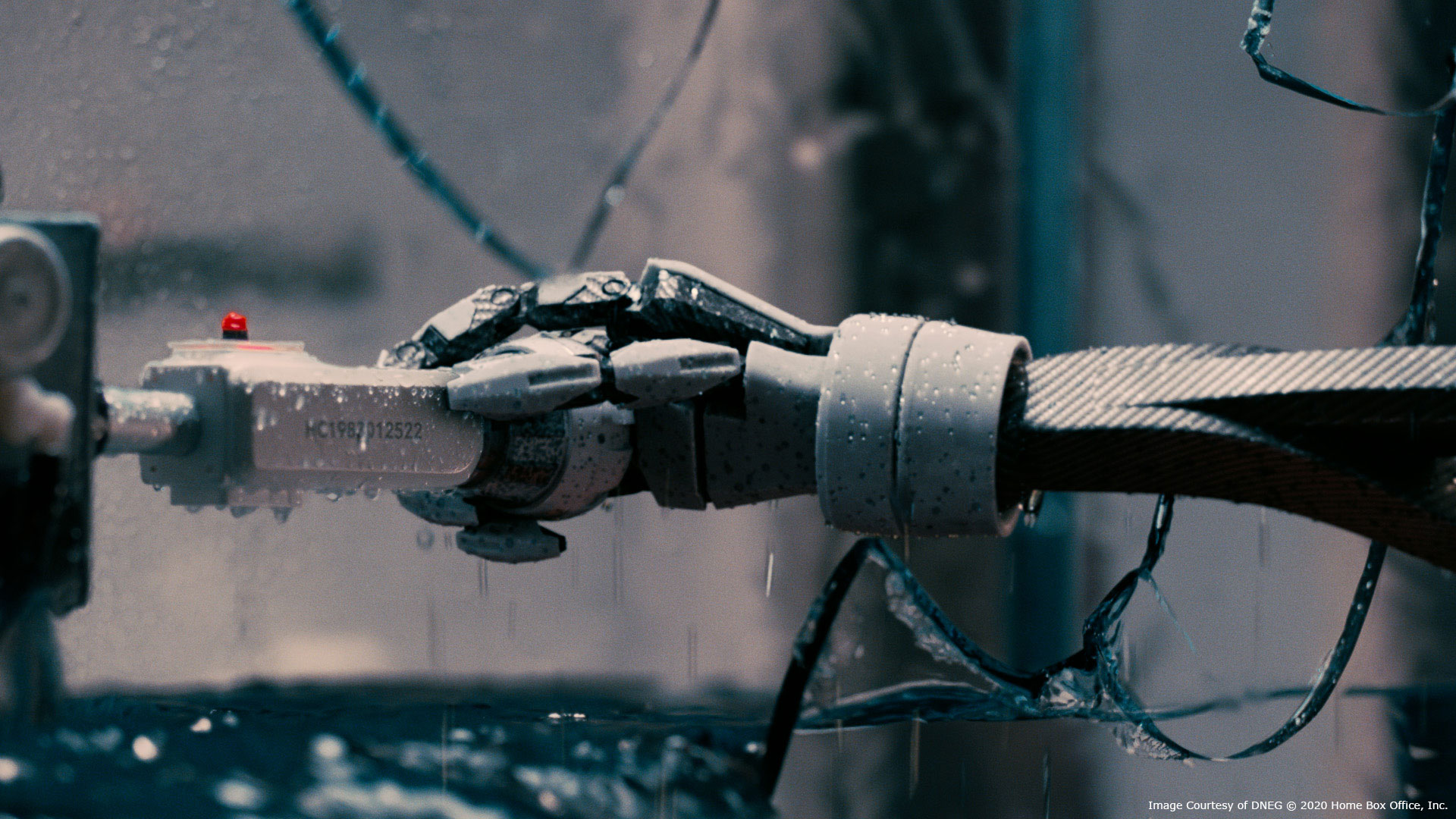

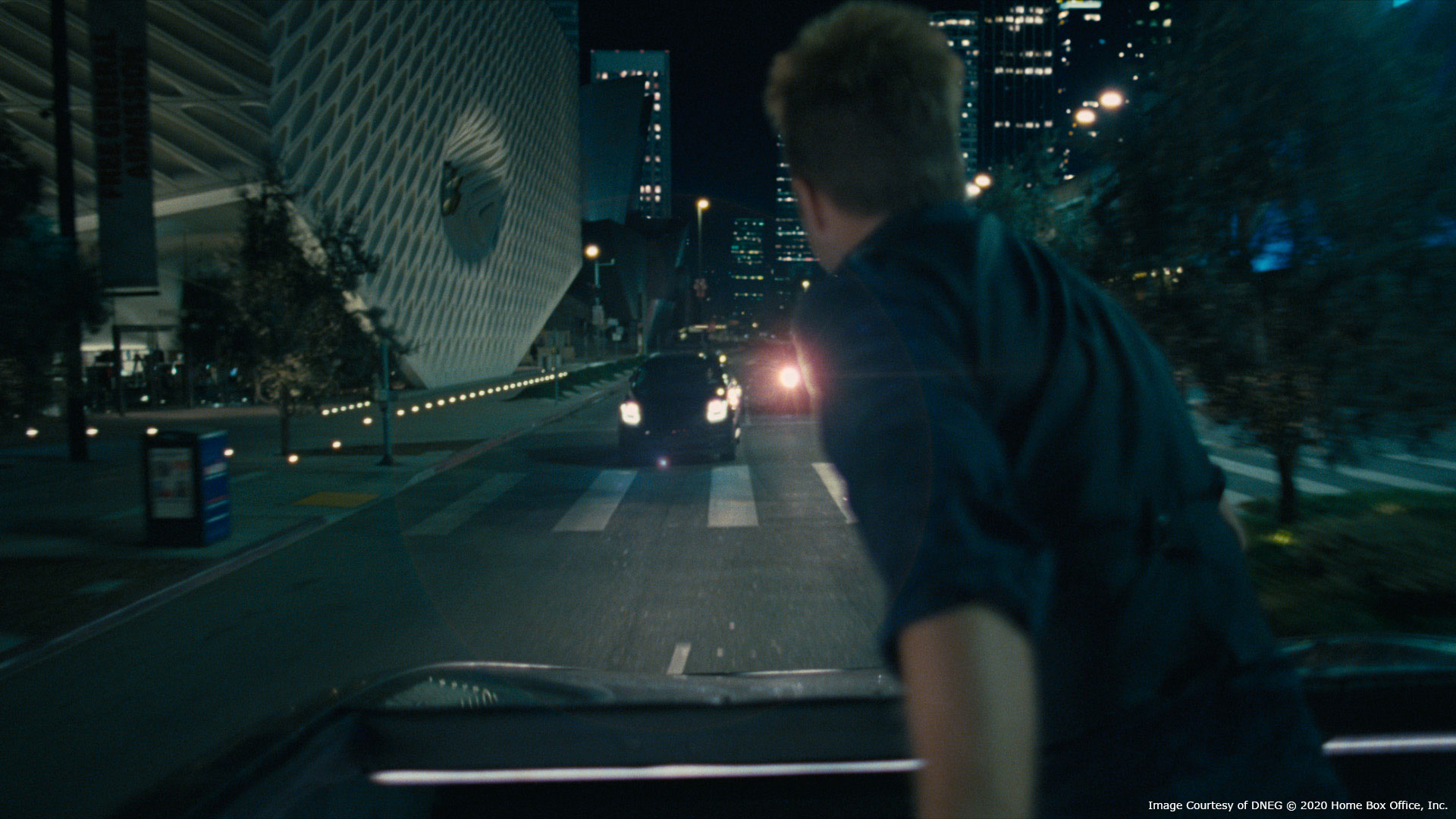

In addition to the performers mentioned above, there were a few instances where a frame was built to simulate a couple of mech panels that the actors could interact with. There’s one moment when Harriet punches through glass, and they had built a hydraulic ram roughly in the shape of her hand that allowed them to get that glass smash in-camera. Also, for the car chase, they had constructed a stunt car with the same wheelbase as the rideshare vehicle. Once they needed more maneuverability they switched over to that car, which was essentially an engine and a roll cage on wheels. We then went through replacing it with a digital version of the rideshare vehicle, being careful to retain the practical wheels and tires as much as possible.

Which robot was the most complicated to create and why?

The mech probably required the most back-and-forth. Its massive, boxy frame created some complications with range of motion which had to be worked out, often by creating more complex mechanical linkages which offset each rotation axis into places where they could fit. Also, the mech’s assembly sequence took time: we not only had to figure out exactly what it would be doing, but also had to implement a bespoke rigging solution for those shots just to execute it.

Can you elaborate on the design and the creation of the Future Private Jet sequences?

We only had a couple of shots with the private jet and they were all long-distance exterior shots, showing it on the ground and, in one case, in flight.

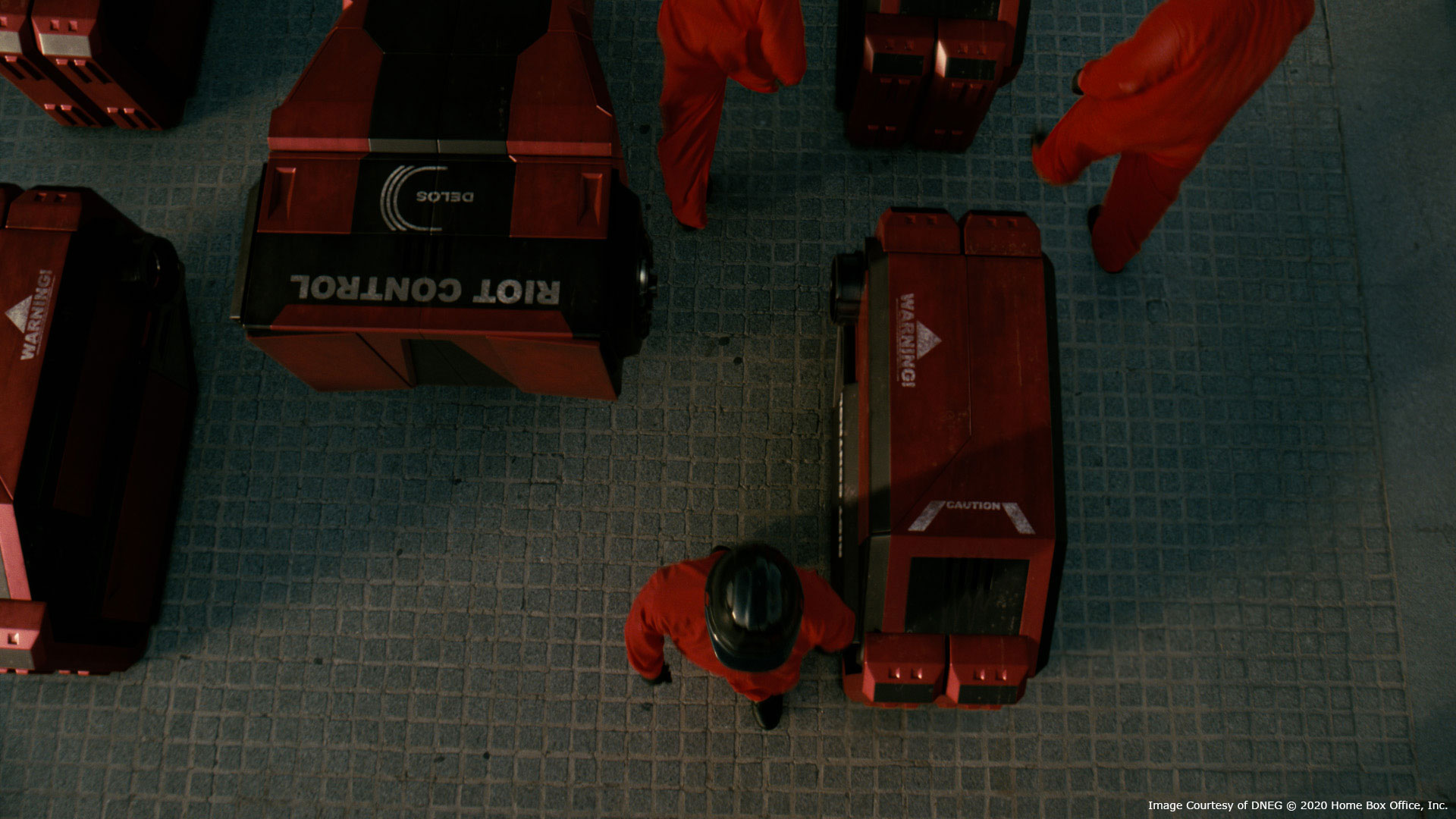

How did you create the various vehicles?

Both the rideshare vehicle (in the car chase) and the black Rezvani were built by production for in-camera work. They had done both LiDAR scans and extensive photography of each. We brought those scans in as reference and our build team re-constructed the vehicles to match. Once they were re-built, they each got rigged and carefully textured to match as closely as possible. It was critical that both of those cars were detailed up with a close eye on matching, as we intercut between the in-camera and digital versions during the sequences. We also had photography of the stunt vehicles they used, which enabled us to ensure our matchmoves were as tight as possible. The stunt vehicles’ wheels and tires matched the practical cars, so we wanted to keep as much of those as possible while replacing the top body shell. For the most part, we were able to do so, which helped with that critical ground contact point quite a bit.

Can you tell us more about the FX work such as explosions and water?

The FX work during this season had quite a range, and once again if there was something shot on location, we would try our best to retain as much of it as possible. When Harriet punches through the glass to steal the HCU, they had a ram actually punch through a glass water tank. We painted out the ram and kept much of the glass and water, and our FX team created a sim that moved and looked so much like the water in the plate that it’s difficult to find the blend. We ended up giving it a bit more energy, and because we were putting Harriet’s hand and arm back in there we needed the water to flow over them. When it came to Harriet getting shot at through the escape sequence, we had debated the possibility of modeling multiple stages of the destruction into the asset and progressing through those during the sequence. However, as we began working on the sequence it became clear that we would need to be flexible with the level of destruction we’d be seeing on the robot throughout the shots. So our FX artists developed a system of tracking bullet hits and the damage they do across the entire sequence. This setup chipped away at the rubber exterior, revealing additional parts beneath and then breaking those away with additional hits. This gave us a lot of flexibility in not only how much damage there is, but where it is happening. Not being locked into a set of prebuilt variants was a relief as the shots evolved and we were able to accommodate clients’ requests.
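As a rough illustration of the idea of tracking hits across a whole sequence instead of switching between prebuilt damage variants, here is a minimal Python sketch. It is not DNEG's setup: the layer names other than the rubber exterior, the `hits_per_layer` threshold, the region name, and every class and function name are hypothetical, chosen only to show how damage at any frame can be derived from an accumulated hit list.

```python
from dataclasses import dataclass, field

# Hypothetical layer stack, outermost first. Only the rubber exterior is
# mentioned in the interview; the other names are placeholders.
LAYERS = ("rubber_exterior", "inner_parts", "core_frame")


@dataclass
class DamageTracker:
    """Accumulates bullet hits per region over a whole sequence."""
    hits_per_layer: int = 2                   # assumed hits needed to break one layer
    hits: list = field(default_factory=list)  # (sequence_frame, region) pairs

    def add_hit(self, frame: int, region: str) -> None:
        self.hits.append((frame, region))

    def exposed_layer(self, frame: int, region: str) -> str:
        """Return the outermost layer still present on `region` at `frame`."""
        count = sum(1 for f, r in self.hits if r == region and f <= frame)
        broken = min(count // self.hits_per_layer, len(LAYERS) - 1)
        return LAYERS[broken]


tracker = DamageTracker()
for hit_frame in (10, 20, 30, 40):
    tracker.add_hit(hit_frame, "left_arm")  # "left_arm" is a made-up region name

print(tracker.exposed_layer(5, "left_arm"))   # before any hit lands
print(tracker.exposed_layer(20, "left_arm"))  # after two hits
print(tracker.exposed_layer(40, "left_arm"))  # after four hits
```

Because the state is computed from the hit list, moving, adding, or removing a hit changes the damage in every later shot without rebuilding an asset variant, which is the kind of flexibility described above.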

We had two large explosion sequences this season. One occurred during the car chase, where Caleb launches a drone grenade into an SUV. Our FX team hit the look of the fire and smoke pretty early on, but it was the dynamics of the car itself that we had the most back-and-forth on. The asset wasn’t built to be pushed through the FX pipeline, because we had initially thought the car would be staying in one piece and flipping over. It became clear, however, that the shot would be much better if the car heaved up and sheared in half. It took a few iterations but the team did a great job, in tandem with the animators, of getting that just right. The animators got the initial timing of the car lifting up and FX took over to have it pull apart and tumble down the road.

The other explosion was much more of a challenge. While Hale is driving away in her futuristic Rezvani, the car blows up in a three-shot sequence, sailing past the camera. Production shot a background plate, the Rezvani driving by, and a stunt car getting blown up in a parking lot from similar angles. The ask was to merge all of the plates, retain all of the fires, wheels, and tires, replace the body of the car digitally, and augment with FX fire. As much as this was a massive compositing undertaking, the FX fire had to sit right next to practical fire, matching its motion and look. Once our artists got the look of the fire, we realized that was the easier part. The distance the car travels made the simulation volume fairly large, the proximity to the camera meant the detail had to be small, and the rotation and motion of the car needed a lot of substeps to remain stable. With all of that we were still able to iterate and our team got it into a great place where it all sits together really well.
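To give a sense of why those three constraints compound, here is a back-of-the-envelope Python sketch. All numbers are invented for illustration and are not from the production, and the substep estimate uses a generic CFL-style rule of thumb (the fastest motion should cross at most about one voxel per substep), which is an assumption and not a description of the solver settings DNEG used.

```python
import math


def voxel_count(size_m, voxel_m):
    """Number of voxels in a dense grid covering a box of `size_m` metres."""
    nx, ny, nz = (math.ceil(axis / voxel_m) for axis in size_m)
    return nx * ny * nz


def substeps_needed(max_speed_mps, fps, voxel_m, cfl=1.0):
    """Substeps per frame so motion covers at most `cfl` voxels per substep."""
    return max(1, math.ceil(max_speed_mps / (fps * cfl * voxel_m)))


# Hypothetical values: a 32 m x 8 m x 8 m volume along the car's path,
# 6.25 cm voxels for close-to-camera detail, debris moving at 24 m/s, 24 fps.
volume = (32.0, 8.0, 8.0)
voxel = 0.0625

print(voxel_count(volume, voxel))       # total dense voxels
print(substeps_needed(24.0, 24, voxel)) # substeps per frame

# Halving the voxel size multiplies the voxel count by 8 and doubles the substeps.
print(voxel_count(volume, voxel / 2) // voxel_count(volume, voxel))
print(substeps_needed(24.0, 24, voxel / 2))
```

In this simplified model the cost per frame scales roughly with voxel count times substeps, so finer detail over a long travel distance gets expensive quickly.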

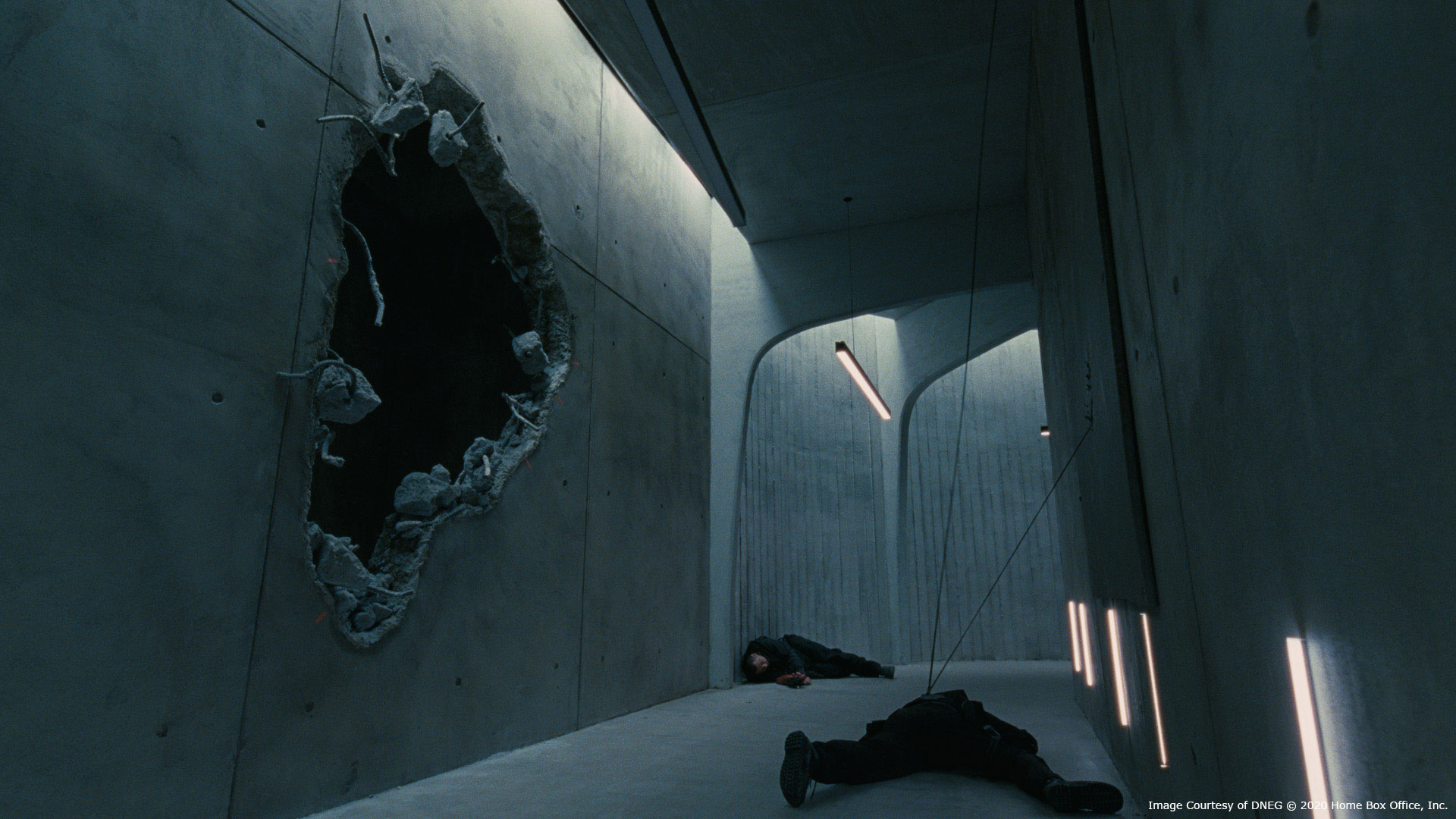

I’d be remiss if I didn’t mention all the work put into the mech smashing through the walls in the hallway as well. Production created a hallway with a hole in the wall and we created all of the falling, crumbling concrete with all the interaction on the mech. That sequence turned out to be more challenging than we had initially thought. Once again, just the detail of the concrete pieces created enough instability in the system that we spent a long time tracking down all the pieces that might get stuck and vibrate in place as the mech steps on and through all of that debris. Every time we started to look for ways to short-cut or ease the simulation in some way, you could just see that loss of sim detail, so those shots came down to a lot of hard work.

Which sequence or shot was the most challenging?

I think everyone involved with the flipping Rezvani explosion would agree that those shots gave us the most to worry about. We knew from the beginning that it was going to take a lot of work to get all of those plates to work together while holding on to everything that production got in-camera. It seems like a straightforward set of shots, but so many pieces of those moved through so many different disciplines to get it there. It was one of those sequences where you work it over time and time again, then you have to back off a bit, strip it all down and work it back up to really find that magic sauce.

Is there anything specific that gave you some really short nights?

The Rezvani explosion and mech rampage sequence were the things that gave us some shorter nights at first, but as the COVID precautions ramped up and we had to transition to work-from-home, that was just a different beast. The tech and pipeline teams did a phenomenal job of getting everyone up to speed and it was impressive how productive the entire team was within just a few days.

What is your favorite shot or sequence?

I think the Harriet escape sequence has a lot of great work in it. The robot looks great throughout and I really appreciate all the work that went into getting it there.

What is your best memory on this show?

By far my best memory on the show is the team I got to work with. The whole DNEG LA crew was a fantastic group of very talented artists and producers who worked exceptionally well together and helped maintain a great culture. I also can’t say enough about the supervisors and artists we worked with in the Vancouver and India offices – it was a great experience.

What’s the VFX shot count?

We had around 200 shots throughout the season.

What was the size of your team?

The majority of work came through the LA office with our team of 25-30 (compositing, lighting and FX) with most of the animation being handled by our team in Vancouver. A team in the Chennai office handled much of the car chase and some of the George shots. World-wide we had nearly 400 artists work on it once you roll in all of our build, roto, and prep.

What are the four movies that gave you the passion for cinema?

Both JURASSIC PARK and TOY STORY showed me the blend of technology and storytelling that would lead me to my career. I’ve always enjoyed the ridiculousness of SINGIN’ IN THE RAIN and think it’s a good reminder of what you can get away with. And surely it’s a little cliché for a VFX artist to say, but I can watch 2001: A SPACE ODYSSEY at any time; I love the look, the pacing, the precision and the sound mix.

A big thanks for your time.

WANT TO KNOW MORE?

DNEG: Dedicated page about WESTWORLD – Season 3 on DNEG website.

CREDITS: Westworld / HBO

© Vincent Frei – The Art of VFX – 2020