Erik Winquist has nearly 20 years of experience in visual effects, 15 of them at Weta Digital. He has worked on many films, including THE LORD OF THE RINGS: THE RETURN OF THE KING, KING KONG, AVATAR, THE HOBBIT: AN UNEXPECTED JOURNEY and, of course, the APES trilogy.

How did it feel to be back in the APES universe?

This was my third visit to Caesar’s world, having been a VFX Supervisor at Weta on both RISE and DAWN. The PLANET OF THE APES franchise has a long, cherished history, but this new trilogy in particular has struck a chord with millions of moviegoers, and it’s been pretty wonderful to be a part of bringing these films to the screen. A few years back, I discovered that the special effects make-up legend John Chambers, who pioneered the ape prosthetics for the original films of the ’60s and ’70s, was my Dad’s stepmother’s brother’s brother-in-law (try and plot that family tree!). I never met him, but still, it’s a pretty neat thread between the SFX/VFX of the past and present films.

How did you organize the work at Weta with VFX Supervisor Dan Lemmon and your team?

Internally, we split the show into four teams to tackle the mountain of work we had to get through. Anders Langlands supervised the opening forest battle and the hidden fortress sequences with the waterfall. Luke Millar’s team took on all of the travelling sequences, covering the journey of Caesar and company from the coast through the mountains, their meeting with Bad Ape and their eventual arrival above the prison camp, as well as the final sequence by the lake. Mark Gee supervised the first half of the prison camp sequences: the apes building the wall, the discovery of the tunnels, the powerful “apes together strong” scene in the rain, and Caesar’s meetings with the Colonel. I was responsible for the rest: the apes planning their escape, Caesar’s final confrontation with the Colonel and the eponymous war between the human armies. And the avalanche. As before, all of this was orchestrated under the overall supervision of Dan Lemmon, who was there from the outset with Matt Reeves for the whole snowy, rainy, miserable shoot! While each of our teams operated as an independent unit, we’d have daily catch-ups with Dan to talk about in-progress shots, discuss Matt’s latest feedback and stay abreast of any updates to character assets or environments.

How did you enhance the assets for Caesar, Maurice, Luca and Rocket?

In simple terms, everyone’s gotten a little older and a little wiser. As Caesar’s story has progressed from Rise through to War, we’ve subtly aged everyone a bit and improved their hair grooms and the appearance of their skin. But under the surface, a lot has evolved in the pipeline over the years. Our animators now have much more responsive puppets from our Koru system, which allow them to review and scrub scenes containing many apes with a high degree of fidelity. This is the first of the PLANET OF THE APES films to be rendered entirely with Manuka, our physically-based renderer. The end result of that transition is just superb realism in the way the characters are lit. Everything looks so tactile and integrated into Michael Seresin’s breathtaking cinematography. Everything in the pipeline is about taking the best aspects of the previous films and making the next leap.

Bad Ape is a new character. Can you tell us in detail about his creation?

Bad Ape was a great deal of fun to work on, thanks in large part to where Steve Zahn took that character. There is an instantly believable and endearing quality about him, which stems from his being “cast” from reference photography of a real chimpanzee instead of being “designed” as a character. Once again, the filmmakers worked with the Aaron Sims Company on concepts for who this goofy little old hermit wanted to be. The oversized parka, snow boots and woolly hat instantly tell you he isn’t like any of the apes you’ve met previously. Bad Ape has big, expressive eyes which take great advantage of our latest eye model, and his wardrobe presented many challenges due to the wool knit upper portions of his ski vest and beanie and the furry lining of his jacket.

As Bad Ape provides some levity in a relatively grim story, he has a number of great character moments in the film. One in particular features dialog spoken while chomping on a cracker, which required FX simulations for the crumbs flying out of his mouth. It’s a subtle touch, but really helps to elevate the scene. He is later shown getting covered in falling dirt, dropping into puddles and being blasted with snow, each of which posed unique simulation challenges for what ultimately are brief gags that are easily taken for granted in live action filmmaking.

The fur and groom are really impressive. How did you handle this aspect?

The foundation is our in-house hair creation and grooming tool, Wig. This allows our modellers to intuitively grow and sculpt a groom tailored to each individual character. Using it, they’re able to control the placement and geometric properties of all of an ape’s hair, from the finest vellus hair (“peach fuzz”) and eyelashes of Bad Ape to the long, dread-locky shag of Maurice. The tools allow us to dial in just the right amount of clumping from region to region, which is an important part of the groom and also a key driver of the wet look of fur. And there was plenty of mist and rain to contend with in this film.

Another proprietary plug-in called Figaro handles the fur simulation side of things for our creature department, to add dynamics from the ape’s movement or other forces like wind. This can also be coupled with FX simulations for subtle impacts of raindrops falling, dirt cascading down or a bucket of freezing water being poured over Caesar.

As part of the groom, we also add in the appropriate amount of characteristic debris in the hair. Depending on the situation from scene to scene, this would include things like little bits of leaves and grass, or fine water droplets for rainy or waterfall-laden scenes. In War, it was also used for accumulated snow in the fur when the apes had been outside in the many snowy scenes in the film. That static snow debris was also occasionally augmented with FX-driven snow build-up when the apes were rolling around on the ground.

On the rendering side of things, we’ve spent ample R&D effort over the years improving our fibre shading. This has resulted in a physically-based model in which the pigment is adjusted by varying virtual amounts of eumelanin and pheomelanin, as happens in real hair. There’s far more to it than that, of course, and that work has been complemented by a number of efficiency improvements, which allow us to pack more rendering bang for our buck into our overnight jobs.
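
To make the eumelanin/pheomelanin idea concrete, here is a minimal sketch, not Weta’s fibre shader. It assumes linear pigment mixing and the per-channel absorption coefficients published with PBRT’s hair model; the function names and the concentrations used are hypothetical. The two melanin amounts are mixed into an RGB absorption coefficient, and Beer-Lambert attenuation turns that into the colour light picks up while travelling through a fibre.

```python
import math

# Per-channel (R, G, B) absorption per unit concentration, as published with
# PBRT's hair model. Assumed here for illustration only.
EUMELANIN_SIGMA_A = (0.419, 0.697, 1.37)
PHEOMELANIN_SIGMA_A = (0.187, 0.4, 1.05)


def sigma_a_from_melanin(eumelanin, pheomelanin):
    """Mix the two pigments linearly into an RGB absorption coefficient."""
    return tuple(eumelanin * e + pheomelanin * p
                 for e, p in zip(EUMELANIN_SIGMA_A, PHEOMELANIN_SIGMA_A))


def transmittance(sigma_a, distance):
    """Beer-Lambert: fraction of light surviving `distance` inside the fibre."""
    return tuple(math.exp(-s * distance) for s in sigma_a)


# Hypothetical concentrations: dark brown fur versus a more ginger tone.
# A path length of 2.0 corresponds to crossing a fibre of unit radius head-on.
dark = transmittance(sigma_a_from_melanin(1.3, 0.2), 2.0)
ginger = transmittance(sigma_a_from_melanin(0.3, 0.9), 2.0)
print("dark:", [round(v, 3) for v in dark])
print("ginger:", [round(v, 3) for v in ginger])
```

Raising eumelanin pushes the fur towards black-brown, while a higher share of pheomelanin lets more red through; in both pigments blue is absorbed most strongly, so light that passes through the fibre comes out warm.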

Post-render, our compositors have a very mature in-house toolset for applying lens effects such as depth-of-field to renders, and they have become very adept at working with furry creatures. That toolset came in very handy for the incredibly shallow depth-of-field we were working with on this show, since it was shot on the large-format ALEXA 65 camera system.
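
The link between a large-format camera and shallow depth-of-field can be shown with the thin-lens circle-of-confusion formula. This is a minimal sketch under stated assumptions: approximate sensor widths for a Super 35-like and a 65 mm-like format, illustrative lens settings and distances, and identical framing on both, which means the larger format needs a proportionally longer lens. It is not Weta’s compositing toolset; it only estimates how large a defocus kernel a compositor would have to match.

```python
def circle_of_confusion_mm(focal_mm, f_number, focus_mm, subject_mm):
    """Thin-lens blur-circle diameter (mm, on the sensor) of a point at
    `subject_mm` when the lens is focused at `focus_mm`."""
    aperture_mm = focal_mm / f_number  # entrance pupil diameter
    return (aperture_mm * abs(subject_mm - focus_mm) / subject_mm
            * focal_mm / (focus_mm - focal_mm))


def defocus_px(focal_mm, f_number, focus_mm, subject_mm,
               sensor_width_mm, image_width_px):
    """The same blur expressed as a kernel diameter in image pixels."""
    coc = circle_of_confusion_mm(focal_mm, f_number, focus_mm, subject_mm)
    return coc / sensor_width_mm * image_width_px


# Same framing on two formats: the focal length scales with sensor width.
# Sensor widths are approximate, assumed values for illustration.
S35_WIDTH, LF_WIDTH = 24.9, 54.12
s35 = defocus_px(35.0, 2.8, 2000.0, 4000.0, S35_WIDTH, 4096)
lf = defocus_px(35.0 * LF_WIDTH / S35_WIDTH, 2.8, 2000.0, 4000.0, LF_WIDTH, 4096)
print(f"Super 35-like: {s35:.1f} px, 65 mm-like: {lf:.1f} px")
```

With the same f-stop and distances, the 65 mm-like frame ends up with more than twice the blur relative to frame width, which is the kind of shallow focus the compositors had to reproduce on CG renders.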

Can you tell us in detail about Caesar’s eyes?

Even more so than hair, producing unquestionably realistic eyes has been a major focus for us for years, given the sort of creature and character-driven work we are known for. They are the one thing you really have to get right for an audience to be fully swept away by a digital character. We’ve pored over medical research on many different aspects of how human and animal eyes function and have an eye model which very closely replicates the structure of the real thing. Getting the geometry correct is critical, as so much of how we perceive the eyeball is tied to the refraction and scattering of light through the cornea and around the stroma of the iris, how caustics are focused as the angle of incoming light changes and even how that light reflects back off the retina. A lot of attention has been paid to the surface of the cornea, its level of wetness and reflectivity, and how the thin film of moisture gathers along the edge of the eyelids. We’ve studied how the fibres of the iris contract and expand to dilate the pupil, and how tears well up and pour over the eyelid. (That last bit has always been important when Andy Serkis is inhabiting your character.)
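
The refraction that makes eye geometry so critical follows Snell’s law at each interface. This minimal sketch is not Weta’s eye model: it assumes flat interfaces instead of a curved cornea and uses approximate textbook refractive indices (about 1.376 for the cornea and 1.336 for the aqueous humour); all names are illustrative.

```python
import math

# Approximate refractive indices (textbook values, assumed for illustration).
N_AIR, N_CORNEA, N_AQUEOUS = 1.000, 1.376, 1.336


def refract(incident, normal, n1, n2):
    """Vector form of Snell's law.

    `incident` is the unit direction of travel; `normal` is the unit surface
    normal on the side the ray arrives from. Returns the unit refracted
    direction, or None on total internal reflection."""
    eta = n1 / n2
    cos_i = -sum(i * n for i, n in zip(incident, normal))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # total internal reflection
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * i + (eta * cos_i - cos_t) * n
                 for i, n in zip(incident, normal))


# A ray hitting the front of the cornea at 45 degrees, then continuing into
# the aqueous humour (a flat-interface simplification of the curved eye).
s = math.sqrt(0.5)
up = (0.0, 0.0, 1.0)
into_cornea = refract((s, 0.0, -s), up, N_AIR, N_CORNEA)
into_aqueous = refract(into_cornea, up, N_CORNEA, N_AQUEOUS)
print(into_cornea, into_aqueous)
```

In a real eye the cornea is curved, so the surface normal changes from point to point, which is part of what bends and concentrates light into caustics on the iris.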

But the other crucially important thing that sells the illusion is how those eyes move; how they dart as they change their gaze, the little micro adjustments as the character is following something, and also how the tissue around the eyeball follows along as the muscles drive the eyes. Their animation is the most important part of the whole recipe. We as audience members and humans are incredibly adept at recognising when the motion of a character’s eyes isn’t natural.

How did you handle the rigging and animation of the main apes?

The apes are built to mimic the anatomy of real chimps, gorillas and orangutans in that they have a skeletal system wrapped in dynamic muscle shapes with an outer skin which rides atop the muscles. Those muscles are contracted or relaxed based on movement of the joints and they can also be isometrically triggered by the animators if needed.
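
As a rough illustration of joint-driven muscles with an animator override, here is a sketch of a single hypothetical cylinder-like muscle spanning an elbow. It assumes volume preservation (the muscle bulges as it shortens) and a simple linear isometric gain; the geometry, names and gain value are made up for illustration and are not Weta’s muscle system.

```python
import math

# Hypothetical 2D arm: elbow at the origin, upper arm along -x.
ORIGIN = (-0.25, 0.02)   # muscle origin near the shoulder (metres)
INSERTION_OFFSET = 0.05  # insertion distance along the forearm (metres)


def insertion(elbow_flex_deg):
    """Insertion point on the forearm; 0 degrees is a straight arm (+x)."""
    a = math.radians(elbow_flex_deg)
    return (INSERTION_OFFSET * math.cos(a), INSERTION_OFFSET * math.sin(a))


def muscle_shape(elbow_flex_deg, rest_radius=0.02, isometric=0.0, gain=0.15):
    """Return (length, radius) of a cylinder-like muscle.

    Joint-driven part: volume pi * r^2 * L is preserved, so r = r0 * sqrt(L0 / L)
    and the muscle bulges as it shortens. Animator-driven part: an isometric
    activation in [0, 1] adds extra bulge without changing length."""
    rest_length = math.dist(ORIGIN, insertion(0.0))
    length = math.dist(ORIGIN, insertion(elbow_flex_deg))
    radius = rest_radius * math.sqrt(rest_length / length)
    activation = max(0.0, min(1.0, isometric))
    return length, radius * (1.0 + gain * activation)


straight = muscle_shape(0.0)
flexed = muscle_shape(90.0)
flexed_and_tensed = muscle_shape(90.0, isometric=1.0)
print(straight, flexed, flexed_and_tensed)
```

Keeping the joint-driven and animator-driven terms separate mirrors the distinction in the text: flexing the elbow changes the shape automatically, while the isometric control lets an animator tense the muscle when the pose alone would not.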

On a project like the PLANET OF THE APES films, the performance of the characters is typically driven by the actors on set, and that raw motion data is first processed by our motion edit department. The actor’s facial camera feed is also processed and run through our facial solver tools, which analyse the marker dots on their face and correlate those movements to the underlying muscle groups which drive expression changes on the face.
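
Correlating marker movements to muscle groups can be sketched as a linear least-squares fit. This assumes a hypothetical linear face model in which each muscle group moves each marker coordinate by a fixed amount per unit of activation. Weta’s facial solver is proprietary and this is not it; treat it only as an illustration of the general principle.

```python
def solve_linear(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def solve_activations(basis, displacement):
    """Least-squares muscle activations w minimising |basis @ w - displacement|^2.

    basis[i][j] is how far marker coordinate i moves per unit activation of
    muscle group j (a hypothetical linear face model). Uses the normal
    equations (B^T B) w = B^T d."""
    k = len(basis[0])
    btb = [[sum(row[p] * row[q] for row in basis) for q in range(k)] for p in range(k)]
    btd = [sum(row[p] * d for row, d in zip(basis, displacement)) for p in range(k)]
    return solve_linear(btb, btd)


# Four marker coordinates, two hypothetical muscle groups.
BASIS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 0.0]]
measured = [0.3, 0.7, 1.0, 0.6]
print(solve_activations(BASIS, measured))
```

With more marker coordinates than muscle groups the system is overdetermined, so the normal equations pick the activations that best explain all markers at once; a fuller solver would likely add constraints and nonlinear deformation, which this sketch ignores.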

But that’s often just the start of the work. The next step is for animators and facial animators to take that motion and see how it emotionally registers compared with the actor’s original performance. Apes may share nearly all of our DNA, but they have some significant anatomical differences which make a 1:1 mapping of actor to ape impossible. There may be moments when actors don’t quite pull off a movement that looks entirely natural for an ape, or when the facial performance doesn’t come across with the same emotional impact the actor conveyed. These are things the animation team needs to evaluate and adjust until the actor’s and director’s intent is imbued into the digital character. This is a time-intensive process to get right, and in the case of War, it was an aspect of our reviews with Matt to which we devoted a lot of time.

Can you tell us more about the lighting challenge and the use of Manuka?

Working on all of these films has been wonderful, but War in particular was quite a treat. The film is filled with long, lingering close-ups of our characters that really allow the audience to get lost in their eyes. It afforded us the opportunity to work in a myriad of different lighting environments, both practical and virtual, and so often featured atmospherics and natural phenomena like rain and snow. Of course, the very things that make for fascinating shots to watch as an audience member can be anywhere from mildly to completely terrifying for the visual effects team whose work has to hold up to that kind of scrutiny.

As I mentioned earlier, unlike the previous two instalments, which were rendered entirely or mostly with RenderMan, War was rendered entirely using our in-house path tracer, Manuka. The simulated light transport (effectively bouncing photons around the scene until they wind up in the camera) gives us amazingly life-like images with much of the nuance of Michael Seresin’s live action footage. Coupled with all of the other advancements at the studio that came before this project, it’s allowed us to create some truly compelling images and memorable characters.

Besides very physically-accurate images, another of Manuka’s strengths is its ability to handle very large scenes with aplomb. This film has many scenes featuring large crowds of furry apes, and where we previously would have had to resort to low-resolution assets with fake fur or severely decimated grooms, we can now render dozens, if not hundreds, of our high-res assets in a single pass and get a much better result for it.

Can you tell us more about the crowd creation and animation?

As with DAWN OF THE PLANET OF THE APES, this project had many shots featuring huge crowds of apes. Even though we could use our Massive software to fill in the background ranks with generic library motion, we still had to populate shots with dozens of hand-placed captured ape performances, as Matt often had specific things he wanted them to be doing, or rather, not be doing.

Like we’d done on the previous two instalments, during principal photography we set aside a few days in the mocap volume for capturing generic background performances and vignettes with the ape performers. These crowd performances, sometimes captured against specific terrain for use in a single scene, were augmented with existing captured library motion from previous films, or were captured back in Wellington with our local mocap performers.

The task of filling out empty plates with an entire ape community typically fell to our motion editors, who took quite a few of those heavy crowd shots through to final animation.

Massive was also used for generating the advancing Northern Army soldiers during the film’s climax.

The little girl Nova has a lot of interactions with Maurice. How did you manage this aspect?

With a lot of sweat and tears! Those are definitely daunting shots. One thing we had on our side was an amazing, generous performer in Karin Konoval, who played Maurice. She was physically there in the takes, often with padding strapped to her to fill out her frame closer to Maurice’s bulk. Amiah Miller was there for Karin to hold on to and play off, which meant all of the important stuff was there for us. What was left was a lot of creative shot execution: figuring out where we could play Nova behind the edge of Maurice, or where we might be able to bury a hand in his hair. On a few occasions, we needed to give Nova some new limbs to make the contact work or to reduce the roto and paint work. Luckily, we had a pretty great digital double to use for her.

Can you tell us in detail about your new in-house tool, Totara?

Totara came about from the desire to automate the creation of natural-looking, believable landscapes for digital environment work. It’s a toolset that allows us to virtually ‘seed’ terrain based on rules and let those seeds ‘grow’, mimicking nature in the way that the various species compete for sunlight, grow in certain ways on certain kinds of terrain, and so on. The results can be seen surrounding the Colonel’s prison camp environment in the foothills of the Eastern Sierra Mountains. The pine trees have a remarkably life-like distribution. There are trees where one side is mostly dead limbs without needles because it’s up against neighbouring trees. Closer to the ground, there are smaller shrubs scattered around. The tool is still in the early stages of its life and is being continually improved upon, but it has already paid great dividends visually.
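
The seed-and-compete idea can be illustrated with a toy simulation. This is a hypothetical sketch, not Totara: plants are discs on a flat, wrapped plot, larger neighbours shade smaller ones, shaded-out plants die, and mature plants scatter seeds nearby. Every parameter is an arbitrary assumption, and there are no terrain rules.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Plant:
    x: float
    y: float
    radius: float = 0.1  # canopy radius, arbitrary units
    alive: bool = True


def light_share(plant, plants, shade=0.9):
    """Fraction of sunlight reaching `plant`. Every larger neighbour whose
    canopy overlaps it blocks up to `shade` of the remaining light."""
    light = 1.0
    for other in plants:
        if other is plant or not other.alive or other.radius <= plant.radius:
            continue
        d = math.hypot(plant.x - other.x, plant.y - other.y)
        overlap = (plant.radius + other.radius - d) / (2.0 * plant.radius)
        light *= 1.0 - shade * min(max(overlap, 0.0), 1.0)
    return light


def step(plants, rng, size=50.0, growth=0.3, max_radius=4.0,
         death_light=0.2, seed_chance=0.3, seed_spread=3.0):
    """One season: compete for light, grow or die, and scatter seeds."""
    lights = [light_share(p, plants) for p in plants]  # measure before changing anything
    seedlings = []
    for plant, light in zip(plants, lights):
        if light < death_light:
            plant.alive = False  # shaded out by its neighbours
            continue
        plant.radius = min(max_radius, plant.radius + growth * light)
        if plant.radius > 1.0 and rng.random() < seed_chance:
            # Mature plants drop a seed just beyond their own canopy.
            angle = rng.uniform(0.0, 2.0 * math.pi)
            dist = rng.uniform(plant.radius, plant.radius + seed_spread)
            seedlings.append(Plant((plant.x + dist * math.cos(angle)) % size,
                                   (plant.y + dist * math.sin(angle)) % size))
    return [p for p in plants if p.alive] + seedlings


rng = random.Random(42)
forest = [Plant(rng.uniform(0, 50), rng.uniform(0, 50)) for _ in range(20)]
for _ in range(15):
    forest = step(forest, rng)
print(len(forest), "plants after 15 seasons")
```

Even this crude rule kills seedlings that land under large canopies and lets those in open ground grow, which is the kind of natural spacing that rule-based growth aims for.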

How did you create the Colonel’s base and its environment?

The prison camp environment was a long journey from start to finish. The first step was to finalise the design. The Production Designer, James Chinlund, came up with a really cool place for the film’s third act to take place in, but by the time we started on post-production, there were a few finer points within the camp that Matt still wanted to explore. We spent a few weeks iterating on some concepts and going through them with Matt and James, and then set to work building a fully-CG camp environment surrounded by a massive, snowy, tree-covered mountain range. The digital camp was used for wide shots from up in the hills, where the apes first discover the location. The live action set, which was built in Vancouver, represented the first storey of most of the “prison yard” areas of the camp and was extended using the CG environment. Looking east from the camp, a vast plain sloped gently downhill towards the Alabama Hills and a distant mountain range. The CG camp environment stretched outwards a couple of hundred metres or so and then handed off to a matte painting beyond.

The third act features a lot of action and destruction. Can you tell us more about the creation of these FX elements?

No war is complete without lots of explosions, and Johnathan Nixon and his team in our FX department created a broad armoury for us to populate shots with. What you see in the battle shots at the end is typically a mixture of generic smoke plume elements simulated using our Synapse fluid solver, library sim elements and practical filmed elements, both from this production and from our existing library. In addition to battlefield dressing, the FX team also handled specific beats such as Apache helicopters being shot down and crashing violently into the snow, incoming volleys from the Northern Army exploding inside the camp, and an enormous display of pyrotechnics as Caesar blows up a fuel tanker and sets off a chain reaction in the weapons depot. Pretty much all of the fluid sims (smoke, fire, liquid) in the sequence were handled by Synapse. We used Houdini’s dynamics for much of the destruction caused by all of the violence, such as the ape wall and the concrete structures along the sides of the camp being blown apart.

The enormous explosion which ends the battle was in development for many weeks. We started by looking at a number of reference clips with Matt, who picked the biggest of them all. That reference clip, which I believe was of a weapons depot being destroyed, had a very distinctive look to it. Besides being one of the most gargantuan conventional explosions I’ve ever seen footage of, it also had a very interesting shower of sparks and debris which almost looked like smeared orange clouds. That aspect of the reference inspired the falling embers following the explosion. Ayako Kuroda set up the simulation for that explosion, and we iterated quite a few times, getting bigger each time. The final simulation ran on the render wall for several days.

We also took inspiration from the visible shockwave that is often seen expanding rapidly outwards from high explosive events. Both a faint vapour bubble and the evidence of that expansion, in the form of snow getting blasted off the surrounding trees, were put into the shot to amp up the magnitude of the spectacle.

A massive avalanche arrives at the end. Can you tell us in detail about its creation and animation?

The avalanche scene was also in development for months with our FX and animation teams. In basic terms, we concocted a system where a curve could be animated to art direct the path of the leading edge of the avalanche down the hill. From that leading edge, we drove fluid sims for the heavy snow volumes billowing up, and then added a particle component which had instanced chunks of snow and was advected by the volume. Moments later, when Caesar is nearly swallowed up by the oncoming avalanche, several simulation passes were run to produce the wall of snow: the rushing main body of the avalanche tearing through the camp in the background, several volume simulations, position-based dynamics passes for more solid or slushy snow surging underneath the atomised clouds, particle sims of snow flurries, snow clinging to trees being pulled down the slope, ground interaction and footfalls, and many practical smoke and dust elements which were layered into the mix in the comp.
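
The art-directed leading edge and the advected particles can be sketched in 2D. This is a hypothetical illustration, not Weta’s setup: a keyframed value controls how far the leading edge has travelled along a hand-drawn downhill path, and particles emitted at that edge are advected with forward Euler through a velocity field, here just a constant stand-in for the fluid simulation’s volume. The path, keys and velocity are made-up values.

```python
import math


def lerp_keys(keys, t):
    """Piecewise-linear interpolation of sorted (time, value) keyframes."""
    if t <= keys[0][0]:
        return keys[0][1]
    for (t0, v0), (t1, v1) in zip(keys, keys[1:]):
        if t <= t1:
            return v0 + (v1 - v0) * (t - t0) / (t1 - t0)
    return keys[-1][1]


def point_on_path(path, s):
    """Point at arc length `s` along a polyline given as (x, y) points."""
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        seg = math.hypot(x1 - x0, y1 - y0)
        if s <= seg:
            f = s / seg
            return (x0 + (x1 - x0) * f, y0 + (y1 - y0) * f)
        s -= seg
    return path[-1]


def advect(particles, velocity, dt):
    """Move each particle one forward-Euler step through a velocity field."""
    moved = []
    for x, y in particles:
        vx, vy = velocity(x, y)
        moved.append((x + vx * dt, y + vy * dt))
    return moved


# Hypothetical art-directed setup: a downhill path and keys for how far the
# leading edge has travelled along it (slow start, then a surge).
PATH = [(0.0, 100.0), (60.0, 60.0), (140.0, 20.0), (200.0, 0.0)]
FRONT_KEYS = [(0.0, 0.0), (2.0, 20.0), (6.0, 180.0)]


def front_position(t):
    return point_on_path(PATH, lerp_keys(FRONT_KEYS, t))


dt = 1.0 / 24.0  # one film frame
particles = []
for frame in range(24 * 6):
    particles.append(front_position(frame * dt))  # emit at the leading edge
    particles = advect(particles, lambda x, y: (8.0, 3.0), dt)
print(len(particles), "particles; front at", front_position(6.0))
```

Separating the keyframed front from the simulation is what makes the motion art-directable: retiming the keys changes where and when the snow erupts without touching the solver.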

For the final shot of the scene, once the avalanche has come to rest after wiping out all of the human armies, the debris field was hero-modelled and textured as a static environment component, with lots of drifting snow elements in the air.

What was the main challenge on this show and how did you achieve it?

At face value, the main challenge was getting through all of the work in the time we had. This was definitely a show that needed to be chopped up into four teams to divide and conquer.

But beyond the logistics of that, the other big aspect that had a number of us losing sleep was the heavy FX sequences: the waterfall at the beginning of the film and the avalanche at the end. Those two FX tasks were incredibly daunting and required a very serious focus on the tools and the pipeline early on to ensure we would be in a place to deliver the work at the quality that both Matt and we demanded. Both led to regular, focused reviews of our R&D in those areas.

What is your favorite shot or sequence and why?

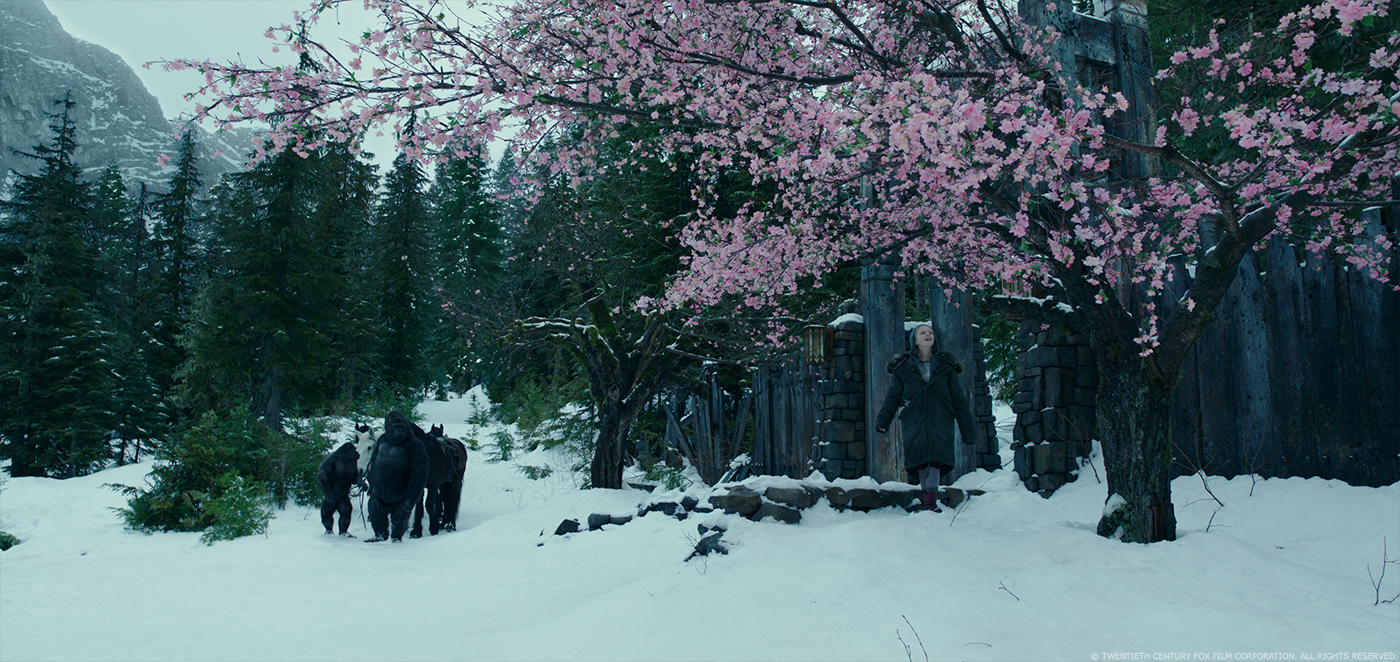

It’s not one of my sequences (this one was Luke’s), but the scene in the abandoned ski lodge where Caesar and company first meet Bad Ape is amazing. The performances are so memorable, and it looks spectacular. Because I didn’t work on it, I almost got to enjoy it like a regular audience member, and that in itself is a rare treat.

What is your best memory on this show?

Dailies. We had fun, and I had a great team.

How long have you worked on this show?

I was personally on board for almost exactly a year. I missed out on covering the live action shoot for this one due to scheduling conflicts and joined the team about the same time that Matt had gotten his first assembly cut of the film together for us to screen.

What is your VFX shots count?

Our final tally was just over 1,400 shots.

What was the size of your team?

My team consisted of around 40-45 brilliant artists, but all up, over 1,000 people at Weta contributed to the making of WAR FOR THE PLANET OF THE APES!

What is your next project?

I am currently deep into post-production here with my team on RAMPAGE, directed by Brad Peyton and starring Dwayne Johnson, which features three big critters who like to destroy things.

A big thanks for your time.

// WANT TO KNOW MORE?

Weta Digital: Dedicated page about WAR FOR THE PLANET OF THE APES on Weta Digital website.

© Vincent Frei – The Art of VFX – 2017
