In 2017, Joseph Kasparian explained Hybride’s work on ROGUE ONE: A STAR WARS STORY to us. He then worked on ASSASSIN’S CREED, VALERIAN AND THE CITY OF A THOUSAND PLANETS, MOTHER! and STAR WARS: THE LAST JEDI.

François Lambert began his career in visual effects in 1994 at Hybride. He joined Industrial Light & Magic in 2003 and then returned to Hybride in 2017. He has worked on many films, such as STAR WARS: EPISODE III – REVENGE OF THE SITH, PIRATES OF THE CARIBBEAN: DEAD MAN’S CHEST, STAR TREK, PACIFIC RIM and STAR WARS: THE LAST JEDI.

How did you get involved on this show?

Joseph Kasparian: Hybride and ILM have a strategic alliance, which allows us to collaborate with them on some of their most exciting projects. To date, this is our 15th collaboration with ILM.

François Lambert: And our fourth incursion into the STAR WARS Universe!

How was the collaboration with director Ron Howard and VFX Supervisor Rob Bredow?

Joseph Kasparian: We worked directly with ILM VFX Supervisor Pat Tubach and he would do the reviews with Rob Bredow and Ron Howard. After each director review we would receive notes that we’d discuss with Pat through cineSync whenever anything needed to be clarified.

François Lambert: SOLO was such an enjoyable and smooth ride. I feel very fortunate we could collaborate with such accomplished artists. I feel there was a genuine trust that we could participate in delivering their vision, which meant that we were part of the creative process.

What were their expectations and approaches about the visual effects?

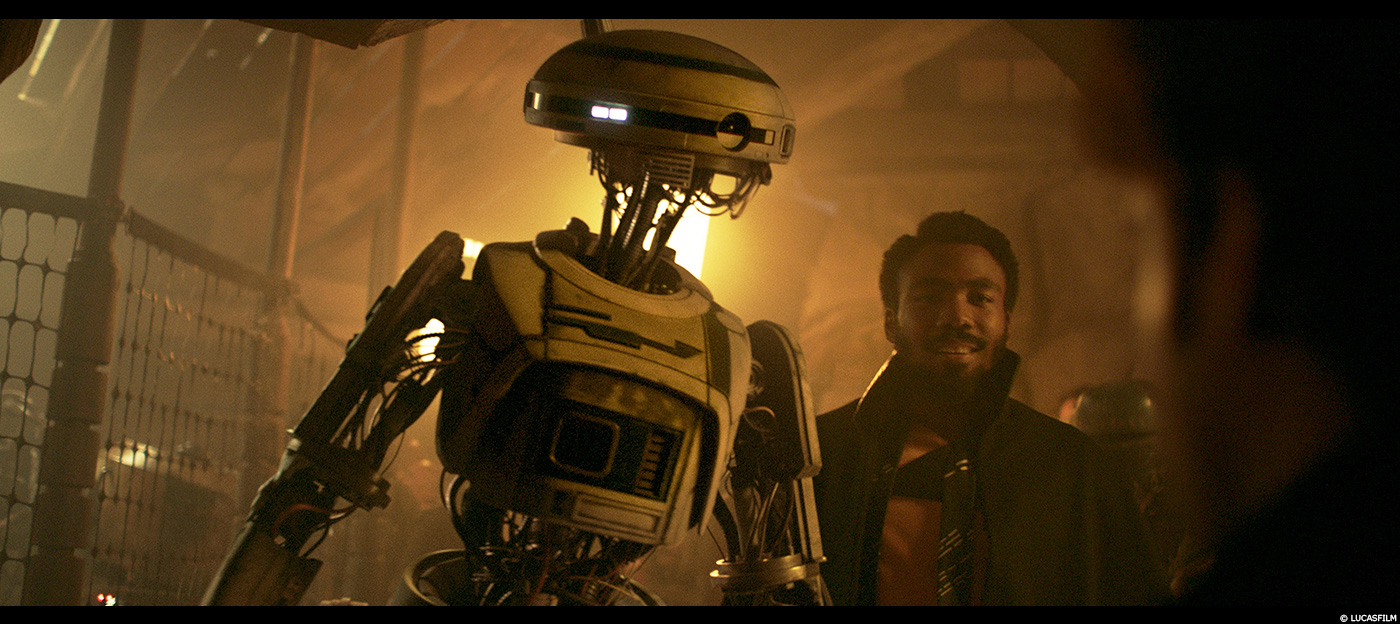

Joseph Kasparian: It was very important for them that we keep as many elements from the live plate as possible. This was the case for all the set extensions as well as for all the work we did on L3-37.

François Lambert: From our original brief with the ILM team, there was a significant interest on the part of Ron Howard and Rob Bredow in keeping as much as we could from the original plate’s set and characters, in order to preserve the retro feel everyone loved from the original movies. SOLO is the story of a heist, and focuses less on The Force; it’s a little more “realistic” even though it’s still happening in a galaxy far away. That shaped the way we would tackle our VFX load for the project.

How did you organize the work with your VFX Producer?

Joseph Kasparian: We worked closely to determine the level of difficulty and amount of time assigned to each department for each shot and we also did the same thing for all of the assets. We then came up with a schedule with the workload distributed evenly up to the final delivery date and we’d make revisions whenever things changed from the original mandate.

What are the sequences made by Hybride?

Joseph Kasparian: A big part of the work was done on L3-37, but we also created different CG environments for scenes that took place in the mine and on the Kessel landing pad. We created impressive 3D CG backgrounds and massive set extensions for the Space Port, where Han Solo gets his name. We also produced set extensions for the Falcon scene that takes place in a junkyard, where Han sees the Millennium Falcon for the first time.

François Lambert: Other VFX work by Hybride includes the Pen Sequence where Han Solo and Chewbacca meet for the first time. The scene involved a few CG characters, a CG chain and some wire removal, and although it wasn’t a very technically challenging sequence, it was a fun one to work on because that’s how we finally find out how they meet. Hybride also produced massive sandblasts, FX simulations and explosion enhancements. We were also asked to add drips to Quay’s “nose”. Quay Tolsite is a sketchy character that spits when he talks… so we used the audio track to modulate the snot and drip simulations that follow the conversation, so basically no two drips are the same!

What was the main challenge with L3-37 and how were the shots filmed with the actress playing this character?

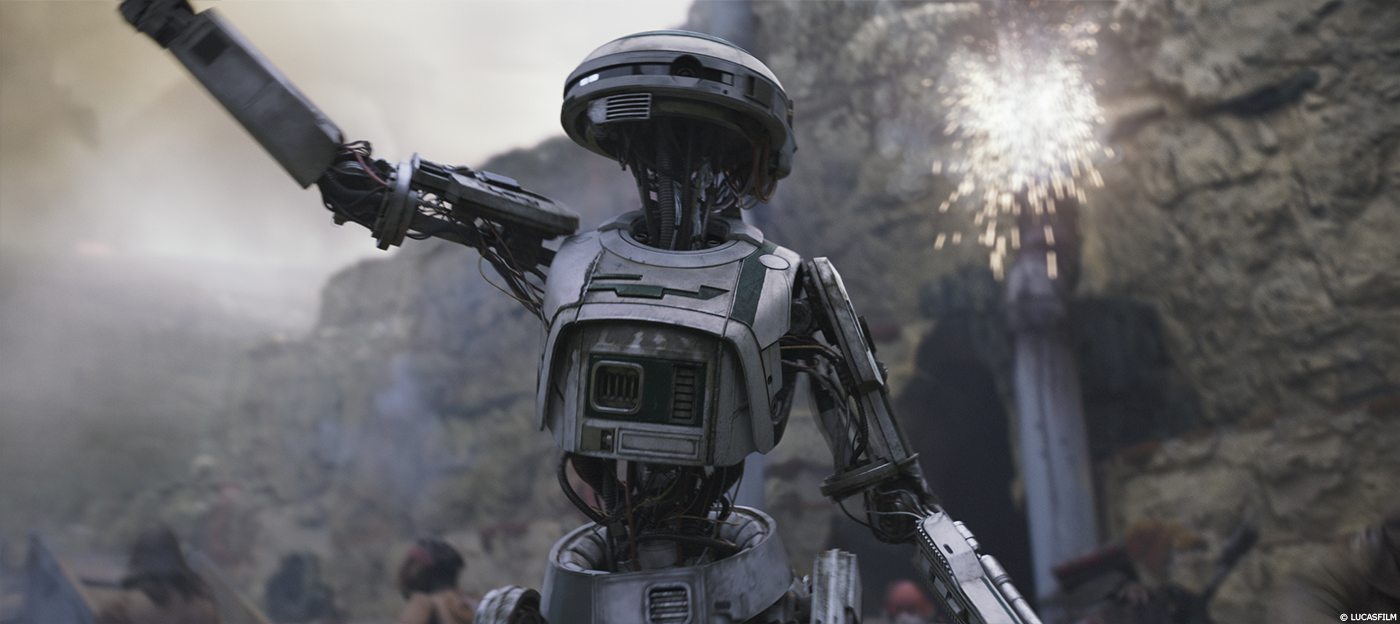

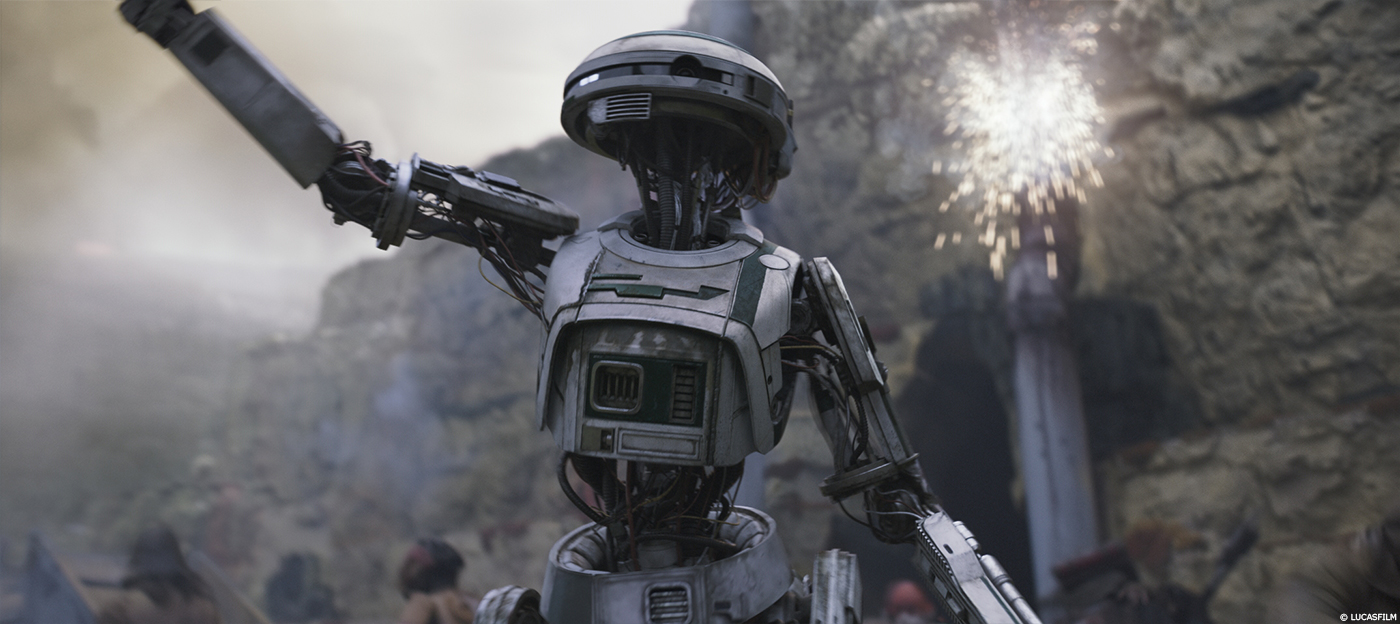

Joseph Kasparian: Contrary to what many believe, the droid’s character wasn’t recorded by a motion capture system. She was played by Phoebe Waller-Bridge wearing a green suit that also included a few droid parts. Our main goal was to match move as much as possible so we could retain as much of Phoebe’s performance as we could. We then matched the lighting from the different locations, and from there we kept as much of the live plate as possible and seamlessly added 3D L3 parts that matched the existing ones when we couldn’t. To transform the actress into a droid, we erased the character inside the suit, replaced it with wires and everything that makes up the robot and reconstructed the backgrounds behind the actress. Some shots have a lot of movement in them (crowds, people cheering behind L3, blaster shots and other ballistic effects), so for each shot we had to recreate everything that had been hidden by the green portion of the suit, then prepare the plate to receive L3. There were different blends between the practical L3 and the insides for each point of view, so we needed to analyze each shot one by one and adjust according to what we could keep from the robot, which also depended on the scene.

Can you explain in detail about the design and the creation of the new droid, L3-37?

Joseph Kasparian: The design and creation of the robot were done at ILM. They created the model with texture and rig since they were defining the look through a few master shots. Once approved, we received the asset (modeling and texture) and turntables so we could transfer it between the ILM and Hybride pipelines.

How did you handle the rigging and animation?

Joseph Kasparian: First, we asked ILM to send us range-of-motion clips to see how their rigs were designed, since we couldn’t share the rigs themselves because of our proprietary tools.

Afterwards, we created two rigs:

- The first one was used to match move the actor wearing the green suit and to make sure we had control over each piece of robot that moved during the shoot.

- The second rig enabled us to animate the final L3 model with all its wires, tubes and pistons, making sure they weren’t intersecting with each other. So basically, we started shots by match moving the actor in the green suit and then transferred the animation to the final rig. During reviews, one of the main challenges consisted mostly of correcting the slight discrepancies between the live action pieces and the CG L3.

Can you tell us more about the shading and texture work?

Joseph Kasparian: As I mentioned earlier, we have a long-standing relationship with ILM and we now share assets on a daily basis, so sharing textures and shading went very well. We had no problem matching everything perfectly even though we were using a different renderer (Arnold vs. RenderMan). Textures were done on the main asset at ILM and we took charge of all the variations throughout the sequences, since the model changed depending on the action.

How did you manage the lighting challenges?

Joseph Kasparian: We wanted to keep as many live pieces of L3 as possible. They had photoreal attributes that were extremely valuable (very fine details, specific bumps, detailed reflections), so for each location we used the Lidar scan, layout geometry and set photography to recreate the on-set lighting as accurately as possible. L3 moved a lot, so it was very important to have an almost perfect match throughout each shot. This allowed the compositor to seamlessly blend the CG L3 into the live action. Some blending zones or parts were different in each sequence, but very quickly we realized that it was important to keep the head from the live plate since that’s where the audience is looking.

Can you tell us more about your work during the droid’s rebellion?

Joseph Kasparian: In the robot rebellion sequence in the Spice mine, robots are smashing and breaking everything. Small explosions, light flashes and sparks coming in from all directions made integrating the CG L3 into the live action very difficult, since we needed to keep as much detail from the real robot parts as possible for the final shot. Background reconstruction also became more difficult because we had to reproduce a very complex light setup.

How did you work with Neal Scanlan and his team to enhance their animatronic creatures?

Joseph Kasparian: Different elements and tasks were required depending on the animatronic creature.

For the Mollock hounds we did some paint work to erase their tails, added slime in their mouths and fixed the suits that were too small to completely cover the dogs running on set. Quay Tolsite also needed FX whenever he spoke, so we added drips and steam that were triggered by the audio track.
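To illustrate the general idea of driving an effect from an audio track, here is a minimal Python sketch. It is not Hybride's setup: the function names, the threshold and the maximum rate are all hypothetical, and it assumes the dialogue is available as a plain list of mono samples. It computes one loudness value per film frame, then maps loudness to a drip emission rate that a particle system could read.

```python
import math


def frame_envelope(samples, sample_rate, fps=24):
    """Return one RMS loudness value per film frame (hypothetical helper)."""
    per_frame = int(sample_rate / fps)  # audio samples covered by one frame
    envelope = []
    for start in range(0, len(samples) - per_frame + 1, per_frame):
        window = samples[start:start + per_frame]
        envelope.append(math.sqrt(sum(s * s for s in window) / per_frame))
    return envelope


def drip_emission(envelope, threshold=0.1, max_rate=20.0):
    """Map per-frame loudness to an emission rate in particles per frame.

    threshold and max_rate are illustrative values, not production settings.
    """
    peak = max(envelope, default=0.0) or 1.0  # avoid dividing by zero on silence
    rates = []
    for level in envelope:
        normalized = level / peak
        # Stay silent below the threshold so background noise emits nothing.
        rates.append(0.0 if normalized < threshold else max_rate * normalized)
    return rates
```

Because the rate follows the loudness of each line of dialogue, the emission pattern changes with every word, which is one way to get drips that never repeat.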

The first meeting between Han and Chewie is a great fight. How did you enhance this sequence?

François Lambert: The scene involved a few CG characters, a CG chain and some wire removal, and although it wasn’t a very technically challenging sequence, it was a fun one to work on because that’s how we finally find out how they meet!

Every Star Wars movie takes us to beautiful places. Can you explain in detail the creation of the various environments?

François Lambert: The Kessel mine was probably the biggest environment we had to create on this show. It is a triangular-shaped open mine concept that had to feel like it was made by “alien technology” in a very geometrical kind of way. We started with references and artwork made by ILM, and after that they provided us with the model since the other shots were set in the same environment. The complexity came from the multiple camera angles and how this would stitch with the live plates, so we had to upres and tweak the model to make it fit. After that we added details to support close-up scenes. Various set dressing objects and machinery were created to populate the set, in addition to FX-simulated steam plumes and dust. We also integrated extras from various plates, adding characters that were walking, fighting and running on the multiple levels of the mine.

Joseph Kasparian: We generated several different environments that are seen throughout the show and all of them were developed differently.

The Spaceport sequence was modeled and textured in a more traditional fashion, but it was shaded, rendered and comped in Autodesk Flame using its real-time capabilities. That sequence had a lot of haze and smoke in the live plate and required large-scale set extensions. The first versions we presented already contained all of the desired elements, which helped speed up the approval process.

The Falcon sequence takes place in a ship junkyard. ILM’s art department provided us with basic design geometry that we rebuilt and textured according to the approved artwork. We created the scene assembly, added different levels of detail and created specific variations for the different shots in the sequence. We used Arnold for the final render but we also used Autodesk Flame to help develop the look in real time with hazing and depth cueing. Some lighting enhancements were done by loading the high-res model into the comp software and adding specific lights to give it more style.

Can you explain in detail about the creation of the Wall of Fire?

François Lambert: To save the crew, Qi’ra goes back inside and comes back with bombs. At that point Ron Howard suggested that we replace the explosions with a “wall of fire”. Although it came later in the process, we were able to create a chain reaction of explosions and it turned out to be very convincing!

Since there were a couple of explosions in the plate photography, we used them as references and worked on details and simulations that matched those types of explosions. Once our technique was in place, we started designing a plausible trail across the set that followed the pools of liquid. Working with references of oil on fire over water, we spread our fire trail over the 6 shots, igniting fireballs in its path. We then comped the FX passes through the live plate’s real explosions to complete the task and get some of the live plate’s details in there.

How did you manage this massive simulation?

François Lambert: We used Houdini for our simulations and renders. We made three to four bundles of simulated fireballs that we could re-use and re-time from one shot to another to get the right progression and continuity. We basically wanted to simulate the longest shots with pre- and post-roll, then run the simulation with the different camera angles.
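The re-use and re-timing of a cached simulation across shots can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Hybride's Houdini setup: the function names and parameters (`cache_offset`, `speed`, `pre_roll`) are hypothetical, and the cache is assumed to be a simple sequence of frames indexed from 0.

```python
def cache_frame(shot_frame, shot_start, cache_offset=0.0, speed=1.0, pre_roll=0):
    """Map a shot frame to a (possibly fractional) frame of a cached simulation.

    pre_roll skips the frames simulated before the action starts,
    cache_offset slides the cache in time for continuity between shots,
    and speed re-times it (0.5 plays the cache at half speed).
    """
    local = shot_frame - shot_start  # frames elapsed since the shot began
    return pre_roll + cache_offset + local * speed


def blend_frames(cache_len, frame):
    """Return (lower index, upper index, weight) for a fractional cache frame.

    The frame is clamped to the cache range so a shot never reads
    past the first or last simulated frame.
    """
    frame = min(max(frame, 0.0), cache_len - 1)
    lower = int(frame)
    upper = min(lower + 1, cache_len - 1)
    return lower, upper, frame - lower
```

With one long cache that includes pre- and post-roll, each shot only needs its own start frame, offset and speed, so the same fireball bundle can appear at a different point in its life in every camera angle.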

Let’s talk about the Millennium Falcon. How did it feel to work on such an iconic ship, and can you explain your work on the Falcon in detail?

Joseph Kasparian: It was great! It was fantastic to work on such an iconic ship! The model and textures were sent to us from ILM. We started by publishing the asset in our pipeline using Arnold as the main renderer. We did the layouts of the ship for all the big shots then sent the camera to ILM to have them render the elements that would be sent to our 2D department for final comp. Some parts of the Falcon were actually built on set so we often had to adjust our model to fit perfectly into the live action one.

François Lambert: Additionally, we had to create a set extension for the Landing Pad and Mine Breakout sequences. Only a quarter of the Falcon was built on set, so we had to complete the ship. The CG model had to be tweaked to match the plate photography, since the physical build had slightly different proportions.

Which sequence or shot was the most complicated to create and why?

François Lambert: There was one particular shot in the final showdown between Enfys Nest and the Crimson Dawn crew, for which we had to create FX simulations for a massive sand blast triggered by Enfys’s powerful weapon. The effects, spread over two shots, were designed and shot to be in slow motion, with a speed ramp on impact. This included slow-motion sand, dust and particulates shooting towards camera, with a subtle blue electric shock wave effect. Artwork and references were provided by ILM, but we had to design how this would translate when everything was in motion. It was quite tricky to create since most of the work had to be simulated before we could actually review and comment. At some point near the end of the project, it was decided that the speed ramp was too different from the Star Wars Universe, so we changed and adapted our FX simulations for real-time speed. In the end, it turned out better for the movie, so we feel it was the right decision. But it was a challenging task nevertheless, and we’re very proud of the result.

What is your favorite shot or sequence?

Joseph Kasparian: So many of L3’s shots were incredible because of Phoebe’s performance. Keeping as much of the live plate as we could truly brought photorealism techniques to another level. But one of my favorite shots, for which we didn’t do that much work, is one where we erased a stunt man in a green suit inside a small robot box smashing everything on top of a control panel.

François Lambert: My personal favorite is the Mine Breakout sequence; we completed over 150 shots for that space battle. It’s a suspenseful, action-driven (and sometimes sad) moment in the movie, with blasters shooting, explosions, robots and… Wookiees! That’s what STAR WARS is all about!

What is your best memory on this show?

Joseph Kasparian: From the groundbreaking techniques used on L3 to the real-time look development of the set extensions, the variety of effects and the number of shots we produced for the show made the Hybride staff very proud to have had the opportunity to work on a movie of that scale. The relationship with the entire team at ILM was amazing, and their trust in our plan and schedule from early on made the process of delivering finals every week smooth and efficient.

How long have you worked on this show?

9 months, from August 2017 to April 2018.

What’s the VFX shot count?

We completed 413 shots for the show.

What was the size of your team?

A total of 115 artists worked on the project.

A big thanks for your time.

// WANT TO KNOW MORE?

Hybride: Official website of Hybride.

© Vincent Frei – The Art of VFX – 2018