In 2013, Chris Harvey told us about Image Engine’s work on ZERO DARK THIRTY. He then worked on FAST & FURIOUS 6 and R.I.P.D. Today, he talks about his work on CHAPPIE.

Image Engine has worked on all of Neill Blomkamp’s movies. How was this new collaboration?

Personally I did not work on Neill’s other films at Image Engine, so it’s tough to say how it was different exactly, though I guess that was a difference in itself 🙂 In terms of shot count this film actually had more, both overall and in terms of the number of shots handled at Image Engine.

I served as both the internal VFX supe for Image Engine and the overall VFX supe for the entire show, overseeing work at all facilities. As far as the collaboration itself, I think it was great. Neill and I seemed to share a pretty common vision and taste in terms of the visual effects work, and we developed a great relationship with a lot of mutual respect. Neill is a very collaborative filmmaker, and I would say that, beyond just myself, the artists themselves really felt that collaboration with Neill.

What was his approach for the visual effects?

That’s a very broad question really and could be answered in a number of ways, I suppose. Obviously he is very VFX savvy, having come from that background himself. But that alone is not what makes him such a good director to work with from a visual effects perspective. Neill knows what he wants. I mean he really knows what he wants; things are incredibly thought through in his mind… way before ever going to camera I think he has a pretty clear picture of what it’s going to look like in the end. This makes approaching the VFX very proactive. We are able to really think things out, plan, and anticipate the issues early. He is not one to just jump at “cool new tech” just because it’s a possibility. And while he trusts your final call on an approach, he will also question it to make sure that it’s worth his time… a good example on this film is the absence of any actual motion capture. Most people on a film where the lead character is going to be entirely digital would never even question the use of mocap, it would be assumed… but not with him. And in the end, after testing it thoroughly, we chose not to go that route, and it was the right choice. It’s that mentality of questioning, and of choices needing to have a purpose that will drive the story and overall filmmaking process forward, that permeates everything he does. And in the end it allows you to do a lot more with a lot less.

Can you describe one of your typical days on set and then during post?

HA, there is no typical day on-set! But here goes: a typical day actually begins the day before… at the end of the shoot day Neill and the heads of departments (including myself for VFX) will do a walk-through of what he has planned for the next day. That allows us to bring anything up or discuss various considerations we may have that affect other departments. After that I will meet with my team and review with them what we will be dealing with the following day. Once the day begins the VFX team usually arrives early. This allows us to get a head start collecting various data before shooting begins: surveys, textures, etc. Once shooting begins we continue to collect data: camera, set, texture, lighting, cyber scans, FX elements (whatever is relevant for that particular day)… usually we are running pretty hard. I also spend a fair bit of time beside the DP watching the shots and making sure nothing unexpected is going on. Occasionally I will offer Neill ideas on things he could try related to story driven by VFX. Depending on the day I might also be showing Neill QuickTimes or images of things that are going on back at the facility (early look development shots, asset builds, etc.).

Once shooting wraps the VFX team would meet back at the hotel to review and correlate all of the material we collected throughout the day; usually that’s another couple of hours. That usually wraps the rest of the team, and at that point I can start downloading and reviewing material that’s being sent to me by the artists back in the facility (and occasionally have cineSync reviews). Get a few hours’ sleep and then we are back at it again 🙂 I also like to stay pretty in sync with what’s happening back at the office, so during slow weeks for VFX I would take the “short” 24-hour trip from SA back to Vancouver to check in with the crew there, push things and relay first-hand the goings-on in the shoot. It probably sounds like I was going like crazy, and I suppose I was, but no harder than the rest of the team out there. It was an incredible group of people!

After shooting wraps we are all back at the office and things get a little more “routine”. Comp dailies first thing in the AM, meetings with leads to discuss the week’s goals and progress, rounds, animation dailies, then another set of dailies (comp and lighting) mid-afternoon. Various meetings and check-ins throughout the day and, on scheduled days, reviews with Neill in the early evening (after mid-afternoon dailies). And then once the other vendors came on I would usually do a couple of daily sessions with them: mornings for the cineSyncs and early afternoon for the local companies. And throughout the day each department is often having its own set of dailies and reviews, etc… everyone is running in a sort of synchronized, organized chaos… haha. Days are pretty full and the team often jokes about me being ADHD, always needing something new to review. We move pretty fast… I hate wasting much time in meetings, or even dailies for that matter. Joking aside though, everyone has targets, goals and a plan to get there, and we drive hard towards that and try to get out of the office with as little overtime as we can so we can all still have and enjoy a life outside of work. I personally hate OT and think an abundance of it is a result of bad planning, and it in turn results in mistakes, low morale and less creativity.

The movie features two robots, Chappie and the Moose. How did you work with the art department?

Actually, while people probably think of only two robots, it was a bit more complicated than that, but I will go into that more later. As for working with the art department on them, it wasn’t really the art department that worked on the robots. It was a few concept artists, Weta Workshop and some of the artists at Image Engine that were responsible for Chappie and the Moose’s creation.

We did work with the art department on other things though, primarily when our paths crossed with things Chappie has to learn or do. For example, the scene in which Chappie paints the old car. There was a lot of back and forth between myself and Production Designer Jules Cook about how the painting would be created, how it would look, and how it would be shot.

Can you tell us more about the design process and the work with Weta Workshop?

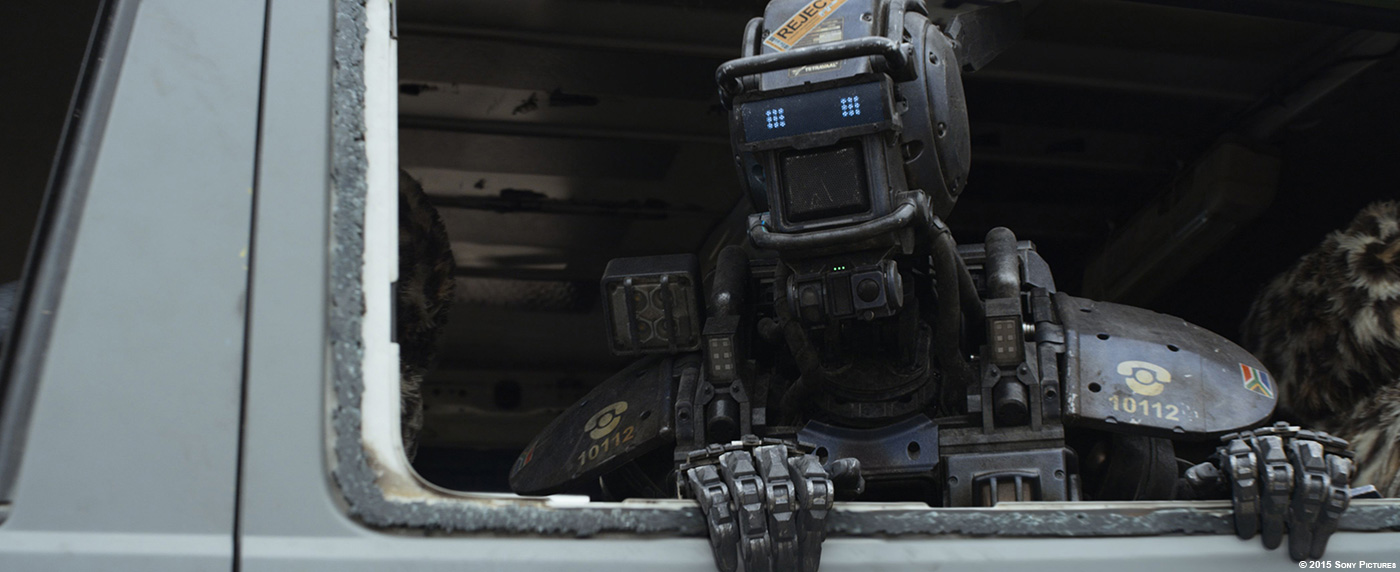

This was actually a lot different than other projects and was a great experience. Typically when there is going to be a practical build of something (in this case the robots, Chappie and the Moose) it’s the practical guys that build it, then we in the VFX world have to scan and photograph and match to that. But in this case Neill wanted to go a different route and have us take the initial 2D concept work and do the initial build digitally, and then have the practical guys match us. So we got involved a little over 6 months prior to shooting to start the digital builds.

One of the key aspects of this was to ensure the joint and movement design was going to work for the film and for Sharlto’s performance. We invested a huge amount of time working out the mechanics of how Chappie was actually going to physically function. Mechanically Chappie really works, and it’s incredibly complicated. And since we knew he was the hero character and would be subject to shooting like any other lead character in a film, the amount of model and texture detail was pretty ridiculous! Anyway, once the model was signed off on by Neill we would send the digital files over to Weta Workshop to fabricate through 3D printing and actual part sourcing. The 6-plus months of lead time might sound like a lot, but when you consider they actually had to build this thing it’s actually pretty tight, so we would send the models over in pieces (the arms, legs, head, torso, etc.) so they could get a head start. Then there would be the back and forth ensuring all aspects were correct from a physical manufacturing point of view (are the screws the size of real screws, would you really have a support pin this size, etc.).

At the point of model completion Weta Workshop took over… the surfacing was something we wanted them to tackle. We did color studies, but the actual surface properties and treatment was something they led. After all, you can’t get more real than something that is real. When we got to the shooting location, part of our prep was photographing all of their builds in very controlled environments so we could use that as the basis of our texture painting and shader look development. All told we probably spent at least 10 full days shooting textures and look-development reference photography in very calibrated and controlled environments (though sometimes very ad hoc). These later fed back to the asset team: Mathias Latour (look-dev lead) and Justin Holt (texture lead) set up a calibrated and controlled environment to develop the look for the robots, so that when we later had to drop them into many different lighting scenarios we knew they would just work across the board without needing per-shot and per-scene tweaks.

At the end of the day I would say it was a really great way to work, with huge benefits and efficiencies, and I would definitely strive for this approach on future projects.

Can you explain in detail the creation of both robots?

These models were some of the biggest single assets I have seen, simply due to the amount of screen time and scrutiny they would have to hold up to. As such there was a lot of time put into developing them. I will throw out some general statistics below, as I already mentioned the general design process above… but first I wanted to go into more detail regarding there being more than just “two” robots.

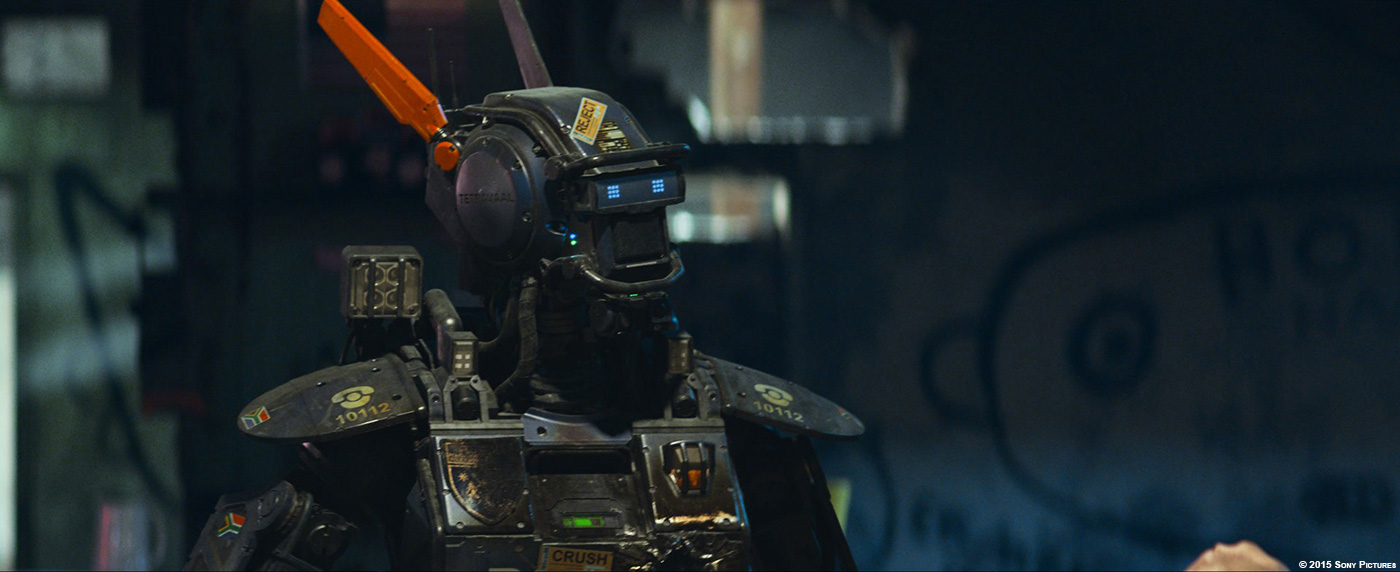

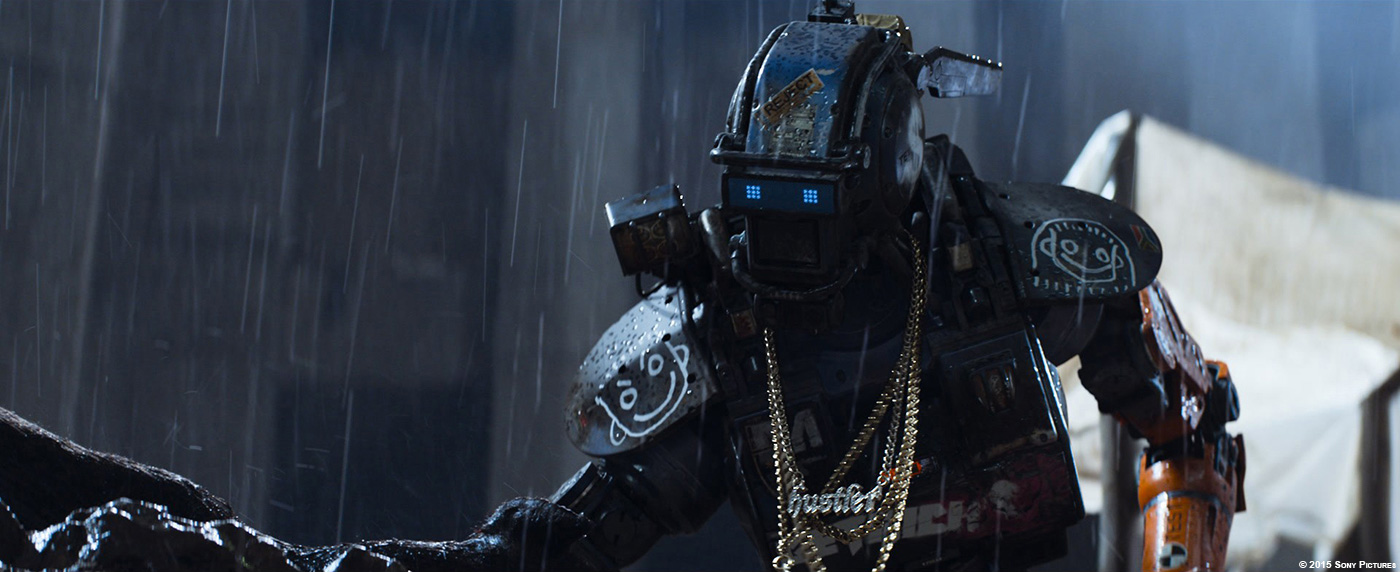

Chappie is a robot police Scout, all the Scouts are essentially the same. However obviously they all need differences to add a visual richness and believability to the film. Robot Deon is also a Scout, though he has an entirely different paint scheme as his model is a prototype model. And then of course there is Chappie himself, he goes through a lot in this film, gets shot, head opened up, arm cut off, firebombed, spray painted, shot to hell, dressed up with bling, and later finally “wakes up” in a totally different vandalized police Scout.

All told there are 8 main stages he has to go through and another 12 sub-stages. Each of these could require adjustments, tweaks and totally new textures, shaders and models. While technically this meant we had roughly 30 different versions/assets of Chappie/the Scout, from a story point of view they all came from the same factory, so there were obviously a lot of shared components. It would have been a nightmare to try to handle and track each change and then make sure every other version got the same update propagated along, so instead we treated Chappie as an UBER asset. Every single texture, shader, model, and rig adjustment for every single version rode along with this single uber asset. We then used Shotgun and our internal database Jabuka to automatically flip the correct switches to toggle on and off the various configuration files that would put this uber asset into the appropriate state for any given shot. It was a complex system but ended up being incredibly effective and streamlined. The same method was used for Moose, but he only had 8 total sub-states. We also came up with some specific comp tools to handle his “lip-sync” or LED light bar and handle all dialogue animation in compositing. This saved us every time the dialogue changed (and this happens in the last week with ADR) because we didn’t have to go back to animation or lighting and re-render, but could just handle the changes quickly in comp.
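As an illustration only, the uber-asset idea described above (one asset carrying every variation, with per-shot switches selecting its state) could be sketched roughly as follows. This is not Image Engine’s pipeline and does not use Shotgun or Jabuka; the switch names, state names, their ordering and the shot codes are all hypothetical.

```python
# Hypothetical sketch: one "uber asset" whose state is resolved per shot.
# All switch names, state names and shot codes below are invented.

# Default value of every toggle carried by the single uber asset.
BASE_SWITCHES = {
    "arm_attached": True,
    "head_panel_open": False,
    "spray_paint": False,
    "chains": False,
}

# Each state only lists what it changes relative to its parent state,
# so a fix made in a shared parent reaches every derived state.
STATES = {
    "factory": {"parent": None, "switches": {}},
    "spray_painted": {"parent": "factory", "switches": {"spray_paint": True}},
    "bling": {"parent": "spray_painted", "switches": {"chains": True}},
    "arm_cut_off": {"parent": "bling", "switches": {"arm_attached": False}},
}

# Stand-in for a production database lookup (shot -> asset state).
SHOT_TO_STATE = {"shot_010": "factory", "shot_450": "arm_cut_off"}


def resolve_state(state_name):
    """Walk from the root state down to state_name, applying overrides."""
    chain = []
    current = state_name
    while current is not None:
        chain.append(current)
        current = STATES[current]["parent"]
    resolved = dict(BASE_SWITCHES)
    for name in reversed(chain):  # root first, most specific last
        resolved.update(STATES[name]["switches"])
    return resolved


def switches_for_shot(shot_code):
    """Return the full set of toggles the uber asset needs for a shot."""
    return resolve_state(SHOT_TO_STATE[shot_code])
```

Calling `switches_for_shot("shot_450")` in this sketch returns the complete toggle set for that shot, with the later state inheriting the spray paint and chains from the states it builds on.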

Now for a bunch of stats:

CHAPPIE MODEL

- Original state poly count (pre-subdivided): 3,226,042

- Final state poly count (pre-subdivided): 3,976,511

- Objects: 2740

- Roughly 400 bolts

- 40 pistons

- 161 wires/hoses

- 99 percent water tight for 3D printing (remember this thing had to actually be manufactured)

MOOSE MODEL

- Poly count (pre-subdivided): 6,313,018

- Objects: 2785

- Roughly 1000 UV tiles

- Over 7000 bolts

- Roughly 100 wires/hoses

TEXTURE

- total number of texture maps for ALL versions : 56148

- number of UDIM texture tiles for Chappie : 538

- texture resolution per UDIM tile : ranges from 256-4096

- total texture memory size on disk for all : 152.3 GB

NEW PROPRIETARY TECH

- Advanced shader layering system that allows for realistic soft transitions between material properties.

- Photoshop-like adjustment layers allowing for globally affecting the various shading components from underneath layers.

- Auto-sim and qc renders of necklace and cable sims.

- A new physically-based geometry-lighting pipeline.

- Custom audio setup to drive the mouthlight from Sharlto’s audio track, done at comp stage so it can be updated for last minute ADR changes.
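To give a rough idea of what driving a light from an audio track can look like, here is a minimal sketch that turns audio samples into one brightness value per film frame. It is an assumption-laden illustration, not the studio’s comp tool: the function name, the RMS loudness measure, the peak normalisation and the default sample rate and frame rate are all choices made for this example.

```python
import math


def mouth_light_levels(samples, sample_rate=48000, fps=24):
    """Return one 0..1 brightness value per frame from mono audio samples.

    Loudness per frame is measured as RMS, then normalised by the loudest
    frame. A trailing partial frame is ignored. (Hypothetical helper.)
    """
    samples_per_frame = sample_rate // fps
    if samples_per_frame <= 0:
        raise ValueError("sample_rate must be at least fps")

    rms_per_frame = []
    last_start = len(samples) - samples_per_frame
    for start in range(0, last_start + 1, samples_per_frame):
        window = samples[start:start + samples_per_frame]
        mean_square = sum(s * s for s in window) / samples_per_frame
        rms_per_frame.append(math.sqrt(mean_square))

    peak = max(rms_per_frame, default=0.0)
    if peak == 0.0:
        # Silence (or no full frame of audio): light stays off.
        return [0.0] * len(rms_per_frame)
    return [value / peak for value in rms_per_frame]
```

Because the curve is computed from the audio alone, a new ADR line only requires re-running the function on the new track, which matches the reason given above for doing this at the comp stage.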

I suppose some of this might seem extreme, but remember Chappie had to hold up to close to 70 minutes of screen time across 1000 shots with any variety of camera framing and composition, and Neill didn’t shy away from close-ups!

Can you tell us more about the rigging of Chappie?

As discussed above, we got involved very early on with this process just to make sure that what Sharlto was going to do would later translate to our digital hero. Neill and I made this mandate that Chappie have no cheating with his jointing, that everything must actually be functional and, as an added bit, that there be no ball joints! It was a very tall order for the rigging and modeling teams to sort out. Jeff Tetzlaff (the model lead) and Tim Forbes, Markus Daum, and Fred Chapman (our rigging team) would go back and forth sorting out the minutiae of getting it all to work. And in the end we needed to have our R&D team write a completely new and custom IK solver to handle the complicated jointing structure we came up with.

Further to that, we used cyber scans of Sharlto to line up joint placements and to figure out the mapping from Sharlto’s performance on-set to our digital asset. In fact the Chappie rig asset carried with it a Sharlto scan, so the animators could toggle Chappie off and look instead at a digital version of Sharlto to ensure they were correctly capturing aspects of his performance and his spatial position within a shot.

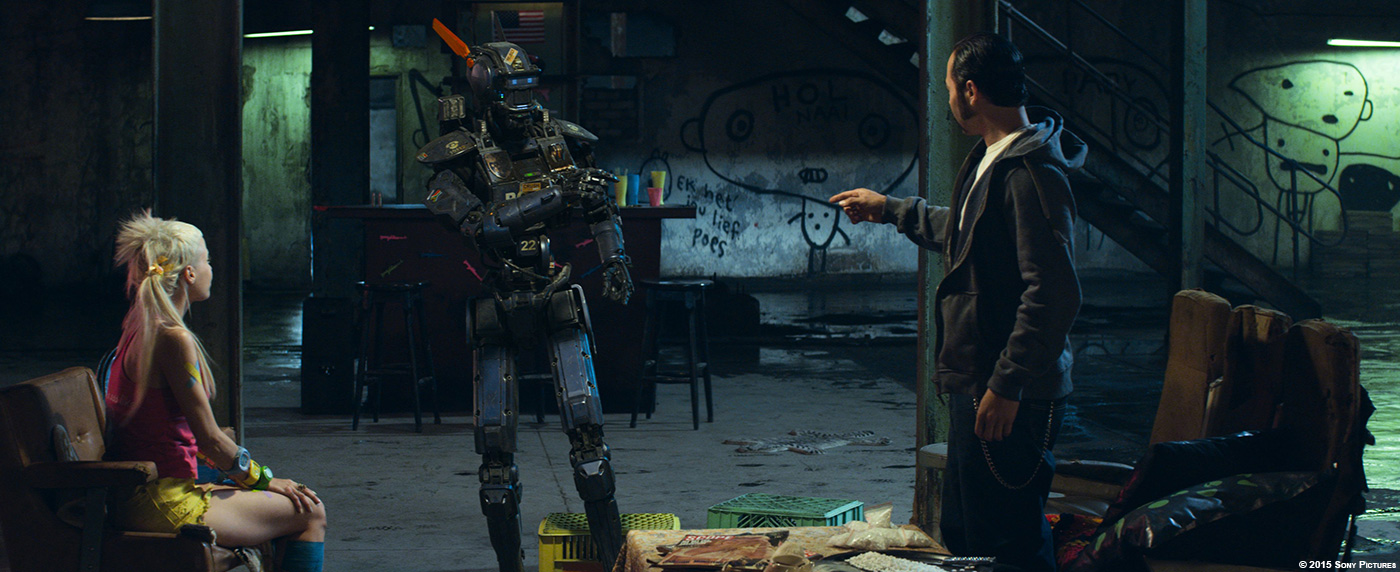

We also had Weta Workshop manufacture a series of costume pieces for Sharlto and the other stunt performers to wear. Essentially they built Chappie’s chest and back to scale (with removable shoulder flaps) on a motocross chest armour. This would restrict certain movements of the performers to those Chappie would be capable of with his specific dimensions, and also provide accurate contact points for other actors who touch him.

Finally on top of the base rig all sorts of little mechanical things were driven dynamically (with manual over-rides) so you would get the little ticks and knocks that you might expect in gears, levers, hoses etc… on something mechanical.

The animation of Chappie is really impressive. How did you work with your animators to achieve this great result?

Well, contrary to common belief, we did not use motion capture. Every movement on Chappie is 100% hand keyframed. Obviously this all began with Sharlto. He was/is Chappie and performed on camera. It was his excellent performance that the animators essentially used as law, mimicking his movements. However, human movements and emotion do not always simply translate to the robotic Chappie, and we had a great team of animators, led by Animation Supervisor Scott Kravitz and his leads, Earl Fast, Jeremy Mesana, and Sebastian Weber. They would constantly be adding subtleties into the robot’s mechanics, interpreting/directing the facial performance and looking for ways to better translate Sharlto’s acting choices onto our digital Chappie. At the end of the day it was a very talented group of people, my hat’s off to them… match-animation is not always thought of as glorious work, but they took it way past that and I think helped to create a character people will remember.

I should also mention that without our matchmove and tracking team, led by Mark Jones, the animators would not have had the solid base to begin their work.

Did you receive specific indications and references for the robots’ animation?

Not specifically, I mean the goal was to match Sharlto’s performance, so that was the reference. In terms of mechanics etc… we sourced all kinds of things: the robots that Boston Dynamics are creating (and other places as well), and we even looked at manufacturing robots on assembly lines for reference and inspiration. For the Moose, Neill often referenced tanks, since Moose is essentially a walking tank.

Sharlto Copley is playing Chappie. How did you work with him?

Sharlto is awesome, not just in his performance as Chappie but in his attitude towards visual effects. I am sure you have seen some of his photos and interviews in the media where he wears this custom shirt with all the VFX artists’ names on it, as he likes to point out it was all of these “unsung heroes” that helped bring Chappie to life… huge respect for him.

On set he and I talked about things he might be able to add to his performance that would help it read better for the animators… he was always conscious of the fact that there was another step after his scenes were filmed and he wanted to make sure that what he was trying to convey would translate. After shooting he came by the office and walked through and met the team, it was really great.

Can you tell us more about the lighting and compositing work for Chappie?

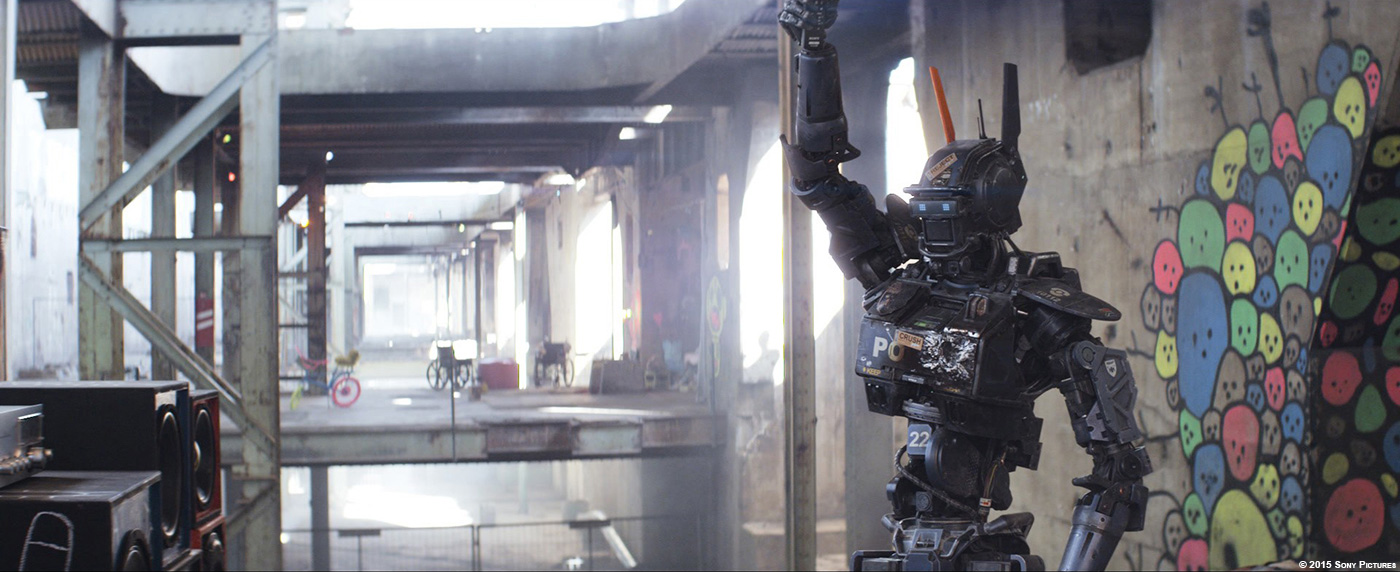

The lighting team, led by lighting supervisor Robert Bourgeault, did an incredible job. For Chappie I really wanted to make sure that when he was lit we would “feel” him moving through the volume of space he occupied, and for that we decided that even though we never had to replace or extend any environments we would need to create accurate representations of them. Instead of a simple spherical HDRI image-based light set-up, we wanted all of that image-based lighting to be projected from geometry and lights that occupied the correct physical space. The results, while subtle, add a real sense of nuance and tangibility to his physical presence in the world. To achieve this, lighting really began with the data collection on-set. Typically you will grab a grey ball, an HDRI and a color chart… we got a lot more. We took our HDRIs from dual heights (this allows you to triangulate objects in the environment, light positions, etc.) as well as along paths of movement. So if Chappie, for example, walked through the room, we would take multiple HDRIs along the path of movement… this gave us a lot more accurate data for re-projecting and really allowed Chappie to “move” through the light. We also photo-scanned the locations so we could, together with survey data, reconstruct low-resolution representations of the set. The lighting artists would then set up scene- and environment-based light rigs that we could run through a series of shots. There were about 25 scenes with approximately 70 environment set-ups. These base lighting rigs gave startlingly good renders right out of the gate, which allowed our lighting artists to spend their time tuning each shot and making aesthetic choices like you might on-set if Chappie were really there… and turn all that around very quickly.

In terms of comp, Shane Davidson supe’d that team, and as mentioned they were getting great results from the lighting department, but obviously they had their work cut out for them as well… making sure everything would integrate back into the shots as tightly as possible. It should also be noted that the BG prep team, led by Casey Yanke, did a tremendous job removing Sharlto and the other grey-suit actors from the shots… a monumental task. Aside from all the usual integration aspects involved in any compositing of CG into plates, the biggest work was always where there was heavy interaction between Chappie and other cast members. Contact work is always laborious, but it can also really help sell the physical presence, and so for Chappie I really encouraged performances on-set to not shy away from it, so there was no shortage of difficult work for the comp team. It would often involve warping, cutting up of arms, repositioning, contact reflections, shadows… any trick they could throw at it to get everything to sit.
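The dual-height triangulation mentioned above can be illustrated with a small geometric sketch. Assume the same light has been located in two HDRIs shot at known positions, and each sighting has already been converted into a direction vector (that conversion is not shown here and depends on the panorama convention). The light’s position can then be estimated as the point closest to both sight lines. The function name and the example numbers are hypothetical.

```python
def triangulate_light(origin_a, dir_a, origin_b, dir_b, eps=1e-9):
    """Estimate a 3D light position from two sight lines.

    Each line is origin + t * direction. Returns the midpoint of the
    shortest segment joining the two lines, or None if they are
    (nearly) parallel and no stable estimate exists.
    """
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w0 = [p - q for p, q in zip(origin_a, origin_b)]
    a = dot(dir_a, dir_a)
    b = dot(dir_a, dir_b)
    c = dot(dir_b, dir_b)
    d = dot(dir_a, w0)
    e = dot(dir_b, w0)

    denom = a * c - b * b
    if abs(denom) < eps:
        return None  # parallel sight lines: cannot triangulate

    t = (b * e - c * d) / denom  # parameter along line A
    s = (a * e - b * d) / denom  # parameter along line B

    point_a = [o + t * v for o, v in zip(origin_a, dir_a)]
    point_b = [o + s * v for o, v in zip(origin_b, dir_b)]
    return [(p + q) / 2.0 for p, q in zip(point_a, point_b)]


# Example with made-up numbers: two captures on the same tripod position,
# one metre apart in height (Y up), both sighting the same lamp.
lamp = triangulate_light((0.0, 1.0, 0.0), (2.0, 2.0, 0.0),
                         (0.0, 2.0, 0.0), (2.0, 1.0, 0.0))
```

With real measurements the two lines rarely intersect exactly, which is why the sketch returns the midpoint of the closest approach rather than solving for an exact intersection.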

There is a beautiful slow-motion shot of Chappie on fire. How did you create this shot?

Chris Mangnall.

No seriously, Chris Mangnall… he is this crazy genius of an FX artist and was responsible for the fire effects in the film (among other things). His CG fire is some of the best I have ever seen… when we compared it side by side to the plate photography it was uncanny. Which means that yes, we did have plate fire… we actually threw a fake Molotov cocktail at a stunt guy and lit him on fire, it was awesome. At the end of the day we couldn’t use it for various technical and performance reasons, but it gave us a 1:1 target to aim for and Chris nailed it.

Can you tell us more about the various FX and destruction?

This wasn’t really a show with a ton of FX destruction. FX did play a significant role in the film, but more in the supportive way that FX is often called on to play. Greg Massie (FX lead) and his team were repeatedly called on to add nuances for environmental integration. Moose obviously “stirred” up the environment a lot, but Chappie also required similar work. They did a variety of things: dust, water, rain interaction, bullet shells, digital squibs (dust, dirt, mud, brick, etc.)… the list goes on.

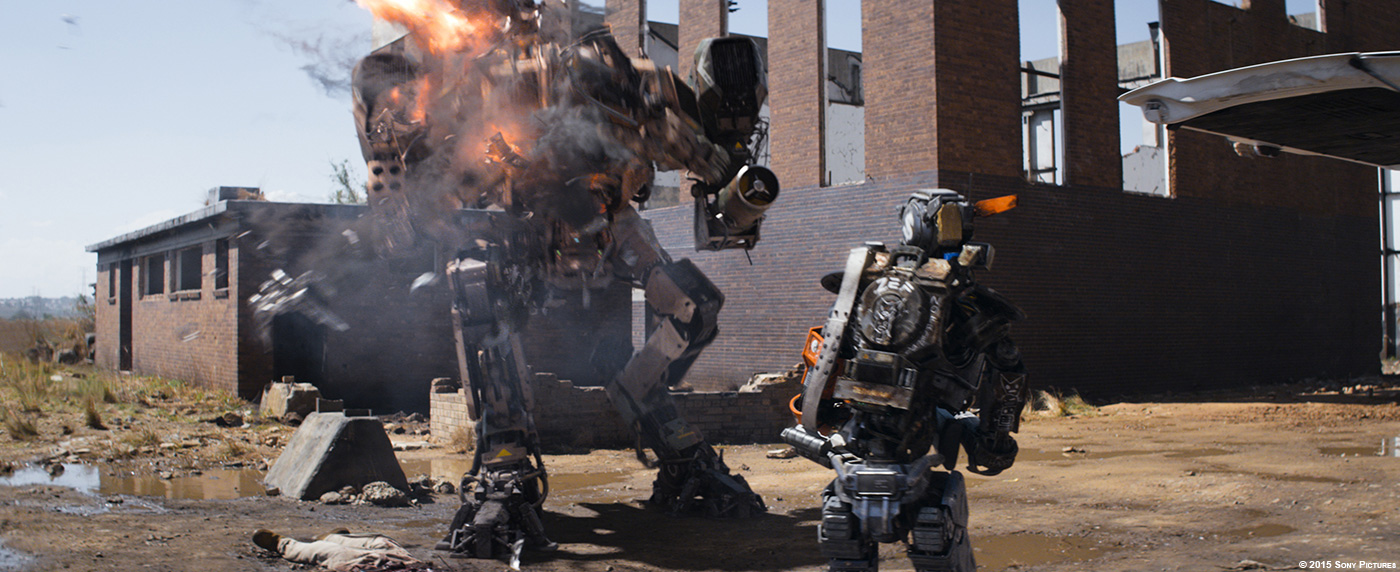

Obviously there were also key hero FX shots (like the hero slow-motion fire shots you mentioned), and much of that involved dynamically changing, bending, and fracturing geometry and heavy volume simulations. Some of these include the RPG to the chest and brick wall, Moose and Chappie being shot and torn to pieces, and obviously the final Moose destruction.

Something that is likely completely overlooked as FX work, and that actually really added to Chappie’s personality, was his chains. Simulating those approximately 5500 individual links of chain across roughly 450 shots also fell to Greg and his team. Greg personally oversaw the set-up of this system and it was slick, so slick in fact that the bulk of those 450 shots were handled by a single FX artist. There were other bespoke chain shots that required very shot-specific tailoring… the most obvious is when Ninja initially places the set of chains over Chappie’s head. This simulation was so complicated, with so much live action/CG interaction, that I think in the end it took close to 300 differently wedged simulations to get it to behave… all worth it based on audience reception to that shot though!

What was the main challenge with the Moose?

Well, beyond the usual challenges I guess it’s one of reference really. There are not a lot of 12-foot-tall walking tanks around to go out and film. But seriously, just finding that balance of reality, intimidation and scale was something we really worked on a lot across all the departments.

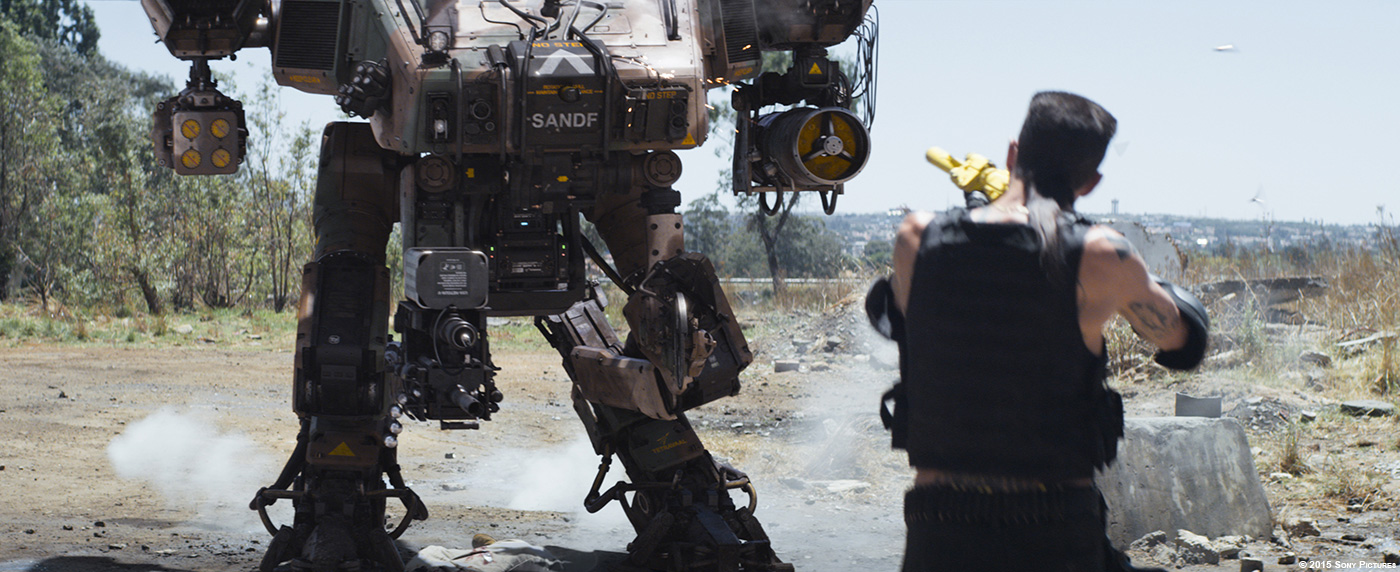

How was the presence of the Moose simulated on-set?

This was up to Max Poolman (special effects supervisor) and his team. They like making big explosions down there… haha. But seriously, we would try to add as much as we could practically to the shots. Squibs, blood, explosions, mortars, fire, wind machines, anything we could to help add authenticity to the footage. We also did a few small element shoots with a second or third unit, and finally we did a very full day of elements back in Vancouver, much later in the schedule, for very shot-specific needs.

In terms of “performing” Moose, well, we couldn’t very well have a guy in grey tights representing Moose on-set. We did have a large plywood cross-section of him made that we used occasionally just to give the actors a sense of volume. But in the end Neil Impey, one of our VFX crew members, would stand in with a 10-foot pole with a tennis ball on the end and block out the Moose’s position during rehearsal. The intention was to have him out of shot when we would roll, but the actors got so used to him being there that, at their request, we left him in… so the BG prep team had to paint him out of a lot of shots.

The Moose can fly. How does that affect his animation?

In terms of his animation when he is on the ground, it doesn’t affect it at all. However, there was a lot of back and forth spent working out how he would look when flying. How his legs would hang, how maneuverable he could be, jitter, etc. One thing we added to him in general, but which started with flying, was a sense of vibration that ran through him, not just shaking his parts but warping the paneling. The frequency and amplitude could be adjusted by the animators as needed.
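For readers who want a feel for what a vibration control with adjustable frequency and amplitude might amount to, here is a deliberately simple sketch. It is an assumption, not the production rig: a plain sine wave per panel with evenly spread phase offsets, and hypothetical function names.

```python
import math


def vibration_offset(time_s, frequency_hz, amplitude, phase=0.0):
    """Displacement of one part at a given time, as a simple sine wave."""
    return amplitude * math.sin(2.0 * math.pi * frequency_hz * time_s + phase)


def panel_offsets(time_s, frequency_hz, amplitude, panel_count):
    """Offsets for several panels, phase-shifted so they do not move in unison."""
    return [
        vibration_offset(time_s, frequency_hz, amplitude,
                         phase=2.0 * math.pi * index / panel_count)
        for index in range(panel_count)
    ]
```

Raising `frequency_hz` makes the shake faster and raising `amplitude` makes it larger, which are the two controls the answer above says the animators could adjust.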

How did you split the work amongst the various studios?

The work was primarily split between 4 facilities.

Image Engine: carried the bulk of the work (at over 1000 shots) and was responsible for all of the robot shots along with a few other things.

The Embassy: handled all of the POVs for Scouts, Moose and Chappie, and these also had to carry story-driving elements. They also dealt with some last-minute security door enhancements.

Ollin: had quite a large number of miscellaneous monitor comps, squibs, and other work.

Incessant Rain: handled mostly clean up work, wire removal, crew removal and so on.

Additional work was also done with Bot and Yannix for paint/roto and tracking respectively.

How did you collaborate with the various VFX supervisors?

Nothing too different here really… the usual phone, email, cineSync and, in the case of The Embassy, who were literally a block from me, in person. One important thing to note here is that production hired an external VFX production team (separate from Image Engine), The Creative Cartel. Dione Wood, the VFX producer on the production side, would wrangle these other vendors for me, making sure I got the material and coordinating the reviews, etc. For Ollin reviews we used their “Joust” software, which hooks into/acts like cineSync.

What was the biggest challenge on this project and how did you achieve it?

Chappie… he was the focus for us all. There was no one single thing that was going to make him work… and it wasn’t just about making something CG fit into the live action plate. Sure, he had to look real, but the real trick was getting past that and to the place where the audience would hopefully totally forget about him as a digital effect and cross over to a place where they bought into his character and his emotion and got caught up in what he was feeling. That was the trick we were pursuing.

And like I said, there was no one thing that achieved it. From the fantastic concept art to the incredibly focused efforts of the asset team and practical builds from Weta Workshop, the soul of Chappie given to us by Sharlto’s performance, the on-set crews and camera department, Neill Blomkamp’s direction, our layout and BG prep teams, phenomenal work from the animators, and the scrutiny of detail in the lighting and compositing departments.

Chappie is a sum of his parts.

Are there any invisible shots you want to reveal to us?

Not really, I mean sure there were invisible paint-outs, monitor comps, etc… but the primary work always involved the robots, so in that sense it’s sorta obvious. I have been asked a lot if we used the practical builds for some of the extreme close-ups and such, but no… every single shot of the robots doing any sort of performance at all was entirely digital. There are a couple of all-CG shots in there as well due to not having the right plate photography, but those are one-offs and not what you might think, so I will leave it for people to see if they can find them 😉

Was there a shot or a sequence that prevented you from sleeping?

Not really… there were a couple of shots that were down to the wire and I would often push on things right up until the last moment… but I don’t think I ever lost confidence in our ability to come through.

What do you keep from this experience?

I probably say this in every interview I have done with you… it’s the people, the relationships. But this one was different… it was more than a team on this one, it really was a family. Even on-set people would comment on the camaraderie of family… it was a very special project to be a part of.

I would also say that this show really reaffirmed just how important having a plan up front is. That’s an obvious statement to make, but you might be surprised how often it’s not the case in film production. Spending and investing that real hard time up front will always make things smoother later down the pipe… what we achieved on CHAPPIE is certainly a display of that.

Finally I would like to echo something I believe Phil Tippett said, we need to stop always looking for “what’s wrong with a shot” and shift our focus to looking for “how to make it better”… there is a difference.

How long have you worked on this film?

Just over 2 years

How many shots have you done?

A little over 1300 total, about 1000 of those being Chappie.

What was the size of your team?

I can’t speak to every facility but at Image Engine roughly between 150-200 including all production and support staff.

What is your next project?

I can’t talk about it yet.

A big thanks for your time.

// WANT TO KNOW MORE?

– Image Engine: Dedicated page about CHAPPIE on Image Engine website.

© Vincent Frei – The Art of VFX – 2015