Grady Cofer has been working in VFX for over 15 years. As a Flame artist at Digiscope, he worked on many projects, such as VOLCANO, TITANIC and GODZILLA. He then joined ILM and worked on films like the PIRATES OF THE CARIBBEAN trilogy, STAR WARS EPISODE II and III, as well as STAR TREK and AVATAR.

What is your background?

I have been working in the VFX industry for 15 years. My passion for filmmaking began when I saw STAR WARS as a young boy. Not only was I swept away by the imaginative story and fantastic imagery, but I became fascinated by the mechanics of how such imagery could be created. This fascination stuck with me through the years, as I gravitated towards anything having to do with computers and graphic design. I dabbled in various 3D applications, but my VFX career ultimately began when I became a Flame artist, compositing shots for movies like TITANIC and GODZILLA. I then joined Industrial Light & Magic, and worked on STAR WARS EPISODE 2 and EPISODE 3, THE PIRATES OF THE CARIBBEAN TRILOGY, STAR TREK, AVATAR and many others.

How was the collaboration with director Peter Berg?

I first heard that Peter Berg was planning to adapt BATTLESHIP for the screen back in 2009. I went to his office in Los Angeles to meet with him – intrigued but skeptical. When he walked in, he picked up a chair, set it down in the middle of the room, and proceeded to pitch the first thirty minutes of the movie. And I was hooked. That was the beginning of a three-year collaboration.

We knew that the scope of VFX in BATTLESHIP was going to be massive, and that a great deal of the movie was going to be created in post-production. My mission was to include Pete in every stage of the process, and to guide him through the long gestation times that accompany complicated FX work.

We at ILM were able to make many contributions to the film, from designing creatures and weapons to pitching story ideas, and Pete was always receptive. But the main vision was all Peter Berg. He is a creative madman — the ideas keep coming. The best thing I could do as his VFX supervisor was to listen to him, and then try to come up with new and interesting ways to bring his ideas to life.

What was his approach to the VFX?

Pete was cautious at first. Early in preproduction, on a scout of the battleship USS Missouri, he said he wanted to have a VFX meeting at the end of the day. We met dockside, with the Missouri looming beside us. He pointed at the ship and said: “That’s real. I get that. I feel that. Your effects have to be just as real and powerful as that.”

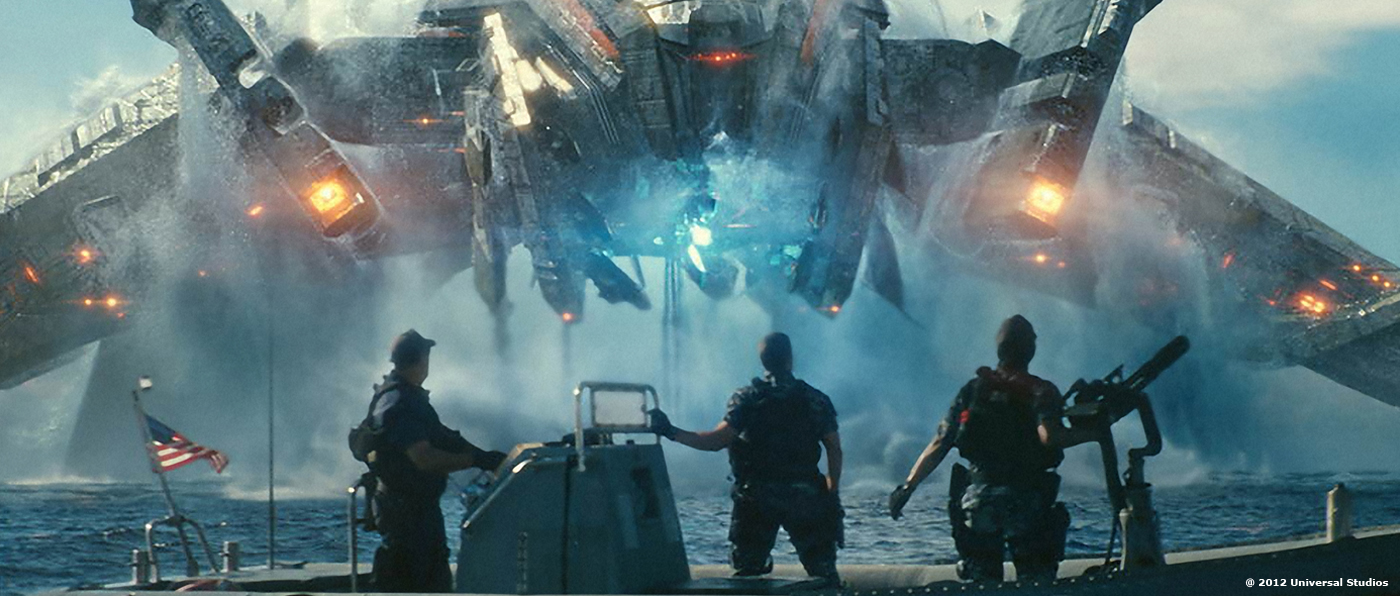

BATTLESHIP is a mashup: one part classic naval warfare film, two parts blockbuster action/sci-fi. And this pairing of the familiar and the fantastic helped define our approach to the VFX, as we constantly strove to ground the sci-fi elements in reality, to make our work as “real and powerful” as it could be.

How did you split the work between VFX Supervisor Pablo Helman and yourself?

On location, I worked on first unit and Pablo worked on second. Back at ILM, Pablo and I divided the sequences categorically. For the most part, I supervised the water work and battle sequences, while Pablo oversaw the creature work, although there was some crossover.

How did you recreate the U.S. Navy and Japanese Naval ships?

Authenticity was Pete’s unequivocal mandate. One important aspect of that was filming on the open ocean. He wanted to capture actual naval ships at sea, and not just for visual effects reference, but to put real Navy Destroyers in this movie. Real aircraft carriers.

During the 2010 RIMPAC maritime exercises, we had unprecedented access to the fleet of gathered vessels – filming from both a helicopter and a camera boat. I personally had the opportunity to embed with a camera crew on an Arleigh Burke class destroyer. On board, I filmed a number of ocean and ship plates, and captured live-action reference of firing weapons.

The Navy gave us access to a number of Destroyers, providing ILM’s modelers and painters the reference necessary to recreate the ships in great detail. The USS Missouri was comprehensively LIDAR scanned while it sat in dry-dock. The resulting point-cloud data captured precise imperfections, including the dents in the hull from a kamikaze attack.

Can you tell us more about the Hong Kong sequence?

During the invasion, one alien ship crashes down into Hong Kong, tearing through the Bank of China, and splashing into the channel. The sequence was carefully planned and prevized. Our film crew shot scenes in the crowded streets and on the Star Ferry, while an aerial unit captured plates of surrounding buildings and the Buddha statue.

Back in Los Angeles, production designer Neil Spisak created an interior greenscreen set of the office space. Special effects coordinator Burt Dalton devised a clever rig for ratcheting the desks and chairs across the room.

Did the falling tower in TRANSFORMERS 3 help you with the one in Hong Kong?

I enlisted the talented team at Scanline LA to execute the Hong Kong sequence. We concentrated on differentiating materials (metal, concrete, glass), and varying the physics of the destruction based on the characteristics of each material. Stephan Trojansky and his team added a number of creative details to their simulations (notice the trees breaking through the atrium windows as the bank tower falls towards us).

How did you design and create the huge force shield?

The weather dome was an important narrative device to isolate the Navy and alien ships into a three-on-three battle (and thus pay homage to the board game). Its design began in pre-production, with production designer Neil Spisak. His illustrators created concepts of a force field perimeter.

ILM’s art director, Aaron McBride, painted a progression of reference frames, representing the creation of the dome. Then Scanline LA created the effect, using fluid simulations to make the barrier organic.

The aliens launch intelligent, destructive spheres at Hawaii that lay waste to everything in their path. Can you tell us more about their rigging and animation challenges?

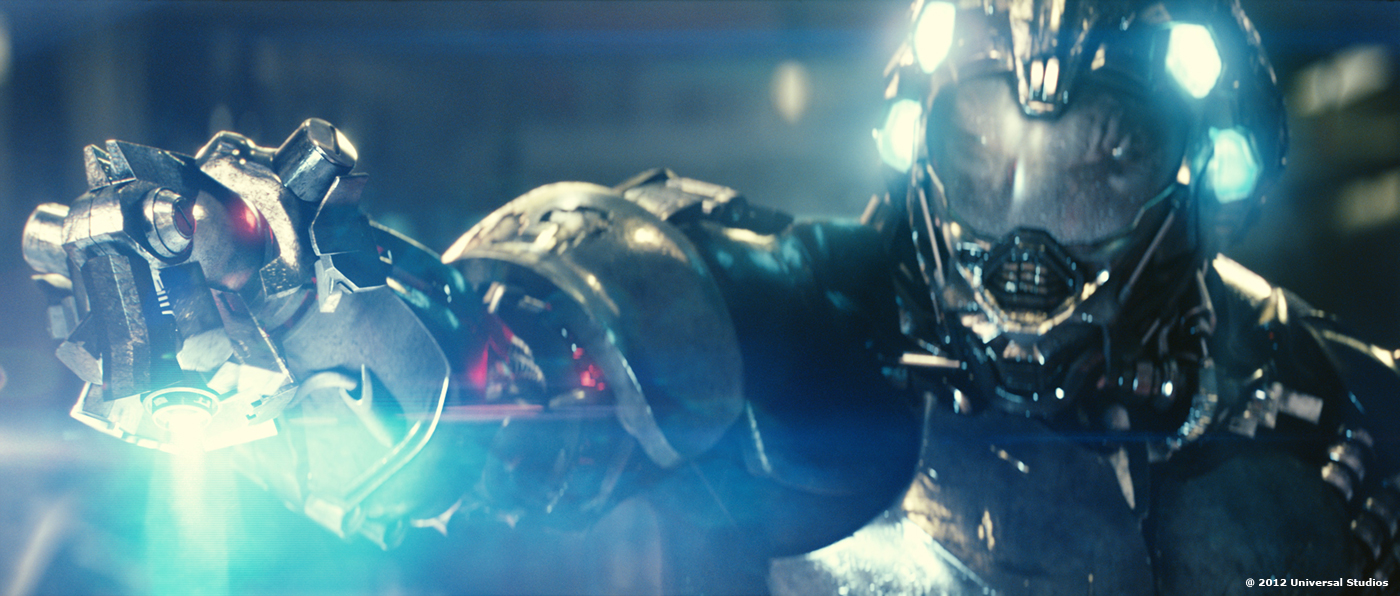

Pete had conceived of the Shredders very early on – unstoppable weapons that can be programmed to take out specific targets. Design-wise, they are like a series of chainsaw blades wrapped around a sphere. Pete wanted them to exhibit an incredible amount of speed and energy. The challenge was to imbue them with a bit of character. The riggers provided controls to telescope the shape out and in. Then each individual tooth could animate outwards to create more menacing silhouettes.

How were the various big environments, such as the military base or the freeway, created?

The helicopter-shredding sequence was filmed on location at the military base in Kaneohe Bay. Second unit had access to one helicopter, which was replicated. The entire environment was then photomodeled and recreated digitally for some of the virtual camera shots.

The freeway was first shot on location in Hawaii. Then a matching section of it was rebuilt in Baton Rouge, at Celtic Studios. The greenscreen set-piece was constructed to be shredded from one side to the other, with various cars being ratcheted in the air along the way.

Can you tell us more about the shooting process and the benefits of using ILM’s grey suits?

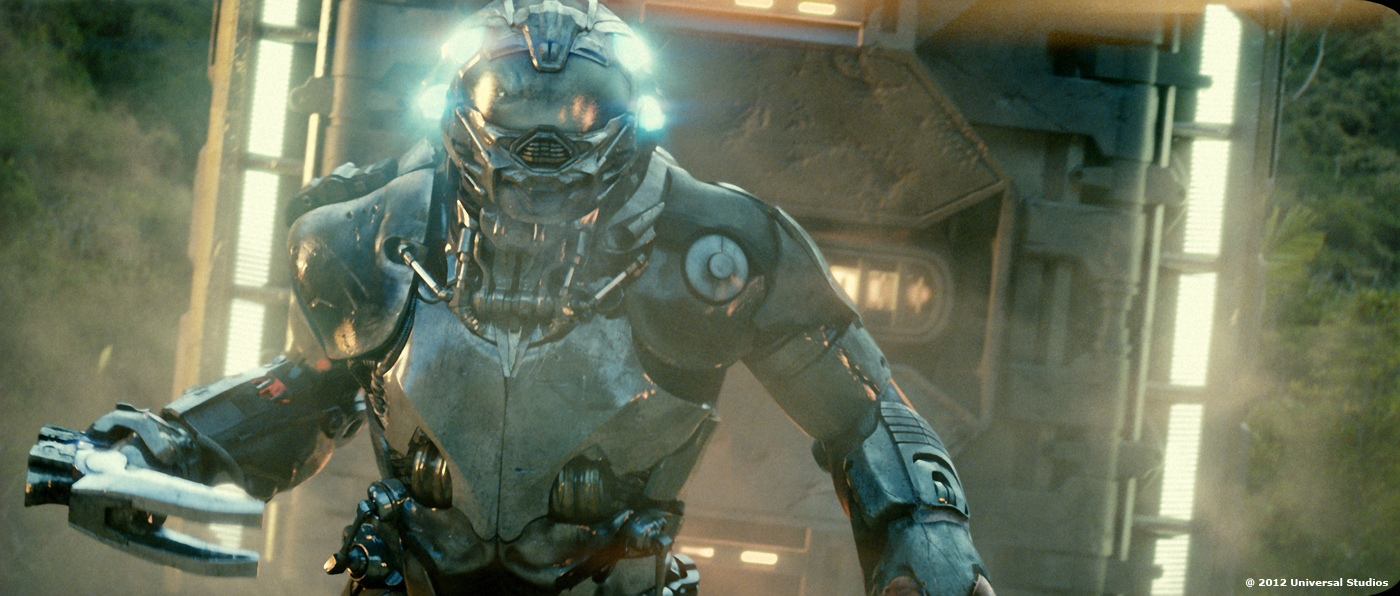

I believe that some of the most effective motion capture can happen during principal photography, on location, with all of the filmmaking ingredients: the director, the DP, the lighting, everything. The suits are part of ILM’s Imocap system, our patented on-set tracking system for this kind of performance capture.

How did you manage the difficult task of tracking?

For this show we were able to streamline the process by recording data from set using a single HD witness camera offset from the motion picture camera. Combined with the production plates, the witness camera footage, and the other data the system captures during the performance, Imocap provides very accurate 3D spatial data.

How did you collaborate with the previz teams?

BATTLESHIP made extensive use of both previs and postvis. Two companies, Halon and The Third Floor, provided Peter Berg with impressively quick scene visualizations, allowing him to investigate creative ideas. For planning such a complex film shoot, with its plethora of complicated action set-pieces, pre-visualization was mandatory.

As ILM developed the assets, we would supply the previz companies with models and textures. And likewise they would send camera animation back to ILM as a starting point for some shots. They also supplied technical camera data prior to the shoot, to help inform the capture of VFX plates.

Can you tell us more about the design of the various alien ships and armor?

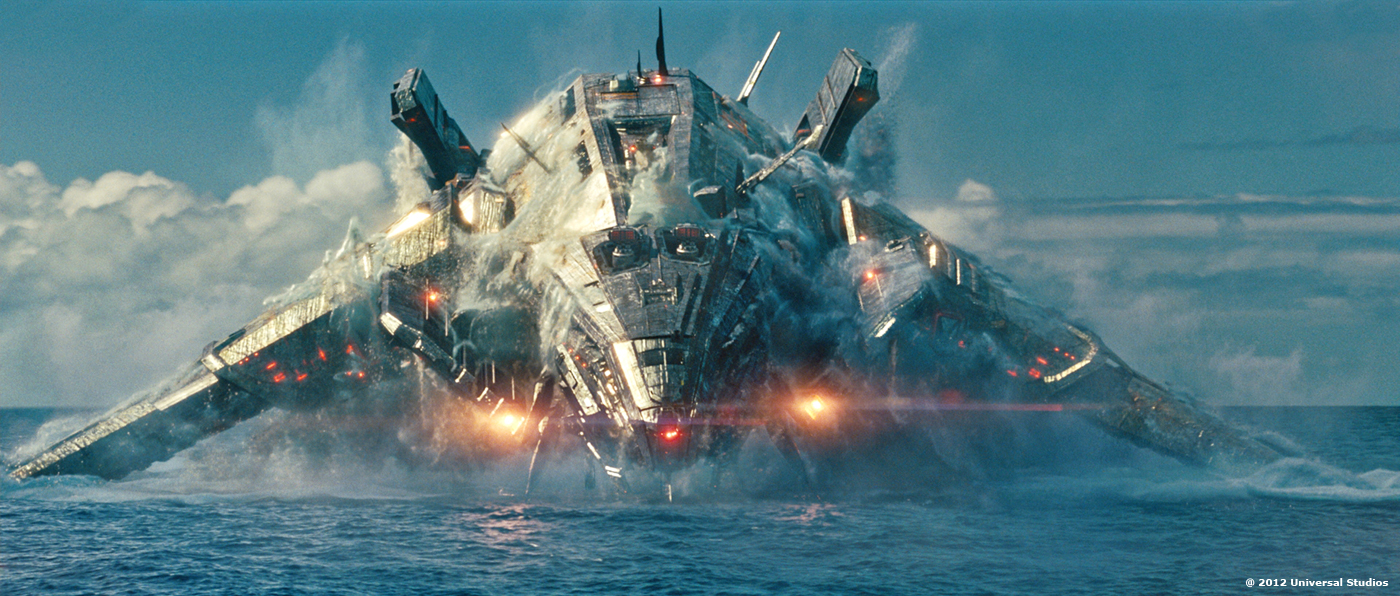

Production Designer Neil Spisak and Art Director Aaron Haye led a group of illustrators, generating pages and pages of concept art. The alien ships, called Stingers, were inspired by water bugs, which have the ability to stand and maneuver on top of a water surface. It was crucial to the Director that the alien technology feel practical, instead of merely ornamental. And for everything, Pete wanted a sense of age, of history – so when we encounter this alien race, the tools, the armor, and especially the ships feel used and worn.

Back at ILM, we created different silhouettes for each Stinger, varying aspects of their weaponry, defenses, and propulsion. And we customized each ship with its own color and lighting. We noticed how our own Navy ships tend to be simplistic below, along the hull, and more complex on the top surfaces, with clusters of towers and radars and antennae. So for the alien ships we inverted that ratio, simplifying the top surfaces, and then clustering detail — hoses, ports, cargo doors — onto the underside.

Another feature of the ships is their “intelligent surface”. We hypothesized that the alien technology allowed for data and energy to travel along the outer surfaces of their ships. This helped bring the ships to life.

Have you developed new tools for the water?

BATTLESHIP presented a host of CG water challenges. Not only do the alien ships breach up out of the ocean and leap around the ocean surface, but they are designed to constantly recycle water, pulling fluid up via hoses and then cascading it back out through water ports. This constant flow of water becomes a major component of the Stinger’s character. Further, since many of the ships get sunk, the destruction had to be coupled with our water simulations, so that fractured pieces of an exploding ship would splash down into the surrounding CG water.

It became clear early on that we were going to have to take it to the next level. So, in 2010 we started the ‘Battleship Water Project’ — and over the course of a year we reengineered how we tackle large-scale fluid simulation and rendering at ILM. Considering our system at the time had been honored with a Sci-Tech Award from the Academy just a couple of years earlier, we didn’t make the decision lightly.

Our goal was to fully represent the lifespan of a water droplet. So if we are recreating a cascade or waterfall, the water begins as a simmed mesh, with all of the appropriate collisions as it bounces along various surfaces. And then the streams begin to break up into smaller clusters, and then into tiny droplets, and finally into mist. And along that evolution from dense water to mist, the particles become progressively more influenced by air fields.

The movie features a large number of explosions. How did you create these, and were they full CG or did you use some real elements?

For explosive ship destruction, we watched hours of naval war footage, and collected videos of Navy sink exercises, where decommissioned ships are used for target practice. Our research indicated how diverse practical explosions and smoke could be. We strove to emulate that diversity in our FX work, layering fast, popping explosions with slower gas burns; mixing pyroclastic black smoke with diffuse white wisps. We relied heavily on ILM’s proprietary Plume high-speed simulation and rendering tool for generating these effects, and employed our new Cell method for combining multiple Plume simulations into a single volume.

How did you create the impressive explosion (with its shockwave) shot inside the boat?

When a Regent peg lands on the deck of a Destroyer and detonates, its energy travels downward and through the corridors of the ship. During preproduction, Pete challenged us to find an interesting sci-fi spin for this shot. We theorized that a peg weapon was under such extreme pressure that its inflationary blast could create a zero-gravity bubble, pushing and warping everything as it expanded. The in-and-out motion came from referencing underwater explosions, which tend to collapse inwards from extreme pressure.

To achieve the effect, we designed a vertical corridor, where the far end of the hallway rested on the floor of a soundstage, and the rest of the hallway rose up to the stage’s ceiling. Three stunt men, in Navy uniforms, were pulled quickly on wires up past the camera. We enhanced the shot with CG energy and debris.

The climax features a super long continuous shot. Can you tell us more about its design and creation?

There are a number of complex scenes in BATTLESHIP, but the biggest, most complicated VFX shot in the movie is one we nicknamed the “You Sunk My Battleship” shot. We planned the convoluted film shoot over the course of a year. In pre-production, we designed a set-piece representing the middeck of a sinking Destroyer. It was constructed on a floating barge anchored off the coast of Hawaii. The shot follows the journey of the movie’s heroes, Hopper and Nagata, as they climb to the stern of a sinking ship, while about fifty sailors jump off into the ocean. The resulting shot, lasting almost three minutes, is one of the most complex in ILM’s history.

There are other VFX vendors on this show. How did you distribute the work among them?

Image Engine worked on some of the Thug sequences. Scanline LA destroyed Hong Kong, and provided the weather dome and additional shots at sea. And The Embassy worked on many Shredder sequences.

What was the biggest challenge on this project and how did you overcome it?

When ILM began this project, we realized that with the current state of our toolset, we would never be able to simulate and render all of the water scenes; there simply wasn’t enough time. So it was crucial that the Battleship Water Project provide some game-changing technologies. One of these turned out to be multi-threading: simulations that once took four days could now be turned around in hours.

How long have you worked on this film?

Three years.

How many shots have you done?

Over a thousand.

What was the size of your team?

Roughly 350 spread across a number of facilities.

A big thanks for your time.

© Vincent Frei – The Art of VFX – 2012