Richard Baker began his career in visual effects as a compositing artist. He worked on projects like THE HITCHHIKER’S GUIDE TO THE GALAXY, SUNSHINE, ANGELS & DEMONS and CLASH OF THE TITANS. He then became interested in stereoscopy and joined Prime Focus World, where he has overseen the conversion of movies like FRANKENWEENIE, WORLD WAR Z and GRAVITY.

What is your background?

I’ve worked in VFX for many years – initially as a compositor at Framestore and Cinesite and then as a compositing supervisor at MPC in London. When the opportunity came to help set up the stereo conversion division of Prime Focus World in London, the challenge of setting up something new, and working directly with the studios and directors, was appealing. Since joining I’ve supervised PFW’s conversion work on GRAVITY, WORLD WAR Z, HARRY POTTER AND THE DEATHLY HALLOWS, FRANKENWEENIE and many more.

How did you get involved on this show?

Very early on, PFW were approached about getting involved on EDGE OF TOMORROW for Warner Bros. Doug Liman came into the studio and I showed him some examples of our work. I’d read the script by that point, and it’s quite a unique story, so it’s always hard to get something exactly as the director imagines it’s going to be, but it was good to show him a representation of our work – outdoors, indoors and complex VFX sequences.

How was the collaboration with director Doug Liman?

The collaboration with Doug was great. We were the exclusive conversion partner on the movie, so we handled every shot of the film, working closely with Doug and show stereo supervisor Chris Parks.

What was his approach to the conversion?

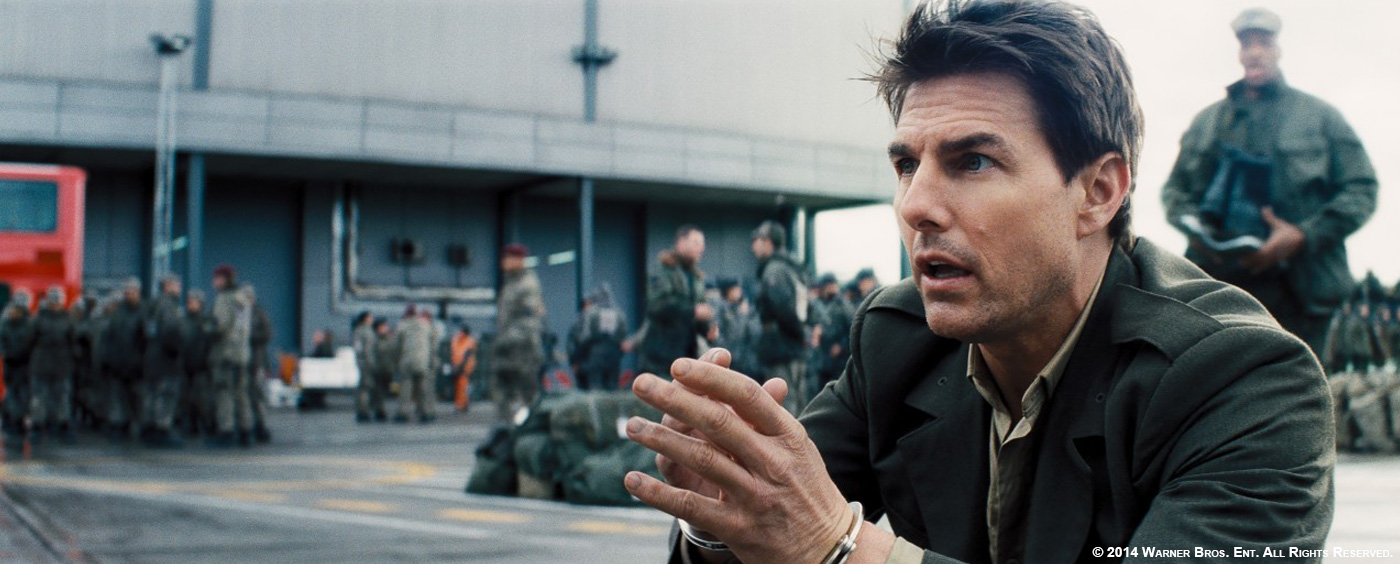

For him it was all about looking real. That was his key line – I think for him as a director anyway, but more so on EDGE OF TOMORROW. It shows in the mech-suit design – it’s not CG, it’s very much designed and practical and he’s very keen to have that come across in his movies. With Tom doing all his own stunts it fits together very well. He doesn’t want anything that is going to distract from what you are seeing, and the 3D needs to have an impact. For Doug it is very much a part of the package of this film, along with the action, the VFX and everything else.

How did you work with the VFX studios on asset sharing?

Depending on where they were in their deliveries, we received elements from the VFX studios for some of the scenes in the movie. Cinesite in London and MPC in Vancouver were particularly helpful in sharing elements for the various scenes they worked on.

Did you receive specific indications for the stereo?

The brief was accuracy, realism, and to make sure Tom looks great in stereo. Chris Parks, who comes from a native stereography background, was also keen to ensure we got as much detail as possible into characters and environments – especially highly sculpted and accurate faces. With the new tech we have developed in London, we were able to help Chris present stereo to Doug and the studio at a quality that hasn’t been seen before in a live action film.

Can you explain step by step the geometry mapping techniques?

We use cyberscans of our main characters’ heads to ensure accuracy and consistency of depth for their faces. With a star like Tom Cruise, and this being his first 3D film, this is an area that you have to get right! We did over 900 shots with head geo – it was only the shots where the characters are quite far away from camera that it was not necessary to use it. Tom looks fantastic in stereo in this movie; when I was in the DI with Chris Parks we both looked at each other when we saw one shot of Tom – because it stands out as being a fantastic shot of Tom in stereo. He’s kind of just lingering and looking at the camera for a moment, and it’s super clean, and you wouldn’t be able to get that just through normal sculpting. Our resolution and polys are quite high for all the close-ups on characters, and we animate mouths and features. This really translates well on the big screen. When I was at Warner Bros for screenings a few months ago, Chris DeFaria’s comment was how fantastic Tom’s features looked, and that’s one of the key areas for me, which is why I have been so behind pushing the use of head geo as a company on all our projects.

Can you tell us more about the stereo camera generation tool?

We had identified a requirement to incorporate linear CG assets into our non-linear converted sequences on GRAVITY. In order to achieve this, we needed a way to automatically create a virtual stereo camera pair that would render CG assets with exactly the right amount of depth for a given slice of depth in the scene. This virtual rig had to be dynamic to allow for changes to stereo values over the duration of the scene and according to the object’s movement through the scene, within parameters prescribed by the stereography team. Our R&D team came up with a plug-in that we call ‘Auto Stereo Camera Generator’. This tool automatically generates stereo camera pairs from hand-sculpted disparity maps to produce a virtual rig that will work in any CG or compositing environment. This allows us to ‘passback’ cameras to VFX vendors, so that they can render CG assets that will sit perfectly in our converted scenes.
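To make the idea of deriving a camera pair from disparity concrete, here is a minimal sketch. It is not PFW’s ‘Auto Stereo Camera Generator’, whose internals are not described in this interview. It assumes a standard off-axis (shifted-sensor) stereo rig, where the disparity in pixels of a point at depth Z is focal_px × interaxial × (1/convergence − 1/Z), and it fits the interaxial and convergence distance from two (depth, disparity) samples taken at the near and far ends of a depth slice. The names `StereoRig`, `disparity_px` and `fit_rig` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class StereoRig:
    interaxial: float   # separation between the two cameras, in scene units
    convergence: float  # distance to the zero-parallax plane (math.inf if parallel)


def disparity_px(rig: StereoRig, focal_px: float, depth: float) -> float:
    """Disparity in pixels of a point at `depth` for an off-axis rig.

    Assumed sign convention: negative in front of the convergence plane,
    positive behind it.
    """
    return focal_px * rig.interaxial * (1.0 / rig.convergence - 1.0 / depth)


def fit_rig(focal_px: float, near: tuple, far: tuple) -> StereoRig:
    """Solve for the rig that reproduces two (depth, disparity_px) samples.

    `near` and `far` would be read from the sculpted disparity map at the
    two ends of the depth slice the CG asset has to sit in.
    """
    (z1, d1), (z2, d2) = near, far
    if z1 == z2:
        raise ValueError("the two samples must be at different depths")
    if d1 == d2:
        raise ValueError("a flat slice has no depth; interaxial would be zero")

    # Subtracting the two disparity equations eliminates the convergence term.
    interaxial = (d2 - d1) / (focal_px * (1.0 / z1 - 1.0 / z2))

    # Substitute back into the first equation to recover 1 / convergence.
    inv_convergence = d1 / (focal_px * interaxial) + 1.0 / z1
    if inv_convergence < 0.0:
        raise ValueError("samples imply a convergence plane behind the camera")
    convergence = math.inf if inv_convergence == 0.0 else 1.0 / inv_convergence

    return StereoRig(interaxial=interaxial, convergence=convergence)
```

Calling `fit_rig` once per frame with that frame’s samples would give an animated rig, which is one simple way to follow stereo values that change over the duration of a shot. A production tool would presumably fit against many samples of the disparity map rather than two.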

How did you manage the challenging elements such as the holograms?

We had a lot of interaction with Nvizible on the hologram scene – their VFX Supervisor Matt Kasmir is a friend of mine, so a good relationship was already established. We used the stereo camera passback process that we had developed for GRAVITY – took the plate, converted it, created a camera and passed that back to Nvizible. They ran their CG holograms through the new eye and passed back a right eye of layers for the CG holograms, which we composited here at PFW to finish the shots. It meant they didn’t have to go through the in-house stereo approval process, they just had to render it, run it through the grading and glows and everything, break out the elements that had already been set up for the mono version, and pass it back to us. The process worked really well.

Can you tell us more about the creation of particles for FX like explosions?

We added FX and particles to a number of shots, whether it was rain, debris or dust. There are a lot of explosions in EDGE OF TOMORROW and we modelled, lit and comped in debris flying towards camera for some really nice 3D moments. This is a real advancement in conversion and means shots don’t need to go back to the VFX house for any additions.

For the beach sequence especially, there were some things that were difficult to get in conversion – mainly foreground elements that have separation and need a clean look to them – so we were able to get that through our particle teams adding splashes or layers of foreground mist. On the beach there are lots of explosions, sand falling in from out of frame – so there were a lot of shots that we put layers of smoke, dust and debris in, to create this extra foreground with cleaner separation.

Another moment we added particles for was when the helicopter explodes and crashes into the barn. We built and animated parts of the barn, the helicopter exploding towards camera, and a car coming through a brick wall. Not necessarily gimmicky moments, but adding to the feel of the 3D. It’s quite difficult to get these looking exactly right in just conversion alone, so I think our process has really evolved from just being a conversion pipeline to now incorporating elements and geometry. Also having the particle and FX teams contributing to the way a shot looks in stereo allows us to deliver better-looking, higher quality stereo than anyone else.

How did you split the work amongst the various Prime Focus offices?

The work was split between our London facility and our Mumbai facility.

What was the biggest challenge on this project and how did you achieve it?

I think the bunker sequence, when we did the holograms. I really enjoyed that because it was a challenging software development / creative approach and the end result looks great.

Was there a shot or a sequence that prevented you from sleeping?

Not really… the only thing that prevents me sleeping is running a show across 2-3 time zones and having dailies at 3am!

What do you keep from this experience?

EDGE OF TOMORROW represents an evolution of our conversion process – and a formalisation of our R&D and tool development – to ensure that we create the best possible stereo. On WORLD WAR Z we concentrated on head geo to ensure that Brad Pitt looked perfect in stereo. We finessed these techniques on GRAVITY, developing the use of head geo further and exploring the use of LIDAR to accurately build the capsule sets in depth. What we are seeing on EDGE OF TOMORROW is the culmination of years of R&D and practical stereo experience, advancing the use of LIDAR, cyber scans and VFX elements further than ever before. We use the geometry to create a consistent starting point that we can then creatively manipulate, giving the filmmaker unlimited creative control over the stereo.

What is your next project?

We’re currently hard at work on TRANSFORMERS: AGE OF EXTINCTION and SIN CITY: A DAME TO KILL FOR – and a superhero movie that we can’t mention yet…

What are the four movies that gave you the passion for cinema?

JASON AND THE ARGONAUTS, TRON (1982), BACK TO THE FUTURE and POINT BREAK.

A big thanks for your time.

// WANT TO KNOW MORE?

– Prime Focus World: Dedicated page about EDGE OF TOMORROW on Prime Focus World website.


© Vincent Frei – The Art of VFX – 2014