Matthew Bramante started his career in visual effects in 2007 at Digital Domain. He has worked on many shows such as Real Steel, Iron Man 3, Lucy in the Sky and The Right Stuff.

Rob Price has been working in visual effects for over 10 years. He joined the Zoic Studios team in 2010 and has worked on shows such as RED, Priest, Once Upon a Time and The Haunting of Bly Manor.

What is your background?

Matthew Bramante // I went to film school at New York University, with a focus on cinematography and a bit of digital animation, so organically my career path fell between those two things in Visual Effects. After graduating I started working at Digital Domain right out of school. Initially I was in the commercial Flame department, then I moved into the features comp department where I worked on films including Real Steel and Iron Man 3 among others. I bounced around between various feature and TV shops as a compositor, 2D Supervisor, and VFX Supervisor, eventually landing at Zoic as a VFX Supervisor. While there, I have supervised the film Lucy in the Sky and the TV series adaptation of The Right Stuff.

Rob Price // I have a background in fine art studying at the University of North Florida and a degree in computer animation from Full Sail University with a focus in digital compositing. I entered the VFX industry as a digital compositor on films such as Red, Priest, and Twilight. I then moved into episodic television and became a VFX Supervisor in the third season of Once Upon A Time. Most recently, I was VFX Supervisor on The Haunting of Bly Manor and Sweet Tooth.

How did you get involved on this show?

Matthew Bramante // The project came up as I was in the final days of The Right Stuff. Zoic had done the work on the pilot for Sweet Tooth and they were looking to send a supervisor to New Zealand for the shooting of the rest of the series. They set up a meeting for me with Showrunner and Director Jim Mickle that apparently went well, because about two weeks later, I boarded a plane to fly down to Auckland.

Rob Price // I was brought onto the project by Zoic at the tail end of shooting during the pilot, to supervise the work done in post.

How was the collaboration with the showrunner and the various directors?

Matthew Bramante // Fantastic, Jim and all the directors were great to work with. They were really open to exploring ideas and collaborating to find the best ways to achieve any given effect. Jim in particular has a great sense for what he wants, and is very trusting of the department heads to explore the best approach. Some of my favorite times on the show were working with the art or special effects department to figure out a particular approach to a scene.

Rob Price // Working with such a creative team was amazing. Jim, Beth, and all our directors had such a great vision for the show. Utilizing newer techniques such as LED screens for some environments meant that we needed to be involved in production much earlier than traditional VFX post work – we are not typically producing final pixel imagery while we are in prep. Being able to collaborate on scenes like the Jepperd/Last Men fight during the lightning storm was an amazing new experience. I cannot speak highly enough about working with Jim, Beth, and the entire post team for the show. It was a great collaboration.

What was your role on this show?

Matthew Bramante // I served as On-Location VFX Supervisor for Sweet Tooth. On this show we used LED walls to essentially do real-time, in-camera compositing which required a lot of time sensitive plate shoots and even some fast on-set compositing to get elements up on the wall by the time needed to point a camera at it. This was something that was on my plate during the course of the show. The real-time nature of the effects on this series made for a pretty busy show for myself and the whole New Zealand VFX team.

Rob Price // Visual Effects Supervisor, responsible for the overall creative and technical direction of final VFX work.

How did you organize the work with your VFX Producer?

Matthew Bramante // Because the show was all done by one house, Zoic, the VFX producer being Danica Tsang, there wasn’t the need to do the typical multi-vendor organization. Danica or Rob can better speak to how the show was organized through post.

Rob Price // Danica Tsang and I have collaborated since 2013 on numerous projects. Danica’s primary responsibilities focus on budget and scheduling, while I focus on technical process and final image approval. We have been doing this together for a long time and we are very much a team; everyone has a voice.

What are the main challenges with a post-apocalyptic series?

Matthew Bramante // This was an area where we in VFX worked closely with the Art Department. When it came to the city exteriors, there was always a bit of a dance we would do with the art directors, finding places to maximize the practical dressing in a given location. The goal of course was to use the dressing for our foregrounds and then use VFX to really sell the scope of any given shot by adding plant growth and destruction to the backgrounds and tall buildings.

One thing we were always looking to do was reinforce the idea that the world was being reclaimed by nature. So location-wise we were constantly looking for naturally overgrown parts of the city, so that when we added extra plant growth and destruction it would build upon what we had in the natural location.

Rob Price // We are extremely fortunate to have such great practical scenery to work with on this show, along with great on-set work across all departments. We had a lot of back and forth between departments to figure out what we could all bring to the table. For this story it was particularly important to find the threshold of where nature is taking back our world versus going too far where our world is completely gone. There is a balance there and I think we all nailed it.

What kind of references and influences did you receive to create this world?

Matthew Bramante // Nick Basset and the art department put together great look books for each location on each episode to mock up the level of destruction and plant overgrowth they were looking to achieve. Often, on a scout, the directors would take stills to plan out their shots and send them right to the art department to start planning their dressing needs. As part of that process, the art team would mock up the whole frame and then we would begin discussing what could be done practically versus digitally. It was a much more focused and specific way of handling the concepts: rather than referencing other films or art, we were actually referencing our locations and tailoring the goals of the VFX all the way back in the early stages of pre-production.

Rob Price // Our art department for the show has been phenomenal in their research and creation of look books for each episode. These had an amazing range of imagery that really set the tone for what we were going to be creating visually. We take cues from these for inspiration when designing things like the Animal Army Video Game or Daisy the Tiger.

Can you explain the environment work in detail?

Rob Price // There are several different time periods, events, and locations in Sweet Tooth that we created environments for. Firstly, the immediate panic of the pandemic in the pilot required lots of in-progress devastation to add to the chaotic nature of the event. This was mostly adding matte painting set extensions and compositing elements of burning buildings, smoke/atmospherics, and additional CG crowds and vehicles that we animated, lit, and rendered out of Maya and composited in Nuke.

After the world has settled, our focus turned to the aftermath: adding what was left behind from the chaos. You can see this most clearly in the scene where Aimee encounters the herd of elephants. The city set extension is all about how we have just abandoned our world. We began with plate photography and LIDAR scans of our Auckland city street location. Using Houdini, we procedurally built our cityscape, focusing on replicating the feel of new and old construction in modern Midwestern cities. One of the environmental challenges for us was making New Zealand feel like Colorado. Within the city we then scattered debris, cars, foliage, and simulated atmospheric fog. Doing this all in a single package allowed us to have more dynamic interaction with our CG elephants running through the scene.

Later we see nature reclaiming the world, and that brought our focus to breaking down and adding vegetation to structures. We developed tools inside of Houdini to grow vegetation more naturally. Being able to procedurally grow vines, flowers, and any plants we needed allowed us the creative flexibility to quickly iterate on environment work and add overgrowth, whether that was a city street, guard tower, or train bridge.

Our other challenge was recreating practically shot environments, so that we had more flexibility for some sequences. Most notable were the train sequences in episode six: these were a combination of all CG environment shots, practically shot blue screen elements/plates, set extensions, and CG train. We used our location shoot as reference and duplicated the terrain and plant life within Houdini and Maya. Some of this was for expanding scope and creating elements like tunnels and mountainsides, and some of this was to allow for crafting more dynamic camera moves that really make these sequences unique.

How did you manage the creation of the foliage and vegetation?

Rob Price // One of the most important aspects for me was getting a natural feel for foliage. Things like vine growth are the most challenging. In a lot of off-the-shelf vegetation packages, you can spawn and grow vines; however, they were not accurate enough without a lot of manual manipulation. We developed tools in Houdini so that we could procedurally grow vines over and around objects. This allowed us proper growth and branching, as well as object avoidance. As a result, we were able to control every aspect of how it grew. Over the series we expanded this tool for other vegetation like flowers and grass. We were able to use this process for expansive set extension work as well as covering practical set pieces. Our use of LIDAR on the show enabled us to have a 3D version of any set we needed in post.
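
To make the idea of procedural growth with branching and object avoidance concrete, here is a minimal Python sketch. It is not Zoic's Houdini tool: the function name `grow_vine`, the spherical obstacles, and every parameter value are assumptions chosen purely for illustration. The vine advances in small steps with some random wander, is pushed back onto the surface of any obstacle it would enter, and occasionally spawns a shorter side branch.

```python
import math
import random


def _normalize(v):
    """Return v scaled to unit length (falls back to +Z for a zero vector)."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        return (0.0, 0.0, 1.0)
    return tuple(c / length for c in v)


def grow_vine(start, direction, obstacles, steps=40, step_len=0.1,
              branch_prob=0.1, max_depth=2, rng=None, depth=0):
    """Grow a vine and return a list of branches (each a list of 3D points).

    obstacles is a list of (center, radius) spheres; these stand in for
    scanned set geometry in this hypothetical example. Overlapping
    obstacles are not handled.
    """
    if rng is None:
        rng = random.Random(0)
    points = [start]
    side_branches = []
    pos = start
    heading = _normalize(direction)
    for _ in range(steps):
        # Random wander so the growth does not look mechanical.
        jitter = tuple(rng.uniform(-0.3, 0.3) for _ in range(3))
        heading = _normalize(tuple(h + j for h, j in zip(heading, jitter)))
        candidate = tuple(p + step_len * h for p, h in zip(pos, heading))
        # Object avoidance: a step that lands inside a sphere is projected
        # back onto that sphere's surface, so the vine hugs the object.
        for center, radius in obstacles:
            offset = tuple(c - o for c, o in zip(candidate, center))
            if math.sqrt(sum(c * c for c in offset)) < radius:
                normal = _normalize(offset)
                candidate = tuple(o + radius * n
                                  for o, n in zip(center, normal))
        pos = candidate
        points.append(pos)
        # Branching: occasionally start a shorter child vine from here.
        if depth < max_depth and rng.random() < branch_prob:
            side_dir = tuple(h + rng.uniform(-1.0, 1.0) for h in heading)
            side_branches.extend(
                grow_vine(pos, side_dir, obstacles, steps // 2, step_len,
                          branch_prob, max_depth, rng, depth + 1))
    return [points] + side_branches


# Example: grow upward from the origin past a sphere centered above it.
vine = grow_vine((0.0, 0.0, 0.0), (0.0, 0.0, 1.0),
                 [((0.0, 0.0, 1.0), 0.5)], rng=random.Random(42))
```

Passing a seeded `random.Random` keeps a given growth repeatable, which matters when the same vine has to look identical from one iteration of a shot to the next.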

Which location was the most complicated to create and why?

Matthew Bramante // I’ll let Rob speak to this, but I suspect the Valley of Sorrows from 105. It was certainly the most complicated to figure out a shooting plan for. Considering we had an ext location for the cliff edge that we only had very limited access to, a partial stage build of the rope bridge on blue screen, a second location for the valley floor, and a digital valley environment that all needed to work together, it was tough to plan.

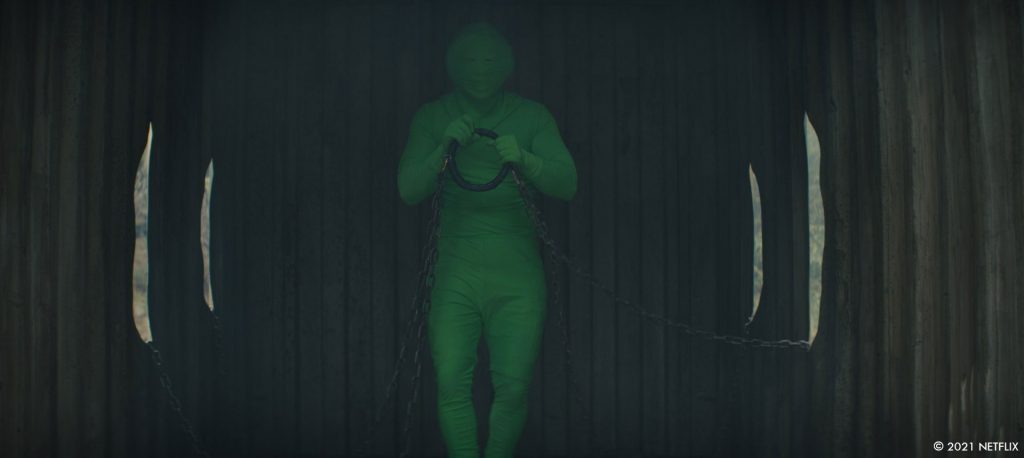

Rob Price // The Valley of Sorrows rope bridge scene in episode five was one of the more complex environments created for the show, and relied heavily on VFX to bring it to life. This had several elements to it: a small section of bridge was built and shot practically on a sound stage, and there was also a valley shoot on location. We needed to extend and duplicate both of those sets while covering the entire valley in millions of flowers. We digitally duplicated and extended the rope bridge several hundred feet, which required the bridge to be match moved and dynamically simulated in all of the shots to match the movement of the practical bridge from the actor action. We again LIDAR scanned and took extensive reference photography of the valley on location, duplicated it, and used our vegetation growth tools to cover the environment in the iconic purple flowers.

What was your approach to create the hybrids?

Matthew Bramante // Most of the hybrids are practical with digital sweetening: things like a digitally articulated monkey tail or a chameleon’s eyes were added to the practical work done by Stef Knight and the makeup team to really sell the effect. Beyond the normal hybrids, we had Bobby, the small toddler-size hybrid that began as an animatronic puppet. The puppet, built and puppeteered by the team at Fractured FX, had a huge amount of articulation, but ultimately received quite a bit of digital work ranging from a full CG version in some shots to face augmentation and warping to help with lip sync and mouth articulation, eye blinks, and facial expression.

Rob Price // The majority of the hybrids were practical special effects makeup, costume design, and puppeteering. We added some additional digital prosthetics like Lizard Boy’s chameleon eyes or Monkey Girl’s tail. We did a lot of movement in post for noses and ears to give them additional life; these were all composited elements we extracted, warped, or animated, and rebuilt from the plate elements. A lot of care was given to subtlety so that we were never distracting from the emotional impact, but adding to it.

A lot of our hybrid work was focused on Bobby, which was a practical puppet. We were responsible for adding layers of movement to his eyes, nose, cheeks, and lip sync to add to the final performance. We also created a completely CG digital double of Bobby for his more athletic moments. It was important to emulate the performance from the puppeteers in our CG double; we wanted Bobby to always feel like the same character, irrespective of whether he was practically filmed or digitally animated. We studied and referenced their performances to really sell the seamless work. Bobby also had several wardrobe changes, meaning we had to create five different versions of Bobby, all with fully dynamically simulated fur and clothing.

How did you work with the SFX and makeup teams for the hybrids?

Matthew Bramante // As with anything, it began with what was in the script. Some of the hybrids were specific, some were more generic. For the specific ones, Gus, Wendy, and Bobby for example, the Fractured FX team designed and built specific appliances or remote controlled elements (like Gus’ ears), and for the most part any digital augmentation on those was decided once there was an edit. As for the more generic hybrids, Stef and the makeup team would do Photoshop mockups, and then we would have a conversation about what was doable practically versus what would need digital help to achieve. We definitely had plans for more digital enhancements than what ultimately made it into the show, so there may be more to come in a potential future season.

Rob Price // Our primary role was a supporting one – adding layers of life on top of the practical SFX and makeup. Some elements not achievable with animatronics, such as Wendy’s ears and nose, we animated on top of the SFX makeup for specific beats or emotions. Gus’s ears needed much less help from us as the animatronics were able to take on most of the heavy lifting.

We created Baby Gus’s antler nubs and ears, and Baby Wendy’s nose and ears, as digital prosthetics that we match moved and animated onto our child actors. We started our digital sculpts based on the SFX makeup sculpts for Gus and Wendy when they were older, and ensured that they seamlessly matched as the children aged.

Bobby as a digital double required a lot to match the practical puppet we filmed with. As the team at Fractured FX created Bobby, they would send us the digital sculpts and scans along the way so that we could create our CG version to match. Building assets in tandem made the final result much more achievable, as many of the same underlying mechanics – rigging, muscle, skin, fur, and clothing – all build up in a similar way, whether it be done practically or digitally. The digital Bobby needed to match one to one with the practical version because of how the two intercut in the same scenes. Our digital asset, lighting, and compositing had to be very precise.

Can you explain their creation in detail, especially in their baby version?

Rob Price // There were two main scenarios when it came to the baby hybrids in the show. In the first case, we have digital prosthetics for Baby Gus’s antler nubs/ears, and Wendy’s nose/ears. This required duplicating and match moving the baby’s head movement, adding the digital elements for each, and then adding layers of animation, lighting, and compositing.

In the second case, it’s the opposite of what you might think. In the pilot, the iconic babies in the maternity ward shots are practical puppets. These were complicated puppets requiring up to four puppeteers each, and the shots required extensive plate reconstruction. To solve this, we digitally recreated the entire room, including the bassinets the babies are lying in. So, in many of these shots the only things in frame that aren’t VFX are the babies. No one would question it, and it’s one of my favorite elements from the pilot.

How did you handle the Gus ears and eyes?

Matthew Bramante // On the VFX side, these are all comp enhancements. We had played with the idea of doing something practically for Gus’ eye shine, but ultimately decided to shoot it clean to allow for maximum flexibility in post.

Gus’ ears are a remote controlled practical effect to start. Grant Lehmann, the puppeteer, would operate them remotely during each take, working to capture the specific reaction or emotion Gus was experiencing. From there, some scenes required specific timing or a specific action, and in those cases the team at Zoic would warp or replace the ears to do what the specific shot needed.

Rob Price // Gus’s ears are primarily remote-controlled animatronic ears filmed in camera. We help when we need specific beats to add a little more emotional emphasis on top. We achieve this in 2D by isolating the ears and adding animation and warping; then we recreate the plate behind the ears that is now revealed by the new animation. For the eyes, we referenced practical animal eye reflections; we found that we had to enhance them a little extra in order to give them the unsettling vibe that Jim was looking for. These were animated and composited all within Nuke.

The series is full of animals. Can you elaborate on their creation and animation?

Rob Price // The animals in the series were a lot of fun for us. We created everything from a doe, to a herd of elephants, Daisy the tiger, Gus’s protective stag, a herd of zebra, giraffes, and a few insects here and there. We always start with lots of reference and concepting, either from the art department or internally here at Zoic. There was always a lot of discussion with Jim and the directors about what we were really trying to achieve with the animals. They were always there for a reason; it’s never random. We then modeled, sculpted, textured, and shaded inside of Maya, ZBrush, and Substance. We used Yeti for the fur grooming and simulations, along with Ziva for our dynamic physics-based character simulations, which was important for a high level of detail as many of our animals had to perform and hold up extremely close to camera.

For several sequences, such as the elephants running through the city, we created detailed previz in pre-production using Unreal, and worked closely with Jim to plan out the entire sequence. This really helped once we got into post, because we already knew how we were going to be using our elephants in shots, and could then target what details would be important. Having the animals so close to camera meant that the primary focus of our animation team was to accurately reflect real-world kinematics.

How did you create and animate the beautiful vision told by Gus’ father?

Rob Price // We started extremely early concepting this during the pilot, creating detailed artwork and then animating previz for the sequence. This was one of the biggest conceptual challenges of the pilot. We simulated steam emitting from dozens of animated characters amidst atmospheric steam. It was a deceptively difficult task to be able to read these figures in this environment, because they were always changing and needed to stay dream-like, but still clearly tell our story. Playing with negative and positive space was key, with light shapes always changing or being obscured. Each shot was its own challenge to find the right balance.

Can you explain the creation of the train in detail?

Matthew Bramante // There are three categories of train shots: interiors, exteriors, and stunt shots when the gang jumps onto the train.

For the interiors we used LED walls in a variety of configurations to cover all the windows, doors, vents, and any other opening. Before we shot anything in the train set, we had a team go down to the South Island to get plates. First, we had to find the right stretch of road that would feel like a train track, either straight or with slight curvature, and empty. Empty was pretty important, as a car driving through a plate would kind of ruin it. Then, we would shoot what we called the “slices of the pie,” 8 angles that made up all the possible views we might need. For the most part, we would shoot these from the back of a truck with the camera on a Ronin head on a Black Arm to keep it stable. However, in certain places, like the area around where they jump onto the train, we could shoot with the drone. That was a great way to pick up the plates, but there were some limitations on the distance we could run the drone and thus the length of the plate.
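
As a rough illustration of how a set of “slices of the pie” could be indexed when choosing a plate for a given view, here is a small Python sketch. The interview only says there were 8 angles; the even 45-degree spacing, the convention that slice 0 faces the direction of travel, and the function names are all assumptions made for this example.

```python
def slice_for_heading(heading_deg, num_slices=8):
    """Return the index of the plate slice whose center is nearest a heading.

    Assumes (hypothetically) that slices are evenly spaced around 360
    degrees and that slice 0 is centered on 0 degrees, the direction of
    travel.
    """
    width = 360.0 / num_slices
    # Wrap the heading into [0, 360), shift by half a slice so each slice
    # owns the range centered on its own angle, then wrap the index.
    return int(((heading_deg % 360.0) + width / 2) // width) % num_slices


def slice_center(index, num_slices=8):
    """Return the center angle, in degrees, of a slice index."""
    return index * (360.0 / num_slices)


# Example: a window looking straight out the left side of the car
# (-90 degrees under this convention).
left_window_slice = slice_for_heading(-90)
```

With eight evenly spaced slices, any view is at most 22.5 degrees away from the center of the nearest plate under these assumptions.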

Once we had plates, we made selects as to which ones would play for which scenes. Then it was just a process of lining them up for each shot. Olivier Jean was the LED wall operator and handled playback of the material on the wall. Olivier and I would watch each setup and tweak the plates in real-time to suit the particular angle. We even needed to use the LED wall to act as an extension of the train as viewed out the door between cars. On the first morning of shooting with the train-car set, we shot a plate both with the door between cars open and closed, and then we comped that onto a traveling plate to be seen whenever someone opened a door to a car that wasn’t there, essentially faking the length of the practical train. This again needed to have special attention paid to things like the horizon line to make sure the angle worked for the particular shot, and we would adjust accordingly. In a few cases, we would put blue on the LED wall and shoot it clean with the knowledge that we needed to add the BG in post.

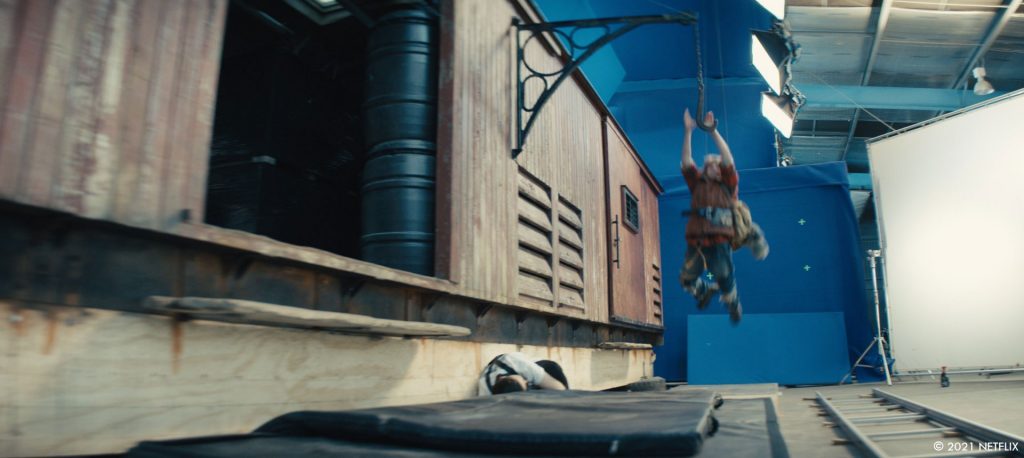

As for the stunt work, there’s also a combination of things happening. All the stunt performers for the wide shots are shot on location on the South Island; all the actors are shot on stage in front of LED walls while running on treadmills. In any wide shot where a train exterior is visible, it’s a CG train.

And finally, the exterior shots of the train: these are plates acquired around the South Island with a CG train comped into them, most notably the river crossing. This was an old train bridge in Luggate on the South Island. We took a small plate unit, much like our other LED wall plate unit but with a drone team, out to shoot a series of plates. POVs or views looking out the door of the train were shot in the same way as the other train LED plates. For the wides of the bridge itself, the drone was used to acquire those plates, with the train added later in post.

Rob Price // We began in prep creating previz in Unreal to plan out how the sequence would come together with Jim. For shots where the actors are inside the train and looking out at the landscape, we utilized LED screens with playback from drone footage that production shot in New Zealand. For those interiors and some close exterior shots, we had a couple of box cars built on stage. We duplicated these train cars using LIDAR scans and reference photography for use in wide establishers and set extension shots.

Our steam engine started as a LIDAR scan of a practical engine used on-set in episode three. For episode five, we added additional weathering, damage, and makeshift fixes to it in order to sell the idea that it needed more and more non-train parts to patch it up and keep it running during the apocalypse. A handful of shots, like when Gus jumps into the open box car, required a lot of different elements to all come together: we had a practical box car on a blue screen stage with our stunt performers swinging into the car; CG antlers added for safety reasons during stunts; CG train and undercarriage extensions; simulated smoke elements; and a full CG environment for traveling by camera. This was a complex shot that was well planned out and came together nicely.

Later in the series, there is a massive fireworks display. How did you create and animate it?

Matthew Bramante // I’ve always believed in the importance of doing as much practically as you can, even if it ultimately needs to be replaced, because it informs how the digital version should look on camera.

Keeping with that, much of the pyro on the ground is practical. When we first started planning the sequence, the Zoic previs team did a great visualization of the scene. While that was being worked on, Steve Yardly and the SFX team started building and testing pyro that could be set off next to the stunt performers. His team built a really awesome mix of fireball, pyro charges, and cold sparks to create a really chaotic moment.

Once the previs came in, it had a really interesting element of falling sparks; as soon as we saw it, we wanted to find a way to get the same practical effect. Once again, Steve and the SFX team had an answer. The one drawback with the falling sparks effect was that it was too hot to be anywhere near the performers. So for that we layered in falling sparks where it was safe to do so and shot elements in frames clean of the performers so we could add more to the scene later.

As for the fireworks in the sky, the large “star shells,” we knew those had to be digital. In fact the whole sky had to be digital, as this scene was shot on a stage. So once again, we set out to find some elements we could shoot to help. 50ft pyro charges were our answer. Again, we had the previs and some storyboards drawn up by Jim to guide us in the planning. We picked a few of the large moments when we were looking up. On a separate element day we lined up the shots out in an empty parking lot and set off large 50ft pyro charges in a variety of colors and styles. We also shot a handful of falling spark elements.

As for how that was all put together with digital fireworks, I’m sure Rob and the Zoic team can elaborate on that.

Rob Price // The fireworks sequence is another example where we began with previz inside of Unreal, working out the broad strokes and scale of the sequence. There were practical fireworks elements in the plates for us to build off of. We expanded on these by adding additional elements simulated in Houdini and composited inside of Nuke. We needed many of these to be shot specific and to interact with objects in frame. Utilizing LIDAR scans of our sets, we were able to recreate these environments for collisions and light interaction. Timing was key as many of the fireworks needed to be timed and animated to interact with stunt work in shot.

Which sequence or shot was the most challenging?

Rob Price // Some of the title shots were the most challenging. For example, the title shot of the first episode starts on a plate of Dr. Singh looking into the maternity ward. There is a hand-off from the practical camera to a digital camera so we can travel out through a sheet of glass, leave the room, and transition from 2D clouds on the walls to 3D clouds in the sky; there are then three different drone shots stitched together to create the somersault movement from the camera; we eventually stitched that into another crane shot down to Pubba and Gus. This shot had some of the most complex retimes, plate stitches, and 3D camera takeovers I have done, mostly because it looks deceptively simple as a final shot.

How was the collaboration between you?

Matthew Bramante // Great overall, Rob and the whole Zoic Vancouver team do great work.

Rob Price // Working with our New Zealand On/Offset supervisors Matt, Jacob, and Pania was fantastic. A show this size does not come together without a village pulling together and everyone working tirelessly to make it all happen. I can’t thank them enough for what we all created together for the VFX.

Was there something specific that gave you some really short nights?

Rob Price // We had some tough deadlines, but being involved in prep as well as post meant that we were able to anticipate the requirements for each episode. Some of the later episodes were compressed time-wise, but our team handled it amazingly.

What is your favorite shot or sequence?

Matthew Bramante // In episode 102, there is a shot where we show Aimee’s life during the early days of the pandemic, as a single time-lapse camera move. That was a crazy full day of shooting. Zoic had prevised the move, which we used to plan out the day/night and object movement/buildup, but ultimately on the day we had to improvise a bit.

First off, the whole thing was built as a single motion control shot. The plan originally was to do a sort of linear, straight-ahead stop-motion dressing of the scene. The shot would start as a shot of Aimee during the day in a clean and empty office, then progress through day and night changes, showing Aimee in different spots in the room as more and more detritus builds up as she lives in that one room for months. On the day we started by shooting a clean plate of the room, then started shooting Aimee’s particular sections. Because we had Aimee in there, we had to figure out the timing of the sun movements and plan them out through the shot. After all of Aimee and the cleans were done, it was clear there was no way we’d have time to do a straight-ahead stop-motion pass of dressing the room. So we settled into doing multiple different dressings of the room to give us maximum flexibility in post. 60+ passes later and we were done with the interior of the room.

Next we needed a solution for the city outside the window. There was a little part of Auckland that we selected that was generic enough to play as a sort of any-city, and there was a great spot on a nearby parking structure that gave us the perfect view of the city as you would see from up in Aimee’s office. So we sent a time-lapse photographer out there to capture a full day to night cycle. This acted as our basic exterior plate.

Rob Price // I am particularly fond of Daisy the tiger. We spent a lot of time developing the tiger asset and I am extremely happy with how she turned out.

What is your best memory on this show?

Matthew Bramante // All of it was pretty great; you can’t ask for a better place to shoot than New Zealand. From a locations perspective, everything is there, and really close together. You have mountains next to fields, next to ocean and beaches and prehistoric forests, and nowhere is that more the case than on the South Island. And the South Island shoot was definitely my favorite memory of the show.

We spent about a week and a half on the South Island between scouting and shooting. But I think the best part was shooting the train work in Kingston, about a 40-minute drive south of Queenstown. Mostly we were shooting stunt work and then plate work for the 106 opening and LED wall BG plates. The day started off looking like it was about to storm with heavy clouds and a thick fog bank, but by the time we got our first shot off it had turned into a beautiful day. The whole splinter crew along with the drone team that was doing our plate work was awesome, and it was a really great day.

Rob Price // On the post side, it was very interesting how we all came together during the pandemic and completed the entire project working remotely. It provided some new challenges, mostly centered around how we all communicate. Our team really came together and figured out new processes and workflows for ourselves and maintained an extremely high bar for our work.

How long have you worked on this show?

Matthew Bramante // I was in New Zealand for nearly six months of prep and shooting.

Rob Price // I started on the pilot in the summer of 2019, so I have been on the project for 2 years.

What’s the VFX shot count?

Rob Price // 994 shots.

What was the size of your team?

Matthew Bramante // Our shooting team consisted of five: an on-set supervisor and data wrangler each for odd and even episodes, and an off-set supervisor. Rob can better speak to the size and composition of the Zoic post team.

Rob Price // About 200 people.

What is your next project?

Matthew Bramante // Currently I’m on location for the filming of a show named Paper Girls for Amazon.

Rob Price // I am currently in postproduction on Midnight Mass and in prep/production on The Midnight Club for Netflix.

What are the four movies that gave you the passion for cinema?

Matthew Bramante // Jurassic Park, Apollo 13, The Lord of the Rings Films, Alien.

Rob Price // The Abyss, Terminator 2: Judgment Day, Jurassic Park, and Toy Story.

A big thanks for your time.

WANT TO KNOW MORE?

Netflix: You can now watch Sweet Tooth on Netflix.

© Vincent Frei – The Art of VFX – 2021