In 2017, Guy Williams explained the work of Weta Digital on GUARDIANS OF THE GALAXY VOL. 2. He talks to us today about his work on GEMINI MAN.

How did you and Weta Digital get involved on this show?

The filmmakers approached us early on to ask whether we felt it was finally possible to make a convincing digital human. This was about two years ago. We felt that we were within striking distance and that the time had come.

How was the collaboration with director Ang Lee and Overall VFX Supervisor Bill Westenhofer?

They are two of the finest people, and I mean this sincerely. Ang is a genuine, kind soul (on top of being a world-class talent), and Bill is the same. We rapidly built a strong working relationship based on collaboration. Once you get to that point, the work flows easily.

What were their expectations and approach about the visual effects?

Ang always has the same expectation: it has to be the best. He pushes people to do more, and to do it better, than they might have planned for. As for the approach, they left a lot of that up to us.

How did you organize the work with your VFX Producer?

I was lucky to have Ben Pickering working with me on this one. He has a remarkable ability to create order out of chaos. We worked hard at the start of the project to work out the best way to manage our time and get the best possible result. We had many bidding and planning meetings early on to make sure that we had a track to follow and that we stayed on it.

The tech specs of the movie are impressive (4K, 120 fps and native stereo). How does that affect your work?

It makes the work a lot harder, I can tell you. We knew this going in, so we were able to plan for it. We did an early end-to-end test where we created a “practice” shot and ran it through the pipeline to find where the pain would be felt the most. This prepared us for the actual production period.

Can you tell us more about the 120 fps challenge and especially about the lack of motion blur?

The lack of motion blur is an interesting one. It is the reason the image looks so sharp (more so than the 4K resolution). Its effect on the process isn’t too bad. In a weird way, it makes some of the work a touch easier (it is easier to roto a sharp edge or track a fast-moving camera). This, however, does not begin to offset the increase in effort for 120 fps (five times the number of frames to work on compared with 24 fps). The extra frames create a huge bump in time for paint, roto and camera. Other departments feel it too, but not quite as badly as those three.
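The “five times” figure is simply the ratio of 120 fps to the traditional 24 fps. A minimal Python sketch of that arithmetic for a hypothetical 4-second shot (the shot length and helper names are illustrative, not taken from the interview):

```python
FILM_FPS = 24     # traditional theatrical frame rate
HFR_FPS = 120     # high-frame-rate delivery discussed above

def frames_for(seconds: float, fps: int) -> int:
    """Number of frames covering `seconds` of screen time at `fps`."""
    return round(seconds * fps)

def frame_multiplier(hfr_fps: int, base_fps: int = FILM_FPS) -> float:
    """How many times more frames the high-frame-rate version contains."""
    return hfr_fps / base_fps

shot_seconds = 4.0  # hypothetical shot length
print(frames_for(shot_seconds, FILM_FPS))  # 96
print(frames_for(shot_seconds, HFR_FPS))   # 480
print(frame_multiplier(HFR_FPS))           # 5.0
```

Every one of those extra frames has to be painted, rotoscoped and tracked, which is why the per-frame departments feel the increase most directly.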

How did you manage so much data, and especially the render times?

Once again, through careful planning from the start. We made estimates based on the end-to-end test. From there, it is a simple matter of making a plan (now an educated one) and sticking to it.
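Extrapolating from a test shot usually comes down to scaling a measured per-frame cost. The sketch below assumes render cost grows linearly with frame count, number of stereo eyes and number of render iterations; the function name, its parameters and the example numbers are hypothetical, not figures from the production.

```python
from typing import Sequence

def estimate_core_hours(
    core_hours_per_frame: float,
    shot_frame_counts: Sequence[int],
    eyes: int = 2,
    versions: int = 1,
) -> float:
    """Extrapolate total render cost from a per-frame cost measured on a test shot.

    Hypothetical planning model: cost scales linearly with the number of frames,
    the number of stereo eyes rendered, and how many times each shot is re-rendered.
    """
    total_frames = sum(shot_frame_counts) * eyes * versions
    return total_frames * core_hours_per_frame

# Illustrative inputs only: three shots at 120 fps (4 s, 5 s and 3 s long),
# 3 core-hours per frame per eye, and 10 render iterations per shot.
shots = [480, 600, 360]
print(estimate_core_hours(3.0, shots, eyes=2, versions=10))  # 86400.0
```

A linear model like this is crude, but it turns the test-shot measurement into a budget that can be checked against as production progresses.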

What was your approach for the younger digital version of Will Smith?

That is a big question. It was one of our first hurdles. We didn’t have access to high-resolution photos or scans of the character we were making. We knew we had to get it right and couldn’t just wing it. In the end, we made a present-day version of Will Smith and used it as our base. We “bent” that model to a younger age, using stills from movies and photos to make sure we got the right “shape”. We also studied the effects of aging to make sure that we were following a legitimate path.

How were the sequences with the two Will Smiths filmed?

We called this an A-B shot. During shooting, Will played the role of Henry and Victor Hugo (an acting partner) played the role of Junior. At the end of the shoot, we set up a mocap stage in Budapest. From there, we flipped the equation: A became B and B became A. In other words, Will was motion captured as Junior and Victor stood in as Henry (although we played the audio from the original shoot of Will talking as Henry).

Can you explain in detail about the creation of Junior?

We start by making a version of Will Smith at his current age, because that is the age for which we have the most reference. It also serves as a good ground truth for all the work that will be done on Junior. From there, we start modifying this version of Will to make him younger. We study everything that happens during aging over that age range, and use reference images and videos to see how those changes match what we see has happened to Will. This is a very iterative process to make sure we get it right. Out the back end of this, we have a young Will Smith. I am glossing over a lot of detail in the process, like the procedural pore generation and simulation, the extended eye pipeline, and coloring the skin with melanin values that absorb wavelengths of light instead of just painting a color map.

How did you create his skin and the various shaders?

Oh, OK, I see what you did there. OK, so the skin. Skin is vastly more complex than a color definition plus a depth-based light-scattering function. For this show, we knew we needed to go much further toward the right result. We painted maps that defined the density of the two sub-types of melanin that determine skin color (eumelanin and pheomelanin). The maps defined how much of each pigment was at each point on the skin, and they also drove the absorption parameter for the pigments. This, combined with standard skin and blood, results in a very accurate color for the skin. This was critical because dark skin has some very view-dependent properties. If we hadn’t done this, it would have looked more like painted latex than skin.
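To make the idea of map-driven pigment absorption concrete, here is a minimal sketch using Beer-Lambert attenuation at a single wavelength. It is not Weta’s shader: the power-law absorption spectra and their constants are placeholder assumptions, and the function names are hypothetical. The two densities stand in for values sampled from the painted eumelanin and pheomelanin maps at one point on the skin.

```python
import math

# Placeholder absorption spectra (per cm) for the two melanin sub-types, modelled as
# power laws in wavelength (nm). The constants are illustrative assumptions,
# not production or measured values.
def absorption_eumelanin(wavelength_nm: float) -> float:
    return 6.6e11 * wavelength_nm ** -3.33

def absorption_pheomelanin(wavelength_nm: float) -> float:
    return 2.9e15 * wavelength_nm ** -4.75

def melanin_transmittance(
    wavelength_nm: float,
    eumelanin_density: float,
    pheomelanin_density: float,
    path_length_cm: float,
) -> float:
    """Beer-Lambert transmittance through a pigmented layer at one wavelength.

    The densities stand in for values sampled from painted density maps.
    """
    mu_a = (eumelanin_density * absorption_eumelanin(wavelength_nm)
            + pheomelanin_density * absorption_pheomelanin(wavelength_nm))
    return math.exp(-mu_a * path_length_cm)

# Hypothetical texel: more eumelanin than pheomelanin, 100-micron layer.
for wl in (650.0, 550.0, 450.0):
    print(wl, round(melanin_transmittance(wl, 0.3, 0.05, 0.01), 3))
```

Because absorption depends on wavelength, the pigment mix sets the color rather than a painted RGB value. A longer `path_length_cm`, as when light crosses the layer at a grazing angle, darkens and shifts the result further, which is one simple way pigment absorption can become view dependent.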

Can you elaborate on the Deep Shapes technology?

I can’t go into too much detail, because that was some sorcery the anim team came up with. The gist of it is that the animation system deals with motion in a somewhat linear manner between certain shapes (the shapes themselves are designed to travel in non-linear ways when possible). The system allows the shapes to be ‘weighted’ by their depth in the skin (hence the “deep” in Deep Shapes), so different parts of the skin can move in slightly different ways. A great example is a blink: the upper eye bag continues to move down for a frame or two after the upper eyelid has started to travel back up. The beauty of the system is that it is elegant enough to get this result everywhere (in other words, we didn’t have to make a ton of shapes just for the eyelid to get that result).
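Deep Shapes itself isn’t described here in enough detail to reproduce. As a loose illustration of the blink example only, the sketch below assumes the simplest possible depth dependence: a tissue layer’s weight curve is the surface (driver) curve delayed by a fractional number of frames proportional to its depth. The function names and the `max_delay_frames` parameter are hypothetical.

```python
def delayed_curve(driver: list[float], delay_frames: float) -> list[float]:
    """Sample `driver` delayed by a (possibly fractional) number of frames,
    linearly interpolating between frames and holding the first value."""
    out = []
    last = len(driver) - 1
    for t in range(len(driver)):
        s = max(t - delay_frames, 0.0)
        i = int(s)
        frac = s - i
        j = min(i + 1, last)
        out.append(driver[i] * (1.0 - frac) + driver[j] * frac)
    return out

def layer_weights(driver: list[float], depth: float, max_delay_frames: float = 2.0) -> list[float]:
    """Hypothetical depth weighting: depth 0.0 follows the driver exactly,
    depth 1.0 lags it by `max_delay_frames`."""
    return delayed_curve(driver, depth * max_delay_frames)

# Upper-lid closure over a blink: peaks at frame 2, reopens from frame 3.
lid = [0.0, 0.5, 1.0, 0.5, 0.0, 0.0]
print(layer_weights(lid, depth=0.0))  # [0.0, 0.5, 1.0, 0.5, 0.0, 0.0]
print(layer_weights(lid, depth=1.0))  # [0.0, 0.0, 0.0, 0.5, 1.0, 0.5]
```

The deep layer keeps moving toward its extreme through frame 4, after the lid has started back up, which mirrors the eye-bag behavior described above. A real system would blend many layers and shapes rather than rely on a single delay.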

Did you use procedural tools for Junior?

Yes, we grew the pores on his face using a complex growth system. This high-resolution mesh could then be simulated with the animation so that the pores collapsed properly along their grain lines.

Can you tell us more about the eyes creation?

Sure. We started with the great eye system we have used for a few years now (proper materials for the iris, the sclera and the choroid). From there, we took some high-res stills of people’s eyes (and eyelids) to see why the eye still sometimes comes across as doll-like. We found there were a few more things we needed to account for. First, the eye isn’t a sphere; it is actually squished into the socket, which lets it fill the socket properly without having to modify the socket shape. Second, we changed the shape and shaders of the eyelids to make sure they had the proper soft-tissue connection to the eyeball (this was a huge step). Lastly, we realized that the coloration at the corner of the eye (the outer corner, that is) comes from a tissue layer called the conjunctiva. We added this extra layer to the surface of the eye so we could get the proper coloration across the surface of the sclera.

Can you explain in detail about the Junior animation and especially about his face?

This starts with getting a good performance from Will Smith during mocap. The animation team then runs that through the facial solver to get a good first pass at the motion of his face. This is where the animators’ artistry kicks in. The solve gets you a good start, but it is by no means good enough to be final. The anim team looks at the reference cameras and helmet cam and starts to work on the differences. This isn’t just about looking for what is off; you almost have to look at what you feel. The differences we are talking about are insanely subtle. It is critical, though, because being off by a quarter of a millimetre can be the difference between suspicious and concerned. To be faithful to the performance, you have to scrutinize the facial result in great depth.

How did you manage the lighting challenges?

Good planning, good tools and good acquisition on set. We captured the spectral profiles of all the lights in the light kit (as well as the sun on each setup). We captured an extended HDRI of bright light sources, giving us 24 stops of light instead of the standard 12. We then made sure that our tools supported this extra information (our renderer is spectral, but we had to extend the light kit so that it passed spectral profiles to the renderer).
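Since each photographic stop is a doubling of light, going from 12 to 24 stops does not double the captured range; it multiplies it by 2^12, or 4,096. A short Python check of that arithmetic (the function name is illustrative):

```python
def contrast_ratio(stops: float) -> float:
    """Each stop doubles the light, so N stops span a 2**N : 1 range."""
    return 2.0 ** stops

standard = contrast_ratio(12)  # 4096.0 : 1
extended = contrast_ratio(24)  # 16777216.0 : 1
print(extended / standard)     # 4096.0
```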

Which shot or sequence was the most challenging?

I don’t think any one shot fit this bill. Any shot with a very subtle performance was hard to do. We found that if you get even one shape in one part of the animation wrong, it brings the whole result down. Likeness was very fragile in motion. One of the animators described likeness as a razor’s edge that we always had to stay on.

Is there something specific that gives you some really short nights?

Umm, the circus in Washington?

What is your favorite shot or sequence?

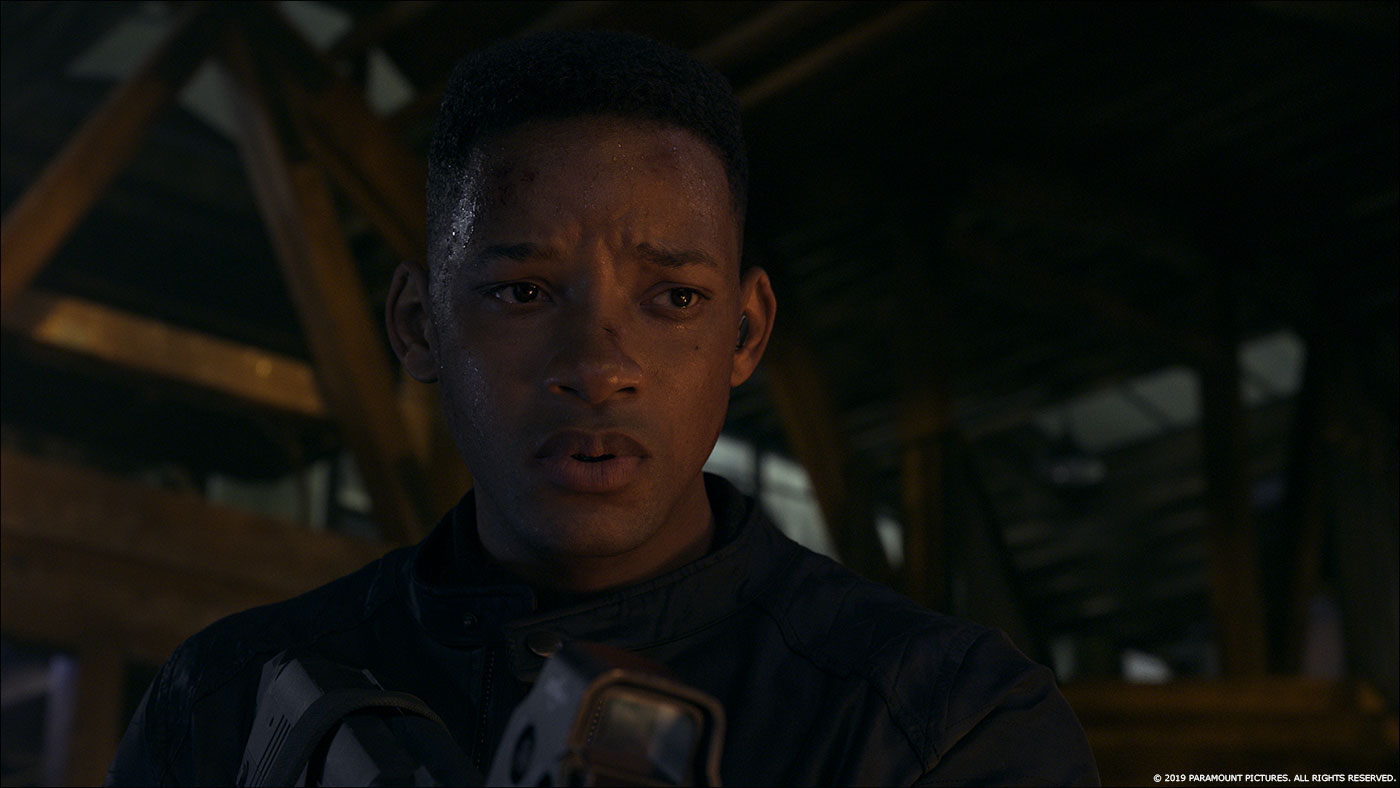

I love the sequence in the tunnel where Henry tells Junior he is a clone. It has some beautiful, subtle performance from Will.

What is your best memory on this show?

Working with Ang Lee. He is truly a fantastic director (we all know that), but he is also a truly nice human being.

How long have you worked on this show?

Just over two years.

What’s the VFX shots count?

Weta worked on just under 600 shots.

What was the size of your team?

At any one time, there were around 200 to 300 people working on the show, but over the whole course of the show, 600 people worked on it.

What is your next project?

It is also interesting; that is all I can say about it at this time.

A big thanks for your time.

WANT TO KNOW MORE?

Weta Digital: Dedicated page about GEMINI MAN on Weta Digital website.

© Vincent Frei – The Art of VFX – 2019
